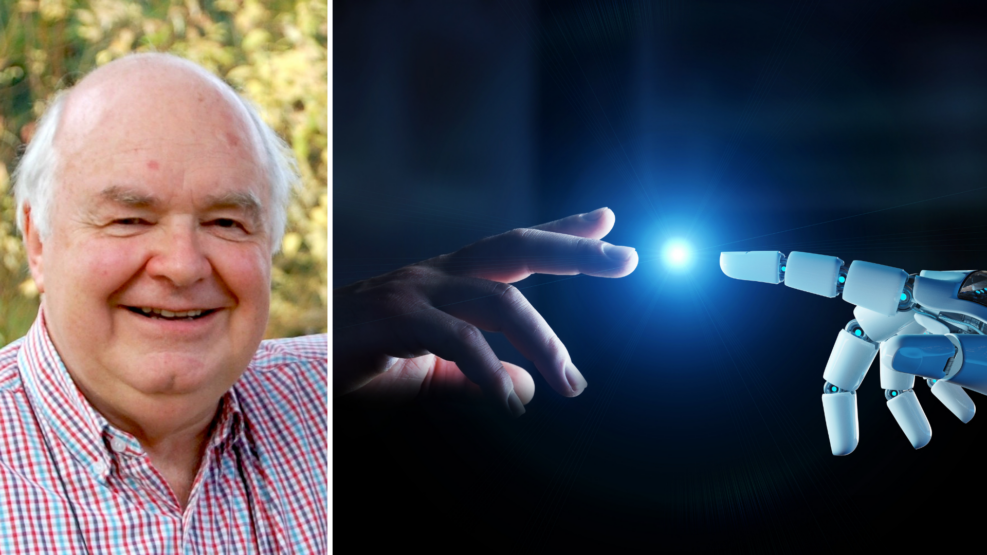

Bingecast: Selmer Bringsjord on the Lovelace Test

The Turing test, developed by Alan Turing in 1950, is a test of a machine’s ability to exhibit intelligent behaviour indistinguishable from that of a human. Many think that Turing’s proposed test for intelligence, and especially for creativity, has proven inadequate. Is the Lovelace test a better alternative? What are the capabilities and limitations of AI? Robert J. Marks and Dr. Selmer Bringsjord discuss…