Is Ray Kurzweil’s Singularity Nearer or Still Impossible?

AI might help us unlock our potential, a panel concludes, but it won’t take over

A panel of experts wrestled with that question in a recent discussion. They were responding to the famed futurist’s prediction at the COSM 2019 Technology Summit that we will merge with our computers by 2045: the Singularity. “Our intelligence will then be a combination of our biological and non-biological intelligence,” he explained. We will then be apps of our smart computers.

Kurzweil, a Director of Engineering at Google, should be taken seriously. He boasts a 30-year track record of accurate predictions and many key patents. That said, many predictions fail because the prophet overlooks the fact that the changes required are of nature, not of scale. Changes of nature sometimes prove impossible, often for unforeseen reasons. For example, the panelists at the followup discussion, “AI’s Role in Unlocking Human Potential,” raised a number of objections:

● Oren Etzioni, CEO of the Allen Institute for Artificial Intelligence (brainchild of Paul G. Allen, Microsoft co-founder), cautioned against hype about superhuman AI: “Exponentials are very important. If we extrapolate exponentials, we can be exponentially wrong.” He made a distinction, for example, between retrieval of data and understanding it: “To take one tidbit from his talk, he talked about the ability of computers to read 100 million sentences. But they actually only retrieve words.” AI may help us understand but it doesn’t itself understand.

Etzioni also reflected on the strictly limited focus of artificial intelligence achievements. “It taught itself to play chess in four hours? But that was in 2018. It was structured to play chess. What has it done for us lately?” Generally, AI achievements are one-offs; that same program does not go on to make headway against cancer or COVID-19.

● George Montañez, Assistant Professor of Computer Science at Harvey Mudd College, took issue with Kurzweil’s claim that AlphaGoZero needed no instructions to beat humans at the game of Go: “For a system like this to work, a human must define the incentive structure, also encoding the assumptions.” The sheer power of a computing system does not cause it to do anything at all. Programmers must define both the goal and the outcome. Afterwards, he noted, flush with success, the programmers may believe themselves when they say, “Oh, I had no hand in it.” But nothing at all would have happened apart from their efforts.

The machine does not “want” to play Go in the sense that a fish wants to catch a worm or the worm wants to escape. As MIT’s David Gelernter put it, “It plays the game for the same reason a calculator adds or a toaster toasts: because it is a machine designed for that purpose.” With its powerful computing abilities, it is an artificial extension of the programmers’ abilities. Getting around that problem is not a matter of adding to the computing power.

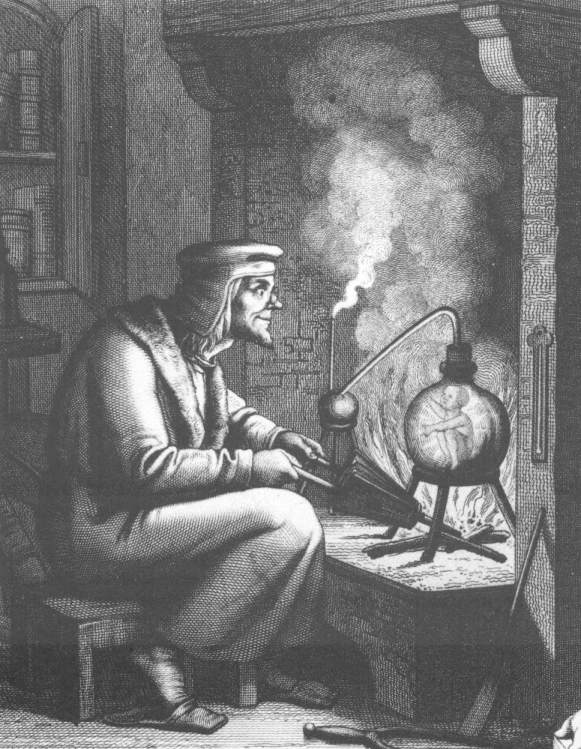

● Robert J. Marks, director of the Walter Bradley Center for Natural and Artificial Intelligence, offered a historical take on Kurzweil’s claims. One of the goals of early modern alchemy was the creation of a “homunculus,” a manufactured human that can think for us. As we began to understand nature better, alchemists became chemists and abandoned such pursuits in favor of compiling the periodic table of the elements. “I believe that the Singularity will live in history as like the homunculus,” he predicted.

● On a practical note, moderator Matt McIlwain, Managing Director of the Madrona Venture Group, asked, “What are the constraints to more, faster progress for the AlphaGos of the world?”

Montañez responded, “What AI and machine learning systems are doing is really revolutionary. But our systems are only as good as the data that goes into them. We don’t necessarily know how biased our data systems are.” He cited Amazon’s recent widely-publicized misfortunes with a short-lived AI hiring system which turned out to be biased against women.

Google could tell a similar tale about entirely unintended racism in its images program. The problem was traced to deficiencies in machine vision. The machines don’t think; they simply reflect a mass of data scarfed into them from a variety of sources—many of which a thoughtful human might have omitted. This problem dogs banking (decisions about credit) and law enforcement (decisions about bail and early release) as well. As Montañez put it, “It’s almost as if we had a central nervous system without a brain.”

● Etzioni warned, in any event, “Don’t mistake a clear view for a short distance.” What if a media release went out, saying, “We are going to release an artificial scientist!” Most people would sense a problem with the claim, he said. There is more to what science personnel do than a machine can handle. For one thing, creativity is important for advances in the sciences and it’s one thing that machines don’t really do.

● As Marks put it, non-algorithmic things (things that cannot be calculated), “cannot be uploaded.” Human consciousness, little as we understand it, appears to be one of those non-algorithmic things.

The panel was held at COSM, A National Technology Summit: AI, Blockchain, Crypto, and Life After Google (October 23–25, 2019), which was sponsored by the Walter Bradley Center for Natural and Artificial Intelligence and hosted by technology futurist George Gilder.
