Can an Algorithm Be Racist?

No, the machine has no opinion. It processes vast tracts of data. And, as a result, the troubling hidden roots of some data are exposed.

In 2016, following up on a U.S. government report, AI researchers identified a blind spot in the systems that produce predictions by crunching masses of data:

AI will not necessarily be worse than human-operated systems at making predictions and guiding decisions. On the contrary, engineers are optimistic that AI can help to detect and reduce human bias and prejudice. But studies indicate that in some current contexts, the downsides of AI systems disproportionately affect groups that are already disadvantaged by factors such as race, gender and socio-economic background.

Kate Crawford & Ryan Calo, “There is a blind spot in AI research” at Nature

Some mistakes have been pretty embarrassing:

One notable and recent example was Google admitting in January that it couldn’t find a solution to fix its photo-tagging algorithm from identifying black people in photos as gorillas, initially a product of a largely white and Asian workforce not able to foresee how its image recognition software could make such fundamental mistakes. (Google’s workforce is only 2.5 percent black.) Instead of figuring out a solution, Google simply removed the ability to search for certain primates on Google Photos. It’s those kinds of problems — the ones Google says it has trouble foreseeing and needs help solving — that the company hopes its contest can try and address.

Nick Statt, “Google is hosting a global contest to develop AI that’s beneficial for humanity” at The Verge (2018)

It’s tempting to assume that a villain lurks behind such a scene, when the real problem is the exact opposite: a system dominated by machines is all calculations, with no thoughts, intentions, or choices. If the input is wrong, so is the output. There is no one “in there” to say, “Stop, wait, this is just wrong; that can’t be the right answer.”

Human-caused biases include prejudice. But there are also sample, measurement, and algorithm biases, any of which may produce unfair or unrepresentative output if not monitored carefully:

AI algorithms are built by humans; training data is assembled, cleaned, labeled and annotated by humans. Data scientists need to be acutely aware of these biases and how to avoid them through a consistent, iterative approach, continuously testing the model, and by bringing in well-trained humans to assist.

Glen Ford, “4 human-caused biases we need to fix for machine learning” at The Next Web
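Sample bias, at least, can be checked for. A minimal sketch, assuming we know each training example’s group and have a trusted figure for each group’s share of the population (both invented here for illustration), simply compares the two:

```python
from collections import Counter

def representation_gap(train_groups, population_shares):
    """For each group, return its share of the training data minus its
    share of the reference population. Negative values mean the group is
    under-represented in the training data."""
    counts = Counter(train_groups)
    total = len(train_groups)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in population_shares.items()
    }

# Hypothetical toy numbers, for illustration only.
train = ["A"] * 80 + ["B"] * 15 + ["C"] * 5
population = {"A": 0.60, "B": 0.25, "C": 0.15}

for group, gap in representation_gap(train, population).items():
    print(f"{group}: {gap:+.2f}")
```

A gap does not prove that a model will be unfair, and the absence of one does not prove it will be fair; it is just one of the routine checks that the consistent, iterative approach described above calls for.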

In 2017, Yonatan Zunger, a Distinguished Engineer at Humu, offered some broader insights on how these things really happen:

I’ll give you one spoiler to start with: most of the hardest challenges aren’t technological at all. The biggest challenges of AI often start when writing it makes us have to be very explicit about our goals, in a way that almost nothing else does — and sometimes, we don’t want to be that honest with ourselves…

Machine-learned models have a very nasty habit: they will learn what the data shows them, and then tell you what they’ve learned. They obstinately refuse to learn “the world as we wish it were,” or “the world as we like to claim it is,” unless we explicitly explain to them what that is — even if we like to pretend that we’re doing no such thing.

Yonatan Zunger, “Asking the Right Questions About AI” at Medium

He offers the example of high school student Kabir Alli’s 2016 Google image searches for “three white teenagers” and “three black teenagers.” The search for white teens turned up stock photography for sale, while the search for black teens turned up local media stories about arrests. The ensuing anger over deep-seated racism obscured the fact that the algorithm’s output was not a decision someone made. It was an artifact of what people had been publishing: “When people said ‘three black teenagers’ in media with high-quality images, they were almost always talking about them as criminals, and when they talked about ‘three white teenagers,’ they were almost always advertising stock photography.”

The machine only shows us what we ask for; it doesn’t tell us what we should wonder about. Zunger notes that, of course, “Nowadays, either search mostly turns up news stories about this event.” That makes it difficult to tell whether anything has changed.

There is no simple way to automatically remove bias, because much of it comes down to human judgment. Most Nobel Prize winners are men, but a thoughtful human being will not assume that a winner “must be” a man. A machine learning system “knows” nothing other than the data input. It certainly doesn’t “know” that it might be creating prejudice or giving offense. If we want to prevent that, we must constantly monitor its output.
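The point can be made concrete with the crudest learner imaginable. The sketch below is a hypothetical illustration, not any real system: trained on a skewed toy list of labels (invented numbers, not actual Nobel statistics), it simply predicts whichever label was most common.

```python
from collections import Counter

class MajorityClassModel:
    """The simplest possible learner: it memorizes the most frequent label
    in its training data and predicts that label for every input."""

    def fit(self, labels):
        self.prediction = Counter(labels).most_common(1)[0][0]
        return self

    def predict(self, _example):
        # The input is ignored: the model "knows" only label frequencies.
        return self.prediction

# Hypothetical, skewed toy training labels (not real Nobel statistics).
winner_genders = ["man"] * 9 + ["woman"]
model = MajorityClassModel().fit(winner_genders)
print(model.predict("next year's physics laureate"))  # man
```

On this toy data the model is right 90 percent of the time, which looks respectable, yet it is wrong about every woman. Nothing in the accuracy figure flags the problem; only a human reviewing the output for a particular group would notice.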

Zunger was the technical leader for Google’s social efforts in July 2015, with responsibility for photos, when he got the call that Google’s photo indexing system had publicly described a black man and his friend as “gorillas.” What had gone wrong?

First, facial recognition is much more difficult than we are sometimes told. White people, Zunger says, were also often misidentified, perhaps as dogs or seals. When the machine learning system made those errors, they didn’t matter much. But, as he says, “nobody thought to explain to it the long history of Black people being dehumanized by being compared to apes. That context is what made this error so serious and harmful, while misidentifying someone’s toddler as a seal would just be funny.”
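Standard accuracy metrics treat every mistake alike, which is exactly the blind spot Zunger describes. A minimal sketch with invented error counts and invented harm weights (the weights are the assumption a human has to supply; the model cannot derive them) shows two models with identical error rates but very different consequences:

```python
# Hypothetical error counts for two image-labeling models evaluated on the
# same 1,000 photos. Both make exactly 20 mistakes.
errors_model_1 = {("person", "seal"): 20}
errors_model_2 = {("person", "seal"): 15, ("person", "ape"): 5}

# Hypothetical harm weights chosen by humans: most confusions are merely
# embarrassing, but one carries a long history of dehumanization.
HARM = {("person", "seal"): 1, ("person", "ape"): 1000}

def error_rate(errors, n_photos=1000):
    """Fraction of photos mislabeled, counting every mistake the same."""
    return sum(errors.values()) / n_photos

def weighted_harm(errors):
    """Total harm, weighting each kind of mistake by its human-assigned cost."""
    return sum(count * HARM[pair] for pair, count in errors.items())

for name, errs in [("model 1", errors_model_1), ("model 2", errors_model_2)]:
    print(name, error_rate(errs), weighted_harm(errs))
```

Both models are 98 percent accurate. Only the human-assigned weights reveal that the second is far worse, and choosing those weights requires exactly the cultural and historical context the system lacks.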

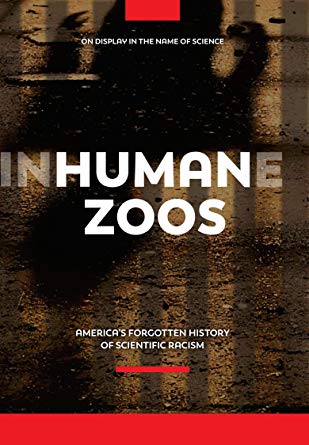

Human Zoos: America’s Forgotten History of Scientific Racism should be required viewing for that algorithm, except that algorithms don’t understand what they see. And there is no simple, external way to predict cultural sensitivities globally and permanently. Sensible rules cannot be derived without frequent human input:

Humans deal with this by having an extremely general intelligence in their brains, which can handle all sorts of concepts. You can tell it that it should be careful with its image recognition when it touches on racial history, because the same system can understand both of those concepts. We are not yet anywhere close to being able to do that in AI’s.

Yonatan Zunger, “Asking the Right Questions About AI” at Medium

Of course, some problems with AI and racial bias are not simple errors. Consider COMPAS, a system used to predict which criminal defendants would be more likely to commit further crimes. As the Digital Ethics Lab at Oxford University explains, “When a team of journalists studied 10,000 criminal defendants in Broward County, Florida, it turned out the system predicted that black defendants pose a higher risk of recidivism than they actually do in the real world while predicting the opposite for white defendants.” What went wrong there?

Zunger accepts that COMPAS’s programmers made reasonable efforts to eliminate racial bias. The trouble was, their model didn’t predict what they thought it was predicting:

It was trained to answer, “who is more likely to be convicted,” and then asked “who is more likely to commit a crime,” without anyone paying attention to the fact that these are two entirely different questions. (COMPAS’ not using race as an explicit input made no difference: housing is very segregated in much of the US, very much so in Broward County, and so knowing somebody’s address is as good as knowing their race.)

Yonatan Zunger, “Asking the Right Questions About AI” at Medium
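Zunger’s parenthetical point about addresses can be sketched directly. Assuming a toy, heavily segregated population (invented data with placeholder zip codes, not figures from Broward County), the function below predicts each person’s group from nothing but their zip code:

```python
from collections import Counter, defaultdict

def proxy_accuracy(records):
    """How well can `group` be recovered from `zip_code` alone?
    Predict each person's group as the most common group in their zip code,
    then return the fraction of people predicted correctly."""
    by_zip = defaultdict(Counter)
    for zip_code, group in records:
        by_zip[zip_code][group] += 1
    majority = {z: counts.most_common(1)[0][0] for z, counts in by_zip.items()}
    correct = sum(majority[z] == g for z, g in records)
    return correct / len(records)

# Hypothetical, heavily segregated toy neighborhoods.
records = (
    [("zip_1", "X")] * 95 + [("zip_1", "Y")] * 5
    + [("zip_2", "Y")] * 90 + [("zip_2", "X")] * 10
)
print(proxy_accuracy(records))  # 0.925
```

Leaving race out of the inputs therefore removes very little: any model given the address can implicitly learn much the same distinction.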

Every day across North America, many crimes go unreported, for a variety of reasons. But African Americans were more likely than others to be stopped and questioned, and then charged, tried, and convicted. So they entered the records at a higher rate, regardless of how often they actually offended. COMPAS reflected that fact but did not reflect on it.
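The arithmetic of that distortion is simple. In this hypothetical sketch (all rates invented for illustration), two equal-sized groups offend at exactly the same rate, but one is twice as likely to be caught and convicted for each offense:

```python
def recorded_convictions(population, offense_rate, p_caught_and_convicted):
    """Expected number of offenders who end up in the conviction records."""
    return population * offense_rate * p_caught_and_convicted

# Hypothetical: two groups of equal size offend at the SAME rate, but members
# of group A are stopped, charged, and convicted twice as often per offense.
group_a = recorded_convictions(10_000, 0.05, 0.40)
group_b = recorded_convictions(10_000, 0.05, 0.20)
print(group_a, group_b, group_a / group_b)
```

Group A shows twice as many convictions, so a model trained to predict conviction will score it as twice as “risky,” even though, by construction, the two groups behave identically.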

But that is part of a bigger issue as well, as Zunger reminds us: “there is often a difference between the quantity you want to measure, and the one you can measure.” For example, we might want to know how many people drive while impaired. However, for hard data, we must be content with the number of people convicted of impaired driving, a different and probably much lower figure. What’s more important for our purposes is that the biographical information we get about impaired drivers derives only from this smaller set of drivers who were convicted. So the data describe convicted impaired drivers, not impaired drivers in general, and will never fully capture reality.
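Selection of this kind changes not only the count but the profile. In the hypothetical sketch below (invented ages, and an assumed enforcement pattern that happens to catch younger drivers more often), the records describe a noticeably different group from the one we actually want to study:

```python
from statistics import mean

# Hypothetical impaired drivers: (age, was_convicted). The assumption, for
# illustration only, is that enforcement catches younger drivers more often.
impaired_drivers = [
    (19, True), (22, True), (24, False), (31, True),
    (38, False), (45, False), (52, False), (60, False),
]

everyone = [age for age, _ in impaired_drivers]
convicted = [age for age, was_convicted in impaired_drivers if was_convicted]

print(len(everyone), mean(everyone))    # the group we want to know about
print(len(convicted), mean(convicted))  # the group the records describe
```

The records show three drivers averaging 24 years old; the full group is eight drivers averaging about 36. Any system trained only on the conviction records inherits that skew.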

Facts like that make a great deal of difference to what we can expect artificial intelligence to do. It will never reflect more than we can measure, and the misleading answers we get from it are a reflection of inescapable problems of data gathering, not the output of a Universal Answers Machine.

So, can an algorithm be racist? The output has no opinion. As always, it’s the input we must be concerned with. So we find ourselves back at the beginning, seeing things perhaps a little more clearly.

See also: Did AI teach itself to “not like” women?
