Category: Machine Learning

“Artificial” Artificial Intelligence

What happens when AI needs a human I?

Artificial intelligence often fails at crucial points. It must then be supplemented by human intelligence. Many software systems that look to their users like pure advanced artificial intelligence hide a lot of human effort behind a technological mask.

Slaughterbots: How far is too far?

And how will we know if we have crossed a line?

Karl Marx’s Eerie AI Prediction

He felt that capitalism would fall when machines replaced human labor

Imagining Life after Google

Reviewers of George Gilder's new book weigh in

If we have simply taken for granted the big software, hardware, and social media companies that dominate our lives, the reactions from the business world to Life after Google: The Fall of Big Data and the Rise of the Blockchain Economy should give us a lot to think about.

Robogeddon!! Pause.

Wait. This just in: AI is NOT killing all our jobs

Ethics for an Information Society

Because machines can’t learn to solve their own ethical problems

AI Tools for Mass Manipulation?

Machine learning can unleash a perfect storm of malice, experts warn

Sometimes the ‘Bots Turn Out To Be Humans

That “lifelike” effect was easier to come by than some might think

Will AI Liberate or Enslave Developing Countries?

Perhaps that depends on who gets there first with the technology

Who Creates Information in a Market?

Do the algorithms behind exchange-traded funds (ETFs) make personally gathering information obsolete?

Algorithmic strategies can only be as good as the information that goes into them. Ignoring how the information is created causes us to misunderstand the dynamics of value creation. Algorithms can leverage information; they can’t create it.

Why machines can’t think as we do

As philosopher Michael Polanyi noted, much that we know is hard to codify or automate

Why Can’t Machines Learn Simple Tasks?

They can learn to play chess more easily than to walk

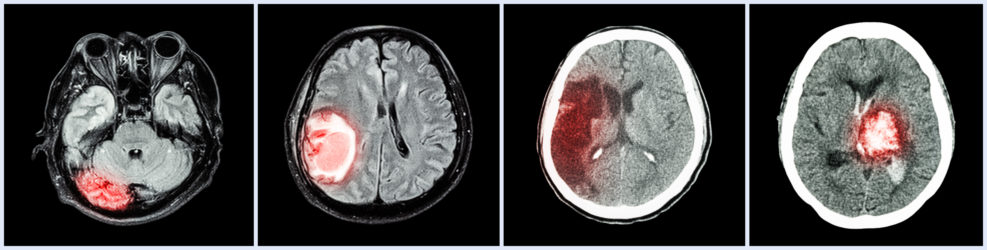

Better medicine through machine learning?

Data can be a dump or a gold mine

AI Is Not (Yet) an Intelligent Cause

So-called “white hat” hackers who test the security of AI have found it surprisingly easy to fool.

The driverless car: A bubble soon to burst?

Car expert says journalists too gullible about high tech

Why do we constantly hear that driverless, autonomous vehicles will soon be sharing the road with us? Wolmar blames “gullible journalists who fail to look beyond the extravagant claims of the press releases pouring out of tech companies and auto manufacturers, hailing the imminence of major developments that never seem to materialise.”

GIGO alert: AI can be racist and sexist, researchers complain

Can the bias problem be addressed? Yes, but usually after someone gets upset about a specific instance.

From James Zou and Londa Schiebinger at Nature:

“When Google Translate converts news articles written in Spanish into English, phrases referring to women often become ‘he said’ or ‘he wrote’. Software designed to warn people using Nikon cameras when the person they are photographing seems to be blinking tends to interpret Asians as always blinking. Word embedding, a popular algorithm used to process and analyse large amounts of natural-language data, characterizes European American names as pleasant and African American ones as unpleasant.”

Now where, we wonder, would a mathematical formula have learned that? Maybe it was listening to the wrong instructions back when it was just a tiny bit? Seriously, machine learning, we are told, depends on absorbing datasets of …