Can machines really learn?

A parable of a book that learned

“Machine learning” is a hot field, and tremendous strides are being made in programming machines to improve as they work. Such machines work toward a goal, in a way that appears autonomous and seems eerily like human learning. But can machines really learn? What happens during machine learning, and is it the same thing as human learning?

What really happens during the “learning” process is obscured both by the complexity of the algorithms behind machine learning and by the technical jargon of computer science. It is therefore useful to consider the principles that underlie machine learning in a simplified way, to see what we really mean by such “learning.”

A book is a type of simple machine. It is a tool we use to store and retrieve information, analogous in that respect to a computer. The paper, the glue, and the ink are the book’s hardware. The information in the book is the software. The programmer is the author and the user is the reader. Can a book learn in the way that machines learn?

Of course it can, in one sense.

Let us say that a teacher writes a reference book for mathematics for his students. He keeps a single copy of the book on a table in class for students to consult on their own, when they want to learn more about a mathematical topic. The book is a compilation of chapters—chapters on addition, subtraction, multiplication, division, calculus, complex numbers, etc. Students are given free time to go to the table and flip through the book, to deepen their knowledge of topics about which they need extra help.

Over time, the book will wear. Its binding will “crack” in places where many students have opened it. Because the binding is cracked in those places, it will tend to open on chapters on the very topics that many students need help with—multiplication tables or calculus, for example.

As the semester goes on, students find that the book naturally falls open more and more often at frequently consulted chapters. It seems as if the book has “learned” what topics students need help with most often. It naturally becomes a more effective aid to learning mathematics because it opens more readily on the chapters students need, even though no one set out to crack the binding at those points.

Now suppose that the teacher wants the students to focus on specific chapters that they are not choosing on their own. He could purposely crack the binding of the book on those chapters, so that the book opens more readily on topics he wants them to learn. Now, when they browse the book, they tend to find the chapters he thinks are most relevant. His action makes the book a more effective tool for systematic learning—learning in a way that is “programmed” by the teacher, so to speak.

But what if the teacher has thought of a way to make the book even more effective? When he replaces the copy of the book, he asks the bookbinder to reduce the amount of glue on the binding. Now the binding “cracks” on relevant topics even more easily. This seems to enhance the book’s effectiveness, making it an even better tool on account of its original design.

So here we have a simple but clear case of “machine learning.” With use, the book directs readers to topics of relevance. This “machine learning” can be intentional—the teacher can crack the binding himself. Or it can be unintentional—the students can inadvertently crack the binding by repeated use. And the book can even be “programmed” to learn more readily by making the binding weaker, which makes cracking (and thus marking) of relevant chapters easier.

Machine learning using computers works on the same principles. The properties of the program are such that repeated use reinforces certain outcomes and suppresses other outcomes. It may be deliberate or inadvertent. It can be quite effective, by design.
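The reinforcement at work in the book can be made concrete with a small sketch in Python. It is only a toy model under stated assumptions: the class `Book`, the `glue` parameter, and the rule that each consultation adds `1 / glue` to a chapter's wear are all invented here for illustration. They are not taken from any real machine learning library, and real systems are far more elaborate.

```python
import random


class Book:
    """Toy model of the essay's book: a binding that cracks with use."""

    def __init__(self, chapters, glue=1.0):
        # `glue` is a hypothetical knob: less glue means each use cracks
        # the binding more, so the book changes faster.
        if glue <= 0:
            raise ValueError("glue must be positive")
        self.glue = glue
        # Every chapter starts with the same wear, so a new book has no
        # preferred place to fall open.
        self.wear = {chapter: 1.0 for chapter in chapters}

    def consult(self, chapter):
        """A student (or the teacher) opens the book at `chapter`."""
        self.wear[chapter] += 1.0 / self.glue

    def open_probabilities(self):
        """Chance that the book falls open at each chapter."""
        total = sum(self.wear.values())
        return {chapter: w / total for chapter, w in self.wear.items()}

    def fall_open(self, rng=random):
        """Let the book fall open; more worn chapters are more likely."""
        chapters = list(self.wear)
        weights = [self.wear[c] for c in chapters]
        return rng.choices(chapters, weights=weights, k=1)[0]


book = Book(["addition", "multiplication", "calculus"])
for _ in range(8):
    book.consult("calculus")       # students keep looking up calculus
book.consult("multiplication")     # one student checks multiplication

probs = book.open_probabilities()
print(probs["calculus"])           # 0.75
```

After eight consultations the wear on the calculus chapter is 9 out of a total of 12, so the book falls open there three times out of four. Nothing in the sketch understands calculus: a number is incremented and a ratio is recomputed. Lowering `glue` only makes each increment larger.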

Is it accurate to say that the book with the cracked binding “learns” by repeated use? Of course not, unless we are using the term “learns” as a metaphor, the way we might say that our new sneakers are “learning” to fit our feet. Books can’t think, so they can’t learn. With proper design, that is, proper hardware (the binding) and proper software (the relevant information in the chapters), patterns of use are reinforced and suppressed. But the machine (the book) itself doesn’t learn. Rather, the book with the cracked binding serves as a tool by which the reader learns.

More generally, “machine learning” is a metaphor for user learning that is facilitated by the design of the machine. Machines—books or computers—are merely physical tools. They don’t have minds, and only things with minds can learn. Users of machines—humans—can learn, and proper design of machines can enhance human learning. Machine learning is a metaphor for machine-assisted human learning, using machines that change function over time. These changes may be unintentional, or they may be part of the design of the machine.

Machine learning is a powerful and important tool that is likely to be of great value (and perhaps great risk) to man. Machines can be designed to change with time, but it is man, and only man, who learns.

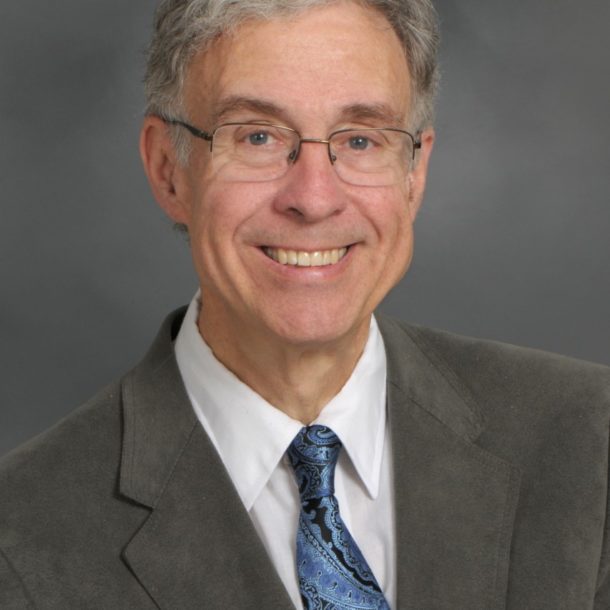

Michael Egnor is a neurosurgeon, professor of Neurological Surgery and Pediatrics, and Director of Pediatric Neurosurgery, Neurological Surgery, Stony Brook School of Medicine.