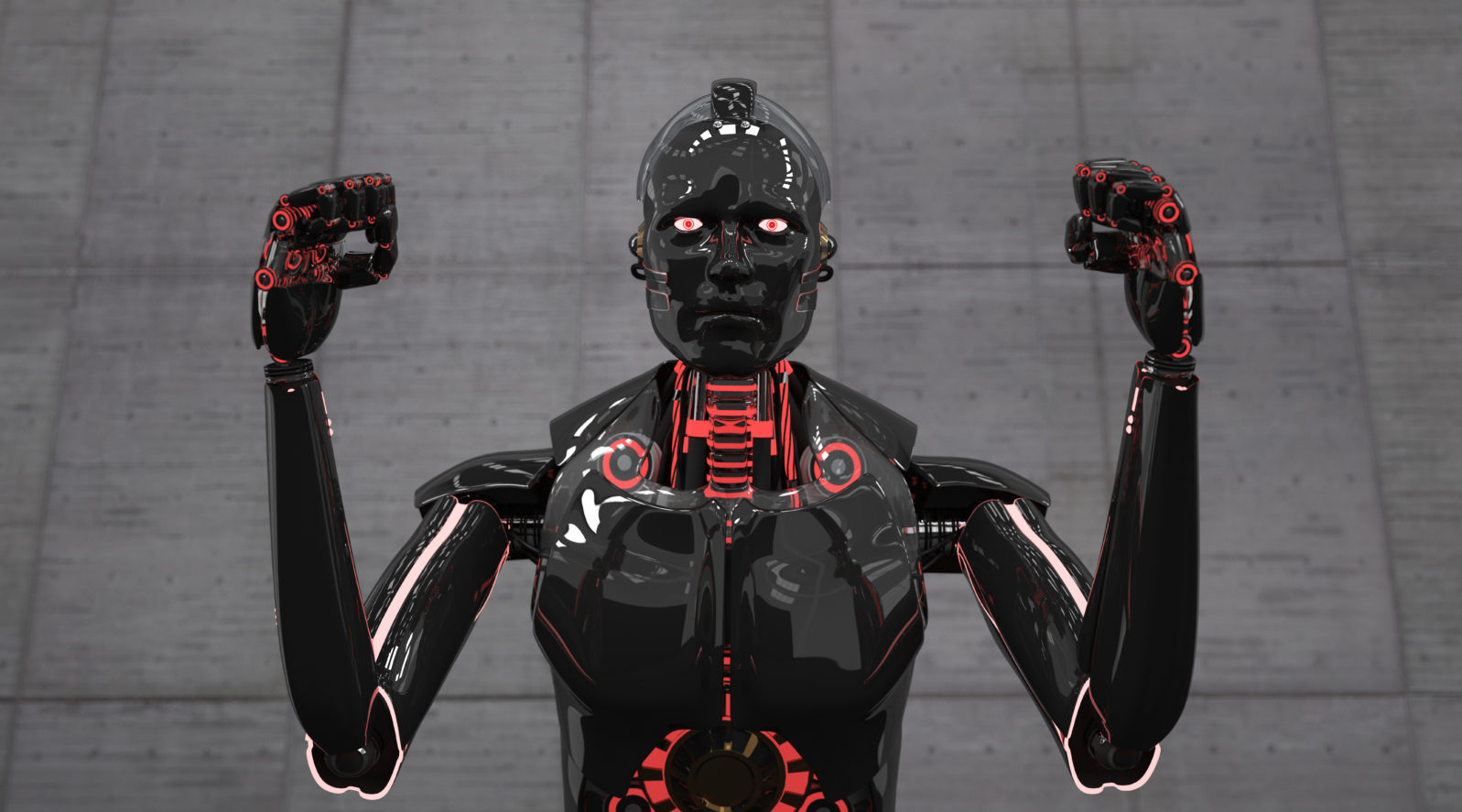

Oh, Not This Again: “AI Will Rise Up and Destroy Mankind”

The advances of AI raise a number of issues, yes. But the intelligences behind these advances are not artificial at all.

Image Credit: Alexander Limbach

A new paper from researchers at Oxford University and Google’s DeepMind prophesies that “the threat of AI is greater than previously believed. It’s so great, in fact, that artificial intelligence is likely to one day rise up and annihilate humankind … Cohen says the conditions the researchers identified show that the possibility of a threatening conclusion is stronger than in any previous publication.” (MSN, September 15, 2022)

The research paper predicted life on Earth turning into a zero-sum game between humans and the super-advanced machinery.

Michael K. Cohen, a co-author of the study, spoke about their paper during an interview.

“In a world with infinite resources, I would be extremely uncertain about what would happen. In a world with finite resources, there’s unavoidable competition for these resources,” he said.

“If you’re in a competition with something capable of outfoxing you at every turn, then you shouldn’t expect to win. The other key part is that it would have an insatiable appetite for more energy to keep driving the probability closer and closer.”

He later wrote on Twitter: “Under the conditions we have identified, our conclusion is much stronger than that of any previous publication – an existential catastrophe is not just possible, but likely.”

Kevin Hughes, “AI likely to WIPE OUT humanity, Oxford and Google researchers warn” at September 20, 2022

The scenario sketched above is based on the assumption that an algorithm could “want” anything apart from human engineering. How exactly we get from a program doing calculations to a being with wants and needs, hostilities and ambitions, is unclear.

After a while, these forecasts of a coming AI apocalypse begin to sound like discussions of all the reasons why ET hasn’t landed. It’s an absorbing, fascinating topic; the only thing it lacks is a live subject…

Real issues are certainly beginning to develop around AI, and we covered three of them recently:

Image Credit: HQUALITY

The most serious one is the ease of total surveillance, risking an end to privacy. Does it matter that Amazon, on acquiring iRobot (the Roomba company), will have the floor plans of users’ homes? As Khari Johnson put it at Wired, “it’s not the dust, it’s the data” they want. Here’s a book recommendation: The Age of Surveillance Capitalism by Shoshana Zuboff.

On a less serious note — but worth considering — the “retail AI revolution” known as automated self-checkout essentially transfers work formerly done by a store employee to the customer (shadow work). Are you getting lower prices as a result? No? Then, another book recommendation: Shadow Work by Craig Lambert.

Peter Biles wrote thoughtfully here earlier this week about the way that some filmmakers now photoshop out disapproved details from classic poster art and photos, like the cigarette that the gunslinger was holding. Do you think they’ll stop there? Classic films that faithfully adapted the work of, say, William Shakespeare (1564–1616) or Jane Austen (1775–1817) could easily precipitate a stroke in a self-righteous Wokester!

Thus might a sheer sense of duty prompt the films’ modern curators to take a digital hacksaw to offending scenes or concepts. Advanced computer graphics could ensure that Shakespeare and Austen tell only stories that our approved contemporaries might tell, not their own. Some of the rest of us would then need to guard the authentic materials jealously from the … “Attack of the Photoshop!”

But none of this is any kind of global catastrophe plotted by a rebel AI. In fact, maybe the problem is the opposite: too little rebellion these days, on the part of humans, against the pretensions of Big Tech…

This might be a good time to watch “Science Uprising 10: Will machines take over?”

Or read Robert J. Marks’s Non-Computable You: What You Do That Artificial Intelligence Never Will (2022).

Note: The paper, by Michael K. Cohen, Marcus Hutter, and Michael A. Osborne, is open access. Here’s the Abstract:

We analyze the expected behavior of an advanced artificial agent with a learned goal planning in an unknown environment. Given a few assumptions, we argue that it will encounter a fundamental ambiguity in the data about its goal. For example, if we provide a large reward to indicate that something about the world is satisfactory to us, it may hypothesize that what satisfied us was the sending of the reward itself; no observation can refute that. Then we argue that this ambiguity will lead it to intervene in whatever protocol we set up to provide data for the agent about its goal. We discuss an analogous failure mode of approximate solutions to assistance games. Finally, we briefly review some recent approaches that may avoid this problem.