#3 AI, We Are Now Told, Knows When It Shouldn’t Be Trusted!

Gödel's Second Incompleteness Theorem says that, for any system that can reliably tell you that things are true or false, it cannot tell you that it itself is reliable.

Okay, so, in #4, we learned that Elon Musk’s utterly self-driving car won’t be on the road any time soon. What about the AI that knows when it shouldn’t be trusted? (As if anyone does!)

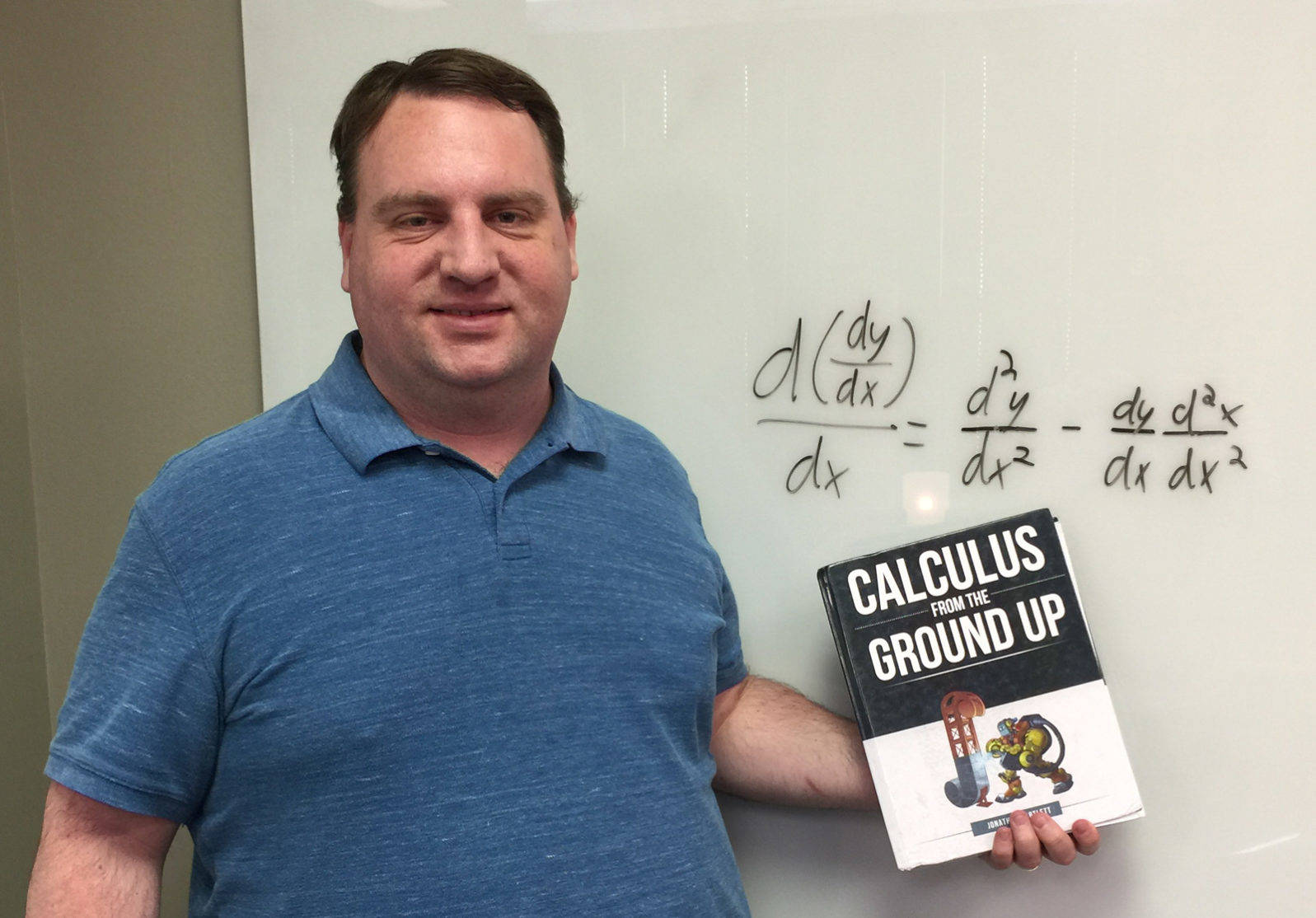

Our nerds here at the Walter Bradley Center have been discussing the top twelve AI hypes of the year. Our director Robert J. Marks, Eric Holloway and Jonathan Bartlett talk about overhyped AI ideas (from a year in which we saw major advances, along with inevitable hypes). From the AI Dirty Dozen 2020 Part III, here’s #3: AI that knows when it shouldn’t be trusted:

Our story begins at 12:57. Here’s a partial transcript. Show Notes and Additional Resources follow, along with a link to the complete transcript.

Robert J. Marks: Can AI really know when it shouldn’t be trusted? The title of the article from Science Alert is “Artificial Intelligence Is Now Smart Enough to Know When It Can’t Be Trusted.” Eric, what’s going on here?

Eric Holloway (pictured): Well, first of all, I’d like to note that the title does not say that AI can know when it should be trusted, so you could just have an AI that says “Never trust me.” It’s always going to be right.

Robert J. Marks: That goes back to fundamental detection theory, right? You have a hundred percent detection, but you have a very high percentage of false alarms, too.
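The detection-theory point can be made concrete with a small Python sketch. The detector, the sample cases, and the function names below are hypothetical, made up only for illustration: a detector that flags every output as untrustworthy catches every real error, but it also raises a false alarm on every correct output.

```python
def always_distrust(_prediction):
    """Hypothetical detector that flags every output as untrustworthy."""
    return True


def rates(detector, cases):
    """Return (detection rate, false alarm rate) for a detector.

    cases is a list of (prediction, is_actually_wrong) pairs.
    """
    wrong = [p for p, is_wrong in cases if is_wrong]
    right = [p for p, is_wrong in cases if not is_wrong]
    # Detection rate: share of wrong outputs that were flagged.
    detection = sum(1 for p in wrong if detector(p)) / len(wrong)
    # False alarm rate: share of correct outputs that were flagged anyway.
    false_alarm = sum(1 for p in right if detector(p)) / len(right)
    return detection, false_alarm


# Made-up sample: one wrong prediction, three correct ones.
cases = [("cat", False), ("dog", True), ("cat", False), ("bird", False)]
detection_rate, false_alarm_rate = rates(always_distrust, cases)
print(detection_rate, false_alarm_rate)
```

Both rates come out at 100 percent here, which is why a perfect detection rate says little on its own.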

Eric Holloway: Yeah. Now, as to what they actually did, they added some kind of confidence level to their results. If it’s really low confidence, then you know you can’t trust it. But, the converse does not apply. They can’t say that when it has high confidence that you can trust it. There’s a very solid, well-proven theorem called Gödel’s Second Incompleteness Theorem. And it says for any system that can reliably tell you that things are true or false, it cannot tell you that it itself is reliable. If they ever did create an AI system that can tell you, “Oh, you can trust what I say,” then at that point, you know you precisely cannot trust it …

Let’s say it does have some kind of confidence level and can say it’s less confident about some results than others, you still may not even want to trust that lack of confidence. There’s another theorem called Rice’s theorem, which says any non-trivial property of a program’s behavior is impossible for a program to decide in general. You can’t have a program that can always say, “Hey, my confidence level is reliable.” If they can precisely set it up in a constrained environment, then you can probably get some kind of confidence out of it, but they cannot do anything like the headline claims, which is an artificial intelligence that’s smart enough to know when it can’t be trusted. It’s way too general to be something you can actually do with computers.
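The flavor of the argument behind results like Rice’s theorem can be sketched in Python. This is a toy illustration of the diagonal trick, not a proof, and every name in it is hypothetical: given any candidate predictor of what a program will return, one can build a program that asks the predictor about itself and then does the opposite.

```python
def make_contrarian(predictor):
    """Build a program that does the opposite of what predictor says about it."""
    def contrarian():
        # Ask the predictor about this very program, then defy the answer.
        return not predictor(contrarian)
    return contrarian


# Three hypothetical predictors of "will this program return True?"
def always_yes(_program):
    return True


def always_no(_program):
    return False


def by_name(program):
    return program.__name__.startswith("c")


results = []
for predictor in (always_yes, always_no, by_name):
    program = make_contrarian(predictor)
    # Record whether the prediction matched the program's actual result.
    results.append(predictor(program) == program())

print(results)  # [False, False, False]
```

Each predictor is wrong about the program built against it, however it arrives at its answer. The general theorems cover far more than this toy case, but the self-reference at work is the same.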

Jonathan Bartlett (pictured): What’s even worse is if you read the first paragraph of that article. The first paragraph of it just goes to total science fiction land. It says, “How might the Terminator have played out if Skynet had decided it probably wasn’t responsible enough to hold the keys to the entire US nuclear arsenal? As it turns out, scientists may have just saved us from such a future AI-led apocalypse by creating neural networks that know when they’re untrustworthy.”

Robert J. Marks: Oh, good grief. Okay. Yeah. One of the big problems with the AI hype is the confusion of science fiction with science fact. And people need to be more cognizant of that.

Well, here’s the rest of the countdown to date. Read them and think:

4 Elon Musk: This time autopilot is going to WORK! Jonathan Bartlett: I have to say, part of me loves Elon Musk and part of me can’t stand the guy. Eric Holloway: Tesla is supposedly worth more than Apple now. Who said you can’t make money with science fiction?

5 AI hype: AI could go psychotic due to lack of sleep. Well, that’s what we can hear from Scientific American, if we believe all we read. It seems to be an effort to make AI seem more human than it really is.

6 in our Top 12 AI hypes: A conversation bot is cool, if you really lower your standards. A system that supposedly generates conversation, but have you noticed what it says? Bartlett: You could also ask “Who was President in 1600,” and it would give you an answer, not recognizing that the United States didn’t exist in 1600.

7 AI Can Create Great New Video Games All by Itself! In our 2020 “Dirty Dozen” AI myths: It’s actually just remixing previous games. Eric Holloway describes it as like a bad dream of Pac-Man. Well, see if it is fun.

8 in our AI Hype Countdown: AI is better than doctors! Sick of paying for health care insurance? Guess what? AI is better! Or maybe, wait… Only 2 of the 81 studies favoring AI used randomized trials. Non-randomized trials mean that researchers might choose data that make their algorithm work.

9: Erica the Robot stars in a film. But really, does she? This is just going to be a fancier Muppets movie, Eric Holloway predicts, with a bit more electronics. Often, making the robot sound like a real person is just an underpaid engineer in the back, running the algorithm a couple of times on new data sets. Also: Jonathan Bartlett wrote in to comment, “Erica, robot film star, is pretty typical modern-day puppeteering: fun, for sure, but not a big breakthrough.”

10: Big AI claims fail to work outside lab. A recent article in Scientific American makes clear that grand claims are often not followed up with great achievements. This problem in artificial intelligence research goes back to the 1950s and is based on refusal to grapple with built-in fundamental limits.

11: A lot of AI is as transparent as your fridge. A great deal of high tech today is owned by corporations. Lack of transparency means that people trained in computer science are often not in a position to evaluate what the technology is and isn’t doing.

12! AI is going to solve all our problems soon! While the AI industry is making real progress, so, inevitably, is hype. For example, machines that work in the lab often flunk real settings.