Information Is the Currency of Life. But What IS It?

How do we understand information in a universe that resists resolution into one single, simple system?

At first, “What is information?” seems like a question with a simple answer. Stuff we need to know. Then, if we think about it, it dissolves into paradoxes. A storage medium (a backup drive, maybe) that contains vital information weighs exactly the same as one that contains nothing, gibberish, or dangerously outdated information. There is no way to tell them apart without engaging intelligently with the content. That content is measured in bits and bytes, not kilograms and joules, which makes it hard to relate to the other quantities in our universe.

In this week’s podcast, “Robert J. Marks on information and AI, Part 1,” neurosurgeon Michael Egnor interviews Walter Bradley Center director and computer engineering professor Robert J. Marks about how we can understand information in a universe that resists being reduced to one single, simple system:

Here is a partial transcript. The Show Notes and a link to the transcript are below:

Michael Egnor: What is information?

Robert J. Marks: That’s a profound question. Before talking about information, you really have to define it… For example, if I burn a book to ashes and scatter the ashes around, have I destroyed information? Does it make a difference if there’s another copy of the book? If I take a picture, am I creating information? The answers depend on your definition of information.

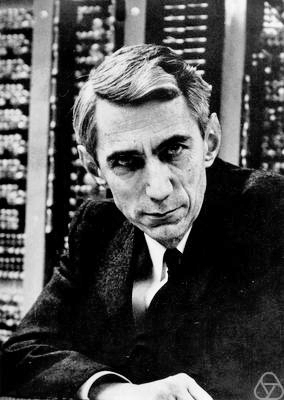

On the other hand, if I’m given a page of Japanese text and I don’t read Japanese, does it have less information for me than it does for somebody who reads Japanese? Claude Shannon (pictured) recognized this. He said, “It seems to me that we all define information as we choose. And depending on what field we are working in, we will choose different definitions. My own model of information theory was framed precisely to work with the problem of communication.”

Note: Pioneer information theorist Claude Shannon (1916–2001) first used the term “bit” (= binary digit) in print, crediting the word to John W. Tukey, in his seminal 1948 paper “A Mathematical Theory of Communication,” which gave information a precise mathematical measure. His approach laid the foundation for the communications networks we use today on our cell phones. Andrey Kolmogorov (1903–1987), a Soviet mathematician, developed the concept of Kolmogorov complexity, which measures an object’s information by its description length: the length of the shortest computer program that produces it. Highly structured objects have short descriptions; patternless ones do not.
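To make the note concrete, here is a minimal Python sketch of the two measures, built on simplifying assumptions. `shannon_entropy_bits` estimates Shannon information per symbol from the symbol frequencies of a single message (Shannon’s theory is really about the probabilities of a source, not one string), and `compressed_bits` uses zlib’s compressed size as a crude stand-in for Kolmogorov complexity, which cannot be computed exactly. The function names and the sample “pages” are hypothetical illustrations, not anything from the podcast.

```python
import math
import random
import zlib
from collections import Counter


def shannon_entropy_bits(message: str) -> float:
    """Average Shannon information per symbol, in bits.

    Simplifying assumption: each symbol is an independent draw whose
    probability is its frequency in the message, so
    H = sum(p * log2(1 / p)) over the distinct symbols.
    """
    if not message:
        return 0.0
    n = len(message)
    return sum((c / n) * math.log2(n / c) for c in Counter(message).values())


def compressed_bits(data: bytes) -> int:
    """Size of the zlib-compressed data, in bits.

    Kolmogorov complexity itself is uncomputable; a real compressor only
    bounds it from above (up to a constant), so this is a rough proxy.
    """
    return 8 * len(zlib.compress(data, 9))


# A fixed seed keeps the "random" page reproducible from run to run.
rng = random.Random(0)
random_page = "".join(rng.choice("abcdefghijklmnopqrstuvwxyz") for _ in range(2000))
ordered_page = "ab" * 1000  # same length, but a very short description

print(shannon_entropy_bits("0101"))  # 1.0: each symbol is like a fair coin flip
print(shannon_entropy_bits("aaaa"))  # 0.0: no uncertainty, no information
print(compressed_bits(random_page.encode()), compressed_bits(ordered_page.encode()))
# A second copy of the same page adds comparatively little compressed length.
print(compressed_bits((random_page * 2).encode()))
```

The random page and the “ab” page have the same length but very different compressed sizes, while the frequency-based entropy of `ordered_page` is still a full 1 bit per symbol, one way to see that the two measures answer different questions. The duplicated page also suggests one possible, compression-based answer to Marks’s question about a second copy of a book: the copy adds little new description length.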

Robert J. Marks: Then, there’s the physical definition. A great physicist named Rolf Landauer (1927–1999) said all information is physical.

Now, I would argue with that. I think that all information is physical only if you constrain yourself to the physics definition. And this is in total contrast to the founder of cybernetics [the study of control and communication in animals and machines], Norbert Wiener (pictured, 1894–1964). He is famous for saying: Information is information. It’s neither matter nor energy.

We can think of, for example, information written in a book, on the printed page. That’s information that’s etched on matter. But we also know that information can be etched on energy, in the form of the cell phone signals that you receive. And so, it’s neither matter nor energy, but both can be places where you can represent information. The three we’ve discussed so far are Shannon information, Kolmogorov information, and physical information. And then, the fourth…?

Michael Egnor: What about information as meaning?

Robert J. Marks: The three models of information that I have just shared with you don’t really measure meaning. The fourth model is specified complexity. The purpose of specified complexity, and specifically the mathematics of algorithmic specified complexity, is to measure the meaning in the bits of an object…

Note: Specified complexity: “A long sequence of random letters is complex without being specified [it is hard to duplicate, but it also doesn’t mean anything]. A short sequence of letters like ‘the,’ ‘so,’ or ‘a’ is specified without being complex [it means something, but what it means is not very significant by itself]. A Shakespearean sonnet is both complex and specified [it is hard to duplicate, and it means a lot in a few words].”
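The note’s three cases can be sketched numerically. In the formulation of algorithmic specified complexity published by Marks and his coauthors, ASC(x, C, P) = I(x) - K(x | C), where I(x) = -log2 P(x) is the surprisal of x under a chance hypothesis P, and K(x | C) is the conditional Kolmogorov complexity of x given a context C. The Python below is a minimal sketch that rests on assumptions made only for illustration: P is a uniform, independent model over 27 symbols (lowercase letters plus space), C is a small hypothetical English vocabulary, and K(x | C), which is uncomputable, is replaced by the size of a raw DEFLATE encoding of x with C preloaded as a dictionary. Names such as `asc_bits` are hypothetical.

```python
import math
import random
import zlib

# Hypothetical chance model: 27 symbols (a-z plus space), uniform and independent.
ALPHABET = "abcdefghijklmnopqrstuvwxyz "


def surprisal_bits(x: str) -> float:
    """I(x) = -log2 P(x) under the uniform chance model above."""
    if not set(x) <= set(ALPHABET):
        raise ValueError("x must use only lowercase letters and spaces")
    return len(x) * math.log2(len(ALPHABET))


def conditional_description_bits(x: str, context: str) -> int:
    """Crude upper-bound stand-in for K(x | C), in bits.

    Raw DEFLATE (no zlib header or checksum) with the context preloaded as a
    dictionary, so parts of x can be encoded as pointers back into C.
    """
    comp = zlib.compressobj(level=9, wbits=-15, zdict=context.encode())
    return 8 * len(comp.compress(x.encode()) + comp.flush())


def asc_bits(x: str, context: str) -> float:
    """Algorithmic specified complexity estimate: I(x) - K(x | C)."""
    return surprisal_bits(x) - conditional_description_bits(x, context)


# Hypothetical context C: a small English vocabulary the observer already knows.
context = ("a and art be compare day i in is it lovely more of shall so "
           "summers temperate the thee thou to ")
# Opening of Shakespeare's Sonnet 18, lowercased and stripped of punctuation.
sonnet = "shall i compare thee to a summers day thou art more lovely and more temperate"
rng = random.Random(1)
gibberish = "".join(rng.choice(ALPHABET) for _ in range(len(sonnet)))

for label, x in [("random", gibberish), ("short word", "the"), ("sonnet", sonnet)]:
    print(f"{label:10}  I(x) = {surprisal_bits(x):6.1f}  ASC = {asc_bits(x, context):7.1f} bits")
```

Because compressed length only bounds K(x | C) from above, these estimates tend to understate ASC. The sketch is meant to show the direction of the comparison, not to reproduce any published measurement: random letters score low, “the” cannot score above its roughly 14 bits of surprisal, and the sonnet line scores highest given an English vocabulary as context.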

Michael Egnor: How does biological information differ from information in non-living things?

Robert J. Marks: We can talk about creativity. Creativity is the creation of information. And that is outside of naturalistic or information processes.

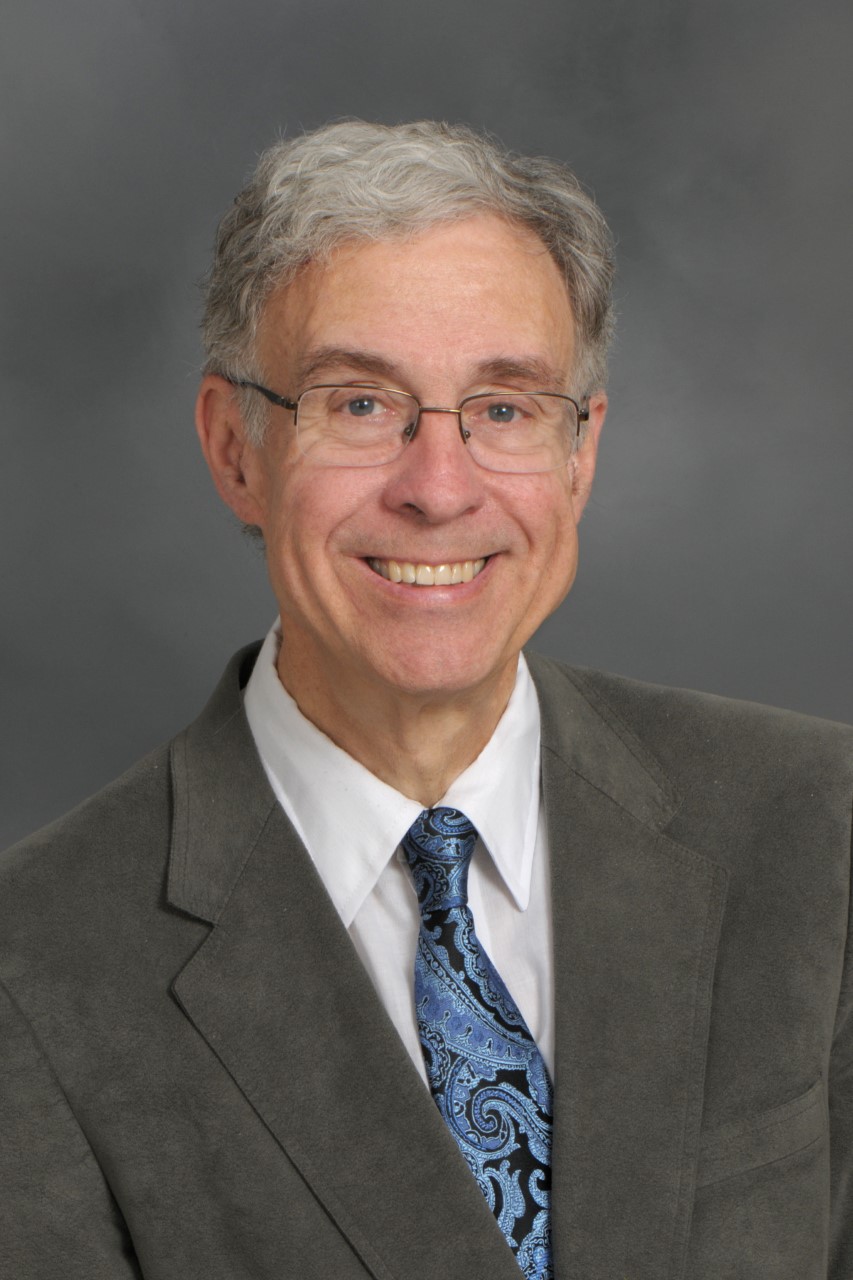

Michael Egnor (pictured): Thomas Aquinas, following Aristotle, defined living things as things that strive for their own perfection. That was what distinguished living things from non-living things. A rock doesn’t wake up in the morning and try to be a better rock. Whereas living things, to greater or lesser degrees of success, try to make themselves better at what they do. They eat, they rest, they interact with nature. They do things to make themselves even better examples of what they are. And it would seem to me that this might relate to the difference between information in non-living and in living things. The information in living things is directed to ends; it’s directed to purposes that you don’t see in non-living things in the same way.

Robert J. Marks: In other words, the entity has to have creativity. This is one of the things that we argue a lot about in artificial intelligence: Will artificial intelligence ever be creative? I maintain (it’s almost like a mantra for me now) that artificial intelligence will never be creative. It’ll never understand. And, currently, it has no common sense. So these are attributes that are beyond the capability of artificial intelligence but that can certainly be applied to the human mind.

Michael Egnor: Sure. I mean, I’ve always thought of artificial intelligence as just a representation of human intelligence. And, in a sense, the term “artificial intelligence” is an oxymoron. If it’s artificial, it’s not intelligence.

Robert J. Marks: What you mention is exactly the test that Selmer Bringsjord, a professor at Rensselaer Polytechnic Institute, used to determine whether artificial intelligence could be creative. His test was: Does the computer program do something that is outside the explanation or the intent of the programmer? And there has been, thus far, no artificial intelligence that has done this.

Note: The test that computer science professor Selmer Bringsjord pioneered is the Lovelace test, named after computer pioneer Ada Lovelace (1815–1852). Unlike the iconic Turing test, it doesn’t rely on persuading observers that the computer can really think. Instead, it requires a genuine demonstration of creativity, a bar no program has yet met. For more from Prof. Bringsjord, see “Can human minds be reduced to computer programs?”

You may also enjoy these articles by Eric Holloway on information:

- They say this is an information economy. So what is information? How, exactly, is an article in the news different from a random string of letters and punctuation marks?

- How can we measure meaningful information? Neither randomness nor order alone creates meaning. So how can we identify communications?

Show Notes

- 00:27 | Introducing Dr. Robert Marks

- 01:14 | What is information?

- 06:44 | Exact representations of data

- 08:29 | A system with minimal information

- 09:27 | Information in nature

- 10:43 | Comparing biological information and information in non-living things

- 11:29 | Creation of information

- 12:50 | Will artificial intelligence ever be creative?

- 17:38 | Correlation vs. causation

Additional Resources

- Robert J. Marks at Discovery.org

- Claude Shannon at Encyclopædia Britannica

- Andrey Kolmogorov at Wikipedia

- Spurious Correlations website