Lovelace: The Programmer Who Spooked Alan Turing

Ada Lovelace understood her mentor Charles Babbage’s plans for his new Analytical Engine and was better than he at explaining what it could do.

When iconic computer genius Alan Turing published a paper on thinking computers, why did he feel the need to refute Ada Lovelace (1815–1852), a largely forgotten 19th-century computer pioneer? Who was she? Why did she matter? Why did Selmer Bringsjord name his Lovelace Test for computer intelligence, a rival to the Turing Test, after her?

Ada Lovelace was the daughter of Romantic poet George Gordon, Lord Byron (1788–1824). Her parents separated shortly after she was born, and she never saw her father again. Her mother, Anne, was gifted mathematically. Indeed, Byron had called his wife the “Princess of Parallelograms” (and later, when they fell out, his “Mathematical Medea”).

Ada’s own gifts were encouraged in an almost ideal way. For example, one of her instructors was Mary Somerville, one of the first two women to be permitted to join the Royal Astronomical Society (along with astronomer Caroline Herschel).

Through Somerville, Ada Byron met mathematician and computer pioneer Charles Babbage (1791–1871) at a party in 1833, when she was about 17. Babbage, Lucasian professor of mathematics at Cambridge (a position earlier held by Isaac Newton and later by Stephen Hawking), also became a mentor. He encouraged his “Enchantress of Numbers” to pursue higher mathematics. At the time, he himself was working on the Difference Engine, a calculating machine whose significance Ada Byron immediately grasped. (She is pictured above left in a daguerreotype by Antoine Claudet, 1843 or 1850, courtesy The RedBurn, CC BY-SA 4.0.)

Babbage went on to develop plans for the Analytical Engine, designed to handle more complex calculations. He asked Ada to translate into English an article about the new engine, published in French in 1842 by Italian engineer Luigi Federico Menabrea. She did so but added her own thoughts about the machine, producing notes that were three times as long as Menabrea’s article. The paper was published in a British science journal in 1843, with only her initials, “A.A.L.” (for “Augusta Ada Lovelace”), attesting to her authorship.

Ada Lovelace? In 1835 she married William King, who later became Earl of Lovelace. She then assumed the title Countess of Lovelace. Her aristocratic position allowed her to continue to socialize with 19th-century scientists and intellectuals like Michael Faraday and Charles Dickens.

In her notes to Menabrea’s article, Lovelace pondered ways to make Babbage’s Analytical Engine a more useful device. She ventured beyond mere calculation:

She speculated that the Engine “might act upon other things besides number… the Engine might compose elaborate and scientific pieces of music of any degree of complexity or extent.” The idea of a machine that could manipulate symbols in accordance with rules and that number could represent entities other than quantity mark the fundamental transition from calculation to computation. Ada was the first to explicitly articulate this notion and in this she appears to have seen further than Babbage. She has been referred to as “prophet of the computer age.” Certainly she was the first to express the potential for computers outside mathematics.

“Ada Lovelace” at Computer History Museum

Lovelace even started to envision a means by which the engine could repeat a series of instructions (looping, still used today).
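To make the idea of repeating a series of instructions concrete, here is a minimal modern sketch in Python. It computes Bernoulli numbers, the calculation Lovelace worked through in her Note G. The function name and the particular recurrence are illustrative assumptions chosen for brevity; this is not a transcription of her table of operations for the Engine.

```python
from fractions import Fraction
from math import comb


def bernoulli_numbers(n_max):
    """Return [B_0, ..., B_n_max] as exact fractions.

    Uses the recurrence sum_{k=0}^{m} C(m+1, k) * B_k = 0 for m >= 1,
    with B_0 = 1 (the convention in which B_1 = -1/2).
    """
    b = [Fraction(1)]
    # Outer loop: one pass per new Bernoulli number.
    for m in range(1, n_max + 1):
        acc = Fraction(0)
        # Inner loop: the same multiply-and-add step, repeated
        # over every value computed so far.
        for k in range(m):
            acc += comb(m + 1, k) * b[k]
        b.append(-acc / (m + 1))
    return b
```

The two nested `for` loops are the point: the same few operations are reused on each pass rather than being written out again for every number.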

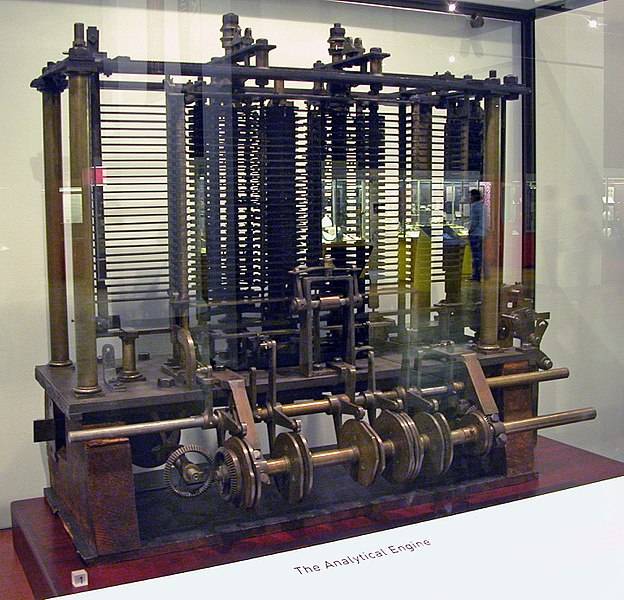

But, sadly for her and for Charles Babbage, the 19th century was not to be the age of computers. Babbage’s Analytical Engine, though “the first machine that deserved to be called a computer,” was never finished. Lovelace herself died of cancer in 1852 and was buried alongside the father she never knew. Her work was largely forgotten for a century. (Part of the Analytical Engine, shown above, at the London Science Museum, courtesy Bruno Babai (ByB), CC BY-SA 2.5.)

In 1953, B. V. Bowden republished her notes in Faster Than Thought: A Symposium on Digital Computing Machines. At a time when computer programming was slowly becoming an occupation, she began to be recognized as a programming pioneer and visionary:

Ada called herself “an Analyst (& Metaphysician),” and the combination was put to use in the Notes. She understood the plans for the device as well as Babbage but was better at articulating its promise. She rightly saw it as what we would call a general-purpose computer. It was suited for “developping [sic] and tabulating any function whatever… the engine [is] the material expression of any indefinite function of any degree of generality and complexity.” Her Notes anticipate future developments, including computer-generated music.

Merry Maisel and Laura Smart, “Women in Science” at San Diego SuperComputer Center

So then what was it that so concerned Alan Turing?

In 1950, he published a paper on a subject of longstanding interest to him, “Computing Machinery and Intelligence.” It began with a bold premise: “I propose to consider the question, ‘Can machines think?’”

Turing thought that computers could indeed be made to think. Thus he addressed the objection offered by Lovelace a century earlier (her work was then becoming better known, though we are not certain how Turing knew about it). In her notes to Menabrea’s paper, she had written:

It is desirable to guard against the possibility of exaggerated ideas that might arise as to the powers of the Analytical Engine. The Analytical Engine has no pretensions whatever to originate anything. It can do whatever we know how to order it to perform. It can follow analysis, but it has no power of anticipating any analytical relations or truths.

Lisa Jardine, “A Point of View: Will machines ever be able to think?” at BBC News (October 18, 2013)

Lovelace, in other words, had high hopes for the machine, but thinking creatively wasn’t one of them. Turing disputed her view:

Her objection, he proposes, is tantamount to saying that “computers can never take us by surprise”. Indeed they can, he counters. He believes Lovelace was misled into thinking the contrary because she could have had no idea of the enormous speed and storage capacity of modern computers, making them a match for that of the human brain, and thus, like the brain, capable of processing their stored information to arrive at sometimes “surprising” conclusions.

Lisa Jardine, “A Point of View: Will machines ever be able to think?” at BBC News (October 18, 2013)

Did Turing succeed in refuting Lovelace? Not so far as we can see. Independently thinking computers are still an excellent plot device in science fiction.

In any event, the two positions have led to two different tests of computer intelligence. The test that Turing offered “proposed that a computer can be said to possess artificial intelligence if it can mimic human responses under specific conditions… The test is repeated many times. If the questioner makes the correct determination in half of the test runs or less, the computer is considered to have artificial intelligence because the questioner regards it as ‘just as human’ as the human respondent.”
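The pass criterion in that description comes down to a simple count. A minimal sketch, assuming each test run is recorded as `True` when the questioner correctly identifies the machine; the function name and the data layout are hypothetical, chosen only to illustrate the rule as quoted.

```python
def passes_imitation_test(correct_identifications):
    """Return True if the questioner was right in half of the runs or fewer.

    `correct_identifications` is a list of booleans, one per test run
    (an assumed representation, not part of the quoted description).
    """
    runs = len(correct_identifications)
    if runs == 0:
        raise ValueError("at least one test run is required")
    correct = sum(1 for outcome in correct_identifications if outcome)
    # "Half of the test runs or less", compared without fractions.
    return 2 * correct <= runs
```

For example, a questioner who is right in two runs out of four does no better than chance, so the machine passes under this rule; right in two out of three, and it does not.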

Bringsjord has criticized this iconic test: “Turing’s claim that “computers do take us by surprise” is only true when “surprise” is given a very superficial interpretation. For, while it is true that computers do things that we don’t intend them to do—because we’re not smart enough, or because we’re not careful enough, or because there are rare hardware errors, or whatever—it isn’t true that there are any cases in which we should want to say that a computer has originated something.”

As Bringsjord explained in a recent Mind Matters podcast, his proposed alternative, the Lovelace Test, requires that a computer show creativity that is clearly independent of its programming: “Ada Lovelace had a deep, accurate understanding of what computation is. At least, mechanical, standard computation. I care about what she thought was a big, missing, and perhaps eternally missing, piece in a computing machine, that it could not be creative.” The Lovelace Test has never been met.

Silicon Valley visionary Ray Kurzweil told the COSM symposium last fall that we will merge with computers by 2045. Yet it’s not clear what that would even mean, let alone how to proceed.

Perhaps Lovelace’s heritage can offer us a cautionary tale. A voter data program named after her was developed to help Hillary Clinton win the US 2016 election. But, as Pomona College statistics prof Gary Smith, author of The AI Delusion, explains, the developers overlooked a critical fact that may have played a role in Clinton’s defeat:

Some of the stuff that made a difference, they couldn’t put in a computer. Like the enthusiasm factor. When Bernie Sanders and Donald Trump gave speeches, tens of thousands of people showed up and yelled and screamed and were excited. And when Hillary Clinton gave a speech, a couple of hundred people would show up and sit politely. And you couldn’t put that in a computer. The computer algorithm had no idea she was in trouble… they also couldn’t enter into the computer the underlying concern about job erosion and loss which can’t really be quantified. Some people say, if you can’t measure it, it doesn’t count but sometimes the things that count can’t be measured.

News, “The US 2016 election: Why big data failed” at Mind Matters News

Or, as Lovelace wrote in her notes, “The Analytical Engine has no pretensions whatever to originate anything. It can do whatever we know how to order it to perform.”
