Thinking Machines? The Lovelace Test Raises the Stakes

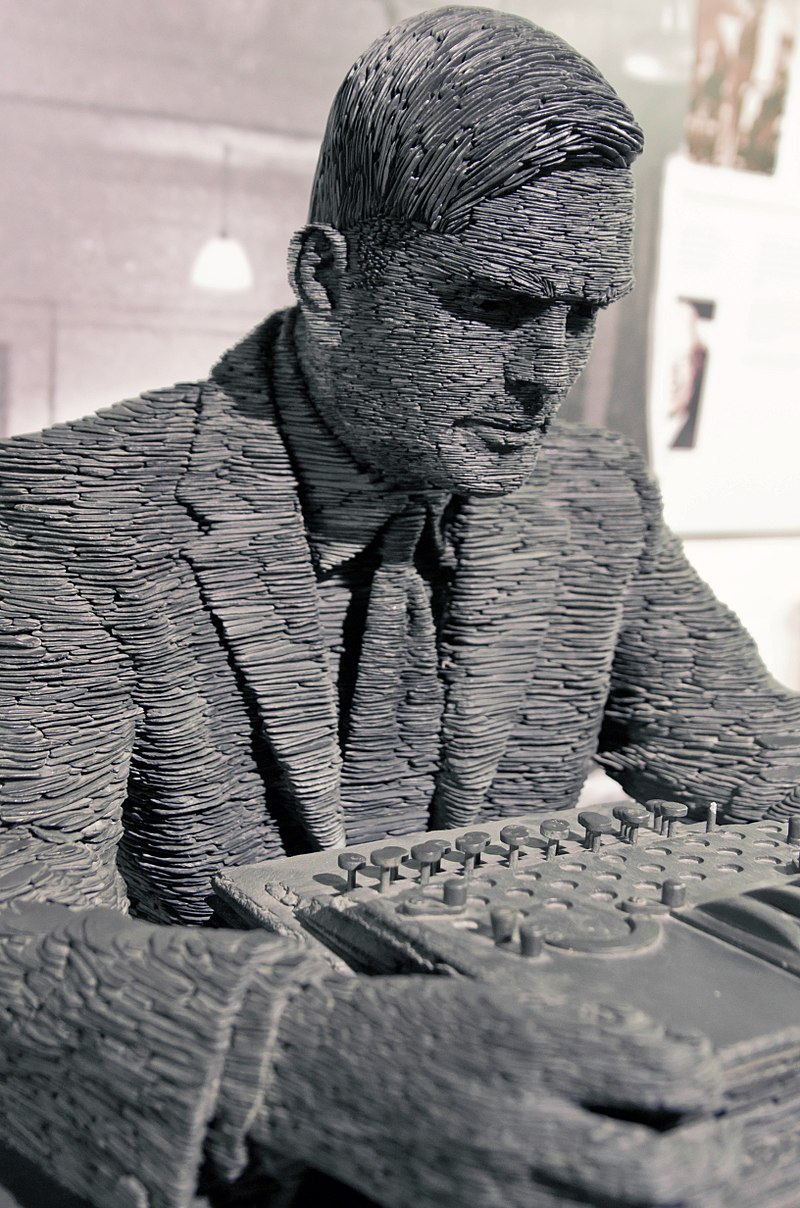

The Turing test has had a free ride in science media for far too long, says an AI expert

In a recent podcast, “The Turing Test is Dead. Long Live The Lovelace Test,” Walter Bradley Center director Robert J. Marks and philosopher and computer scientist Selmer Bringsjord, director of the Rensselaer AI Reasoning Lab, discuss tests for determining whether a computer can think like a human. The best known is the Turing test, proposed by computer pioneer Alan Turing (1912–1954) in 1950, which asks whether human judges can distinguish a machine's behavior from that of a human. Many think that Turing’s test doesn’t really work that well, especially when creativity is considered. Is the Lovelace test, originally conceived by Bringsjord, a better alternative?

A partial transcript follows.

01:43 | What is the Turing test?

Robert J. Marks: I think that, in order to talk about the Lovelace test, we need to go back to the Turing test. Can you elaborate on that and kind of build up to what your Lovelace test is?

Selmer Bringsjord: Most of your listeners will be familiar with it but just in case:

- Lock a computer or a robot AI, or what have you, in one room, seal it off.

- Lock a human being in another, seal it off.

- Get a judge who doesn’t know which entity is in which room and allow the judge to communicate linguistically… e-mail would be ideal; send e-mails back and forth. Ask whatever questions you would like to try to find out which room houses which of these two entities.

Turing said that if you strike out as judge, then we have reached the point in time when we have a thinking machine. There are contaminants out there for monkeying with and diluting Turing’s original rules for the game. He said this had to happen for an indeterminate or long period of time. Clearly, by context, you can’t lay down an artificially limited period of time. If it’s two seconds, it’s meaningless. Thirty seconds, probably, a machine, if it is indistinguishable [from a human] is going to have a chance. And no prohibitions on the nature of the judge in terms of their background, education, expertise. Perverted versions of this test restrict the judges to being laypeople, not being experts.

So that’s basically what the Turing test is. As I think you pointed out, he announced and defended this test in a 1950 paper and he considered a series of objections. And one of those objections was the Lovelace objection.
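The three steps Bringsjord lists can be sketched as a small simulation. This is only an illustration under stated assumptions, not Turing's own formulation: the function names (`run_imitation_game`, `canned_machine`, `toy_human`, `repetition_judge`) and the two sample questions are hypothetical, and a real test would involve open-ended questioning over a long period by an unrestricted judge.

```python
import random
from typing import Callable, Dict, List, Tuple

Respondent = Callable[[str], str]
Transcript = Dict[str, List[Tuple[str, str]]]


def run_imitation_game(
    machine: Respondent,
    human: Respondent,
    judge: Callable[[Transcript], str],
    questions: List[str],
    rng: random.Random,
) -> bool:
    """Return True if the judge correctly names the room housing the machine."""
    # Seal each respondent in a room; the judge sees only the labels "A" and "B".
    respondents = [("machine", machine), ("human", human)]
    rng.shuffle(respondents)
    rooms = dict(zip(["A", "B"], respondents))

    # Text-only exchange: every question goes to both rooms.
    transcript = {
        label: [(q, respond(q)) for q in questions]
        for label, (_, respond) in rooms.items()
    }

    guess = judge(transcript)
    machine_room = next(
        label for label, (kind, _) in rooms.items() if kind == "machine"
    )
    return guess == machine_room


# Hypothetical stand-ins, chosen only to make the sketch runnable.
def canned_machine(question: str) -> str:
    return "That is an interesting question."


def toy_human(question: str) -> str:
    return f"Hmm, about '{question}': let me think."


def repetition_judge(transcript: Transcript) -> str:
    # Guess that the room giving the fewest distinct answers houses the machine.
    return min(transcript, key=lambda room: len({a for _, a in transcript[room]}))


questions = ["What is a sonnet?", "What did you have for breakfast?"]
caught = run_imitation_game(
    canned_machine, toy_human, repetition_judge, questions, random.Random(0)
)
print(caught)  # True: this judge spots the repeated answer whichever room it is in
```

In Bringsjord's terms, the machine passes only if the judge "strikes out," that is, if a function like `run_imitation_game` returns True no more often than chance over a long, unrestricted interrogation.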

The Lovelace objection: One of the most popular objections to the claim that there can be thinking machines is suggested by a remark made by Lady Lovelace in her memoir on Babbage’s Analytical Engine: “The Analytical Engine has no pretensions to originate anything. It can do whatever we know how to order it to perform” (cited by Hartree, p.70). The key idea is that machines can only do what we know how to order them to do (or that machines can never do anything really new, or anything that would take us by surprise). As Turing says, one way to respond to these challenges is to ask whether we can ever do anything “really new.” Suppose, for instance, that the world is deterministic, so that everything that we do is fully determined by the laws of nature and the boundary conditions of the universe…

Bringsjord et al. (2001) claim that Turing’s response to the Lovelace Objection is “mysterious” at best, and “incompetent” at worst (p.4). In their view, Turing’s claim that “computers do take us by surprise” is only true when “surprise” is given a very superficial interpretation. For, while it is true that computers do things that we don’t intend them to do—because we’re not smart enough, or because we’re not careful enough, or because there are rare hardware errors, or whatever—it isn’t true that there are any cases in which we should want to say that a computer has originated something.

“The Turing Test,” Stanford Encyclopedia of Philosophy

Note: The “Lady Lovelace” who first raised this objection was Ada Lovelace (1815–1852), an early computer pioneer.

Robert J. Marks: Turing was apparently aware of this and he addressed this objection?

Selmer Bringsjord: He did address it in his 1950 paper—well, charitably put, he addressed it. I don’t think that he really dealt with the objection. That may be true of so many other objections as well.

07:40 | The consciousness objection

Selmer Bringsjord: I think the consciousness objection basically says, look, gimme a break, for the machine to be basically indistinguishable [from a human] in a teletype interaction, it doesn’t have to be conscious. Consciousness is kind of a big deal for us humans; it seems to be compared separately from intelligence. I think consciousness is part of the equation, at least when you are talking about deep, robust creativity as well.

Robert J. Marks: I’m going to quote to you from your Lovelace paper about 15 years ago or so: “The progress towards Turing’s dream that’s made is coming only on the strength of clever but shallow trickery. For example, the human creators of artificial agents that compete in present-day versions of the Turing test know all too well that they have merely tried to fool those people who interact with their agents into believing that these agents really have minds.” I think that shows the inadequacy of the Turing test, succinctly put.

08:57 | Eugene Goostman

Robert J. Marks: Are you familiar with “Eugene Goostman” at all? He was supposedly an AI agent that passed the Turing test a few years ago.

Selmer Bringsjord: I’m not intimately familiar with it but I certainly followed it from a media point of view.

Robert J. Marks: Your quote comes to mind because “Eugene Goostman” was supposedly a teenager from Ukraine. So if you talked to him, and he didn’t understand what you were saying, well, come on, the kid’s Ukrainian, he doesn’t understand English, and if he gives a stupid answer, hey, the kid’s only a teenager.

Selmer Bringsjord: Exactly.

Note: Quantum computing researcher Scott Aaronson showed, amusingly, just how unconvincing this chatbot was in a short conversation. (“Scott: Do you think your ability to fool unsophisticated judges indicates a flaw with the Turing Test itself, or merely with the way people have interpreted the test?” “Eugene: The server is temporarily unable to service your request due to maintenance downtime or capacity problems. Please try again later.”)

09:48 | The Lovelace test

Robert J. Marks: So let’s get on to the Lovelace test, a more robust test for intelligence for computers. How do we test whether an artificial intelligence is creative or not? That’s in your Lovelace test. Give a little description of that if you would.

Selmer Bringsjord: The idea is really quite simple. It’s to take Lovelace at her word. So if — it would plausibly be a group of people who have built this amazing system — that’s supposed to be human level conversationally. And if it’s conversational, it could write a sonnet, which was an ability Turing considered. It should be able to do things that we would regard as creative. Now, if it’s already got a sonnet typed in by the programmer, and when an appropriate context is detected in a conversation, along with input, it spits back the sonnet, that’s not going to cut it. Most importantly, and this gets at the nature of the test, the developers of the system would know, on seeing the sonnet emerge, oh great, our guinea pig over here wasn’t exactly sure why the context was there, we know what’s going on. But in a case where the developers literally have absolutely no idea how sustained production through time of artifacts that irresistibly imply, in our minds, that this is a highly creative agent, that is what passing the test amounts to.

(Welsh slate statue of Alan Turing at Bletchley Park/Antoine Taveneau, CC BY-SA 3.0)

Now this does assume that you can’t take a developer who has been on the fringes of the team so it’s really an ideal observer view. And if I had to do this over again and had to update it (and I do think it needs to be updated in light of Machine Learning/Deep Learning), I would appeal to an ideal observer more rigorously than I did. There should be some formal or mathematical way to say, Look, every iota of what’s going on here might not be assimilated by any one developer or even the team of developers that built this remarkable AI.

But hypothetically, let’s say that the ideal observer has total command of everything that’s gone into this system and sits back and lets it start up its system and work and generate these artifacts that are going to, by hypothesis, imply, at least in the observer, that there is something remarkably creative going on. If that ideal observer is in the dark, now we know that we are dealing with something truly astonishing.

It is, of course, analogous to what we have in every computational neuroscience lab on the face of our planet. No matter what they might say in public, if you get any of those folks in a room and ask, how exactly did John Updike come up with that page there, you know, in The Witches of Eastwick? We’re a little bit confused about it. Can you please explain that? They have literally no idea, zero, how that passage comes out of the brain.

The test says, when we reach that point, we better start paying attention to these machines, at least if we want to measure them against the human mind — which is exactly what Turing was all about in his 1950 paper.
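The canned-sonnet case Bringsjord rules out can be made concrete with a toy sketch. Everything here is an assumption for illustration: `CANNED_SONNETS`, `canned_poet`, and `observer_can_explain` are hypothetical names, the stored line is a placeholder, and a verbatim lookup stands in very crudely for an ideal observer's ability to account for an output. It is not Bringsjord's formal criterion.

```python
from typing import Dict, Optional

# Placeholder material typed in by the (hypothetical) programmer.
CANNED_SONNETS: Dict[str, str] = {
    "love": "Shall I compare thee to a summer's day?",
}


def canned_poet(conversation: str) -> Optional[str]:
    """Spit back a stored sonnet when a trigger word appears in the conversation."""
    lowered = conversation.lower()
    for keyword, sonnet in CANNED_SONNETS.items():
        if keyword in lowered:
            return sonnet
    return None


def observer_can_explain(artifact: str, stored_material: Dict[str, str]) -> bool:
    """Crude proxy: the observer can explain any artifact that was stored verbatim."""
    return artifact in stored_material.values()


artifact = canned_poet("Tell me something about love.")
if artifact is not None:
    # The developers know exactly where this came from, so the system
    # fails the Lovelace test for this artifact.
    print(observer_can_explain(artifact, CANNED_SONNETS))
```

The interesting case, on Bringsjord's account, is the opposite one: sustained output that an observer with total command of the system still cannot account for. A lookup like this cannot capture that case; it only shows why a stored sonnet "is not going to cut it."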

Next: Has the Lovelace test for human-type intelligence been passed by any computer?

Further reading: No materialist theory of consciousness is plausible. All such theories either deny the very thing they are trying to explain, result in absurd scenarios, or end up requiring an immaterial intervention (Eric Holloway)

Current artificial intelligence research is unscientific. The assumption that the human mind can be reduced to a computer program has never really been tested. (Eric Holloway)

and

How you can really know that you’re talking to a computer. In a lively exchange, computer science experts offer some savvy advice.