Artificial intelligence is impossible

Meaningful information vs artificial intelligence

What is meaningful information, and how does it relate to the artificial intelligence question?

First, let’s start with Claude Shannon’s definition of information. Shannon (1916–2001), a mathematician and computer scientist, stated that an event’s information content is the negative logarithm* of its probability.

So, if I flip a fair coin, I generate 1 bit of information, according to his theory: each outcome has probability 1/2, and the negative base-2 logarithm of 1/2 is 1. The coin came down heads or tails. That’s all the information it provides.
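To make the arithmetic concrete, here is a minimal Python sketch of Shannon's measure. The function name `self_information` and the figure of 100 coin flips are illustrative assumptions, not something taken from Shannon's paper.

```python
import math

def self_information(p: float) -> float:
    """Shannon information content, in bits, of an event with probability p."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in the interval (0, 1]")
    return -math.log2(p)

# One flip of a fair coin: each outcome has probability 1/2.
fair_coin_bits = self_information(0.5)

# Hypothetical lecture: 100 independent fair flips (the count is an assumption).
# Information from independent events adds up.
lecture_bits = 100 * fair_coin_bits

print(fair_coin_bits, lecture_bits)
```

The total grows with every flip, even though the listener learns nothing of use from any of them.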

However, Shannon’s definition of information does not capture our intuition of information. Suppose I paid money to learn a lot of information at a lecture and the lecturer spent the whole session flipping a coin and calling out the result. I’d consider the event uninformative and ask for my money back.

But what if the lecturer insisted that he has produced an extremely large amount of Shannon information for my money, and thus met the requirement of providing a lot of information? I would not be convinced. Would you?

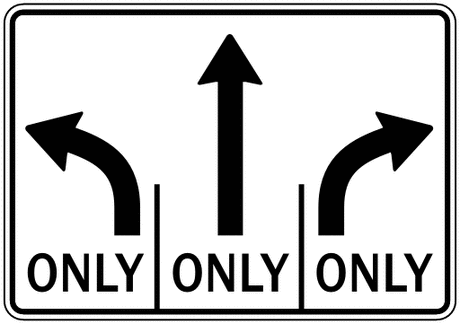

A quantity that better matches our intuitive notion of information is mutual information. Mutual information measures how much event A reduces our uncertainty about event B. We can see mutual information in action if we picture a sign at a fork in the road.

Before event A (reading the sign), we are unsure which branch of the fork will take us home. That is to say, we are uncertain about event B, the outcome of choosing one of the branches.

Once event A occurs (we read the sign), we are certain about event B (the outcome of choosing one of the branches) and we can find our way home.
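The fork-in-the-road example can be worked through numerically. The sketch below assumes that, before reading anything, home is equally likely to lie down either branch, and that the sign always points the right way. Both are assumptions added for illustration. It compares the sign with a coin flipped at the fork.

```python
import math

def mutual_information(joint):
    """Mutual information I(A;B) in bits, from a dict {(a, b): probability}."""
    pa, pb = {}, {}
    for (a, b), p in joint.items():
        pa[a] = pa.get(a, 0.0) + p   # marginal distribution of A
        pb[b] = pb.get(b, 0.0) + p   # marginal distribution of B
    return sum(
        p * math.log2(p / (pa[a] * pb[b]))
        for (a, b), p in joint.items()
        if p > 0
    )

# A = what we read, B = the branch that actually leads home.
# Assumed: each branch is correct with probability 1/2, and the sign is always right.
sign = {("left", "left"): 0.5, ("right", "right"): 0.5}

# A coin flipped at the fork is independent of the correct branch.
coin = {(c, b): 0.25 for c in ("heads", "tails") for b in ("left", "right")}

print(mutual_information(sign))  # 1 bit: the sign removes all uncertainty
print(mutual_information(coin))  # 0 bits: the coin tells us nothing about the road
```

Under these assumptions the sign and the coin each carry 1 bit of Shannon information, but only the sign shares any of it with the question we care about.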

An important property of mutual information is that it is conserved. Leonid Levin’s law of independence conservation states that no combination of random and deterministic processing can increase mutual information. A series of coin flips, for example, could never tell you which branch of the fork leads home.
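Levin's law is stated for algorithmic information, which cannot be computed directly. As a rough illustration only, the sketch below uses the Shannon-information counterpart, the data processing inequality, and is not Levin's theorem itself. It passes the sign's message through three hypothetical processing steps (a relabelling, an erasure, and a noisy copy with an assumed 10% error rate) and checks that none of them raises the mutual information with the correct branch.

```python
import math

def mutual_information(joint):
    """Mutual information I(A;B) in bits, from a dict {(a, b): probability}."""
    pa, pb = {}, {}
    for (a, b), p in joint.items():
        pa[a] = pa.get(a, 0.0) + p
        pb[b] = pb.get(b, 0.0) + p
    return sum(
        p * math.log2(p / (pa[a] * pb[b]))
        for (a, b), p in joint.items()
        if p > 0
    )

def process(joint, channel):
    """Pass A through a channel {a: {a_new: probability}}; B is left untouched."""
    out = {}
    for (a, b), p in joint.items():
        for a_new, q in channel[a].items():
            out[(a_new, b)] = out.get((a_new, b), 0.0) + p * q
    return out

# Assumed setup: a perfectly reliable sign, each branch equally likely to be correct.
sign = {("left", "left"): 0.5, ("right", "right"): 0.5}

channels = {
    # Deterministic and reversible: rename the symbols.
    "relabel": {"left": {"L": 1.0}, "right": {"R": 1.0}},
    # Deterministic and lossy: paint over the sign.
    "erase": {"left": {"?": 1.0}, "right": {"?": 1.0}},
    # Random: copy the sign with a 10% chance of error (assumed rate).
    "noisy": {"left": {"left": 0.9, "right": 0.1},
              "right": {"right": 0.9, "left": 0.1}},
}

before = mutual_information(sign)
after = {name: mutual_information(process(sign, ch)) for name, ch in channels.items()}

for name, bits in after.items():
    print(f"{name}: {bits:.3f} bits (before processing: {before:.3f})")
```

In this toy setting, processing either preserves the sign's mutual information or reduces it.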

This raises the question: What can create mutual information?

A defining aspect of the human mind is its ability to create mutual information. For example, the traffic sign designer in the example above created mutual information. You understood what the sign was meant to convey.

This brings us to the debate regarding artificial intelligence. Can artificial intelligence reproduce human intelligence?

The answer is no.

All forms of artificial intelligence can be reduced to a Turing machine, that is, a system of states and transition rules that computes a result step by step. All Turing machines, even those given a source of random input, operate entirely according to randomness and determinism.

Because the law of independence conservation states that no combination of randomness and determinism can create mutual information, no Turing machine, and therefore no artificial intelligence, can create mutual information. Thus, the goal of artificial intelligence researchers to reproduce human intelligence with a computer program is impossible to achieve.

Note: * A logarithm tells us how many copies of a base number we must multiply together to get another number. To get 8, we multiply three 2s together (2 × 2 × 2), so we say that the base-2 logarithm of 8 is 3. We can write it like this: log2(8) = 3

See also: Could one single machine invent everything (Eric Holloway)

Eric Holloway

Eric Holloway has a Ph.D. in Electrical & Computer Engineering from Baylor University. He is currently a Captain in the United States Air Force, where he has served in the US and Afghanistan. He is the co-editor of the book Naturalism and Its Alternatives in Scientific Methodologies. Dr. Holloway is an Associate Fellow of the Walter Bradley Center for Natural and Artificial Intelligence.