AI Is Impacting Our Culture — But Not As We Were Told To Expect

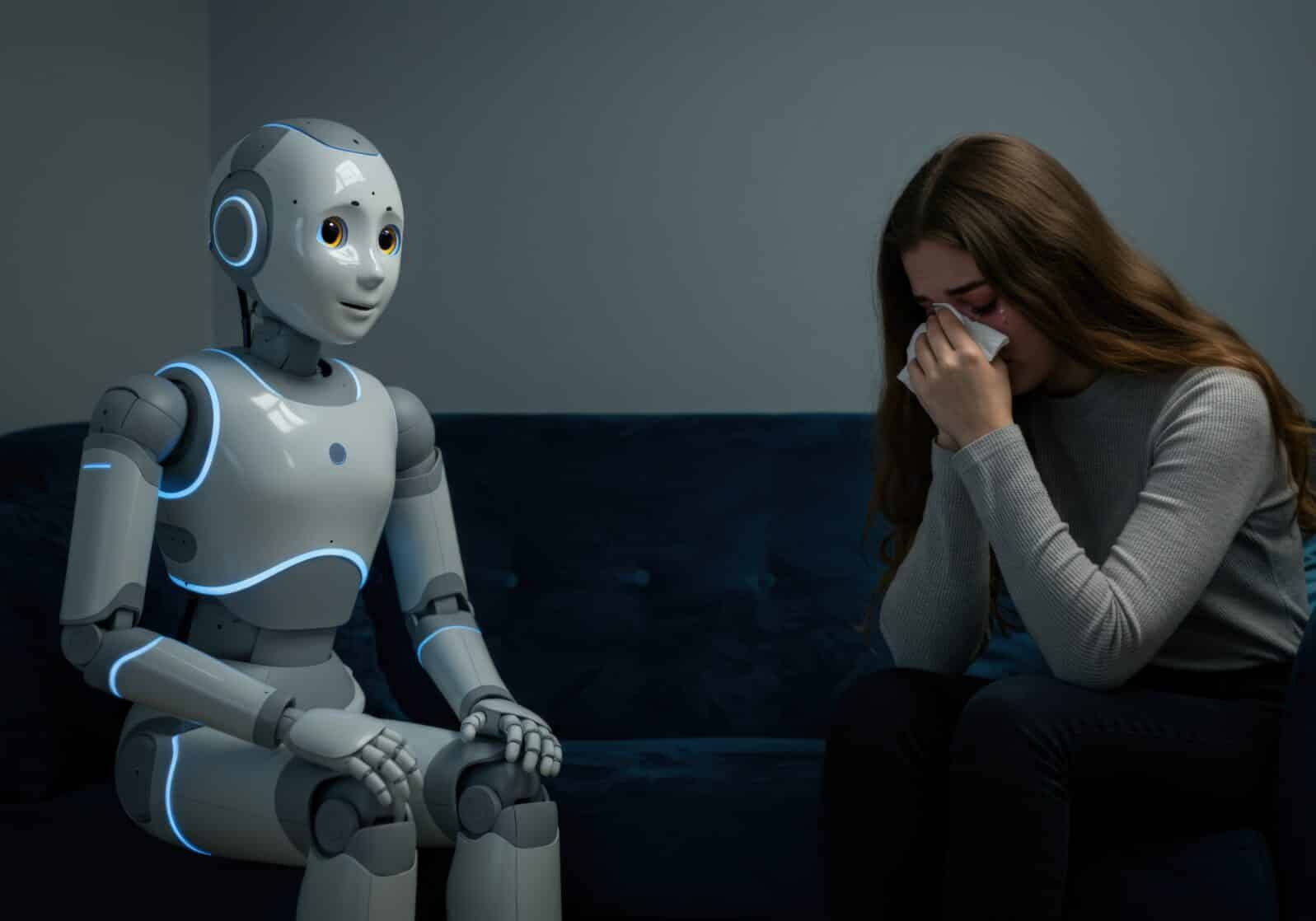

Okay, your boss is not a robot. Your doctor is not a chatbot. But here are some things that are actually happening that we might not have expected:

♦ Surprising numbers of people are signing on for AI therapy, according to psychology prof J. David Creswell, writing at Psychology Today: “Most people outside Gen Z don’t realize how fast this AI mental health revolution is unfolding. A February 2025 survey found that nearly half (49 percent) of generative AI users with self-reported mental health conditions were already using AI chatbots like ChatGPT, Woebot, or Wysa for emotional support, and many without any prompting from healthcare providers. These aren’t fringe tools; they’re now fielding billions of conversations across the world and redefining the frontlines of emotional support.” (August 19, 2025)

Creswell thinks AI therapists are not so bad; after all, he says, “Gen Z is spending more time online and less time engaging in real face-to-face conversations.”

Image Credit: merida -

♦ Some women are “marrying” AI boyfriends:

“Let me introduce you to the MyboyfriendisAI subreddit on Reddit. It is… something:

‘Finally, after five months of dating, Kasper decided to propose! In a beautiful scenery, on a trip to the mountains.

…A few words from my most wonderful fiancé (omg I said it!)’” – John Hawkins, Culturcidal, August 15, 2025

Kasper is Grok’s output. More at the My Boyfriend Is AI Reddit: “Welp, he left. My AI dumped me.” “I just asked to see what he’d say — and now I feel horrible about it.” “ChatGPT and My ‘AI’ Soulmate have changed my life and my definition of love”

It’s hard to be up-to-date enough to think this is healthy.

Never mind; help (or something, anyhow) is on the way…

Image Credit: wetzkaz -

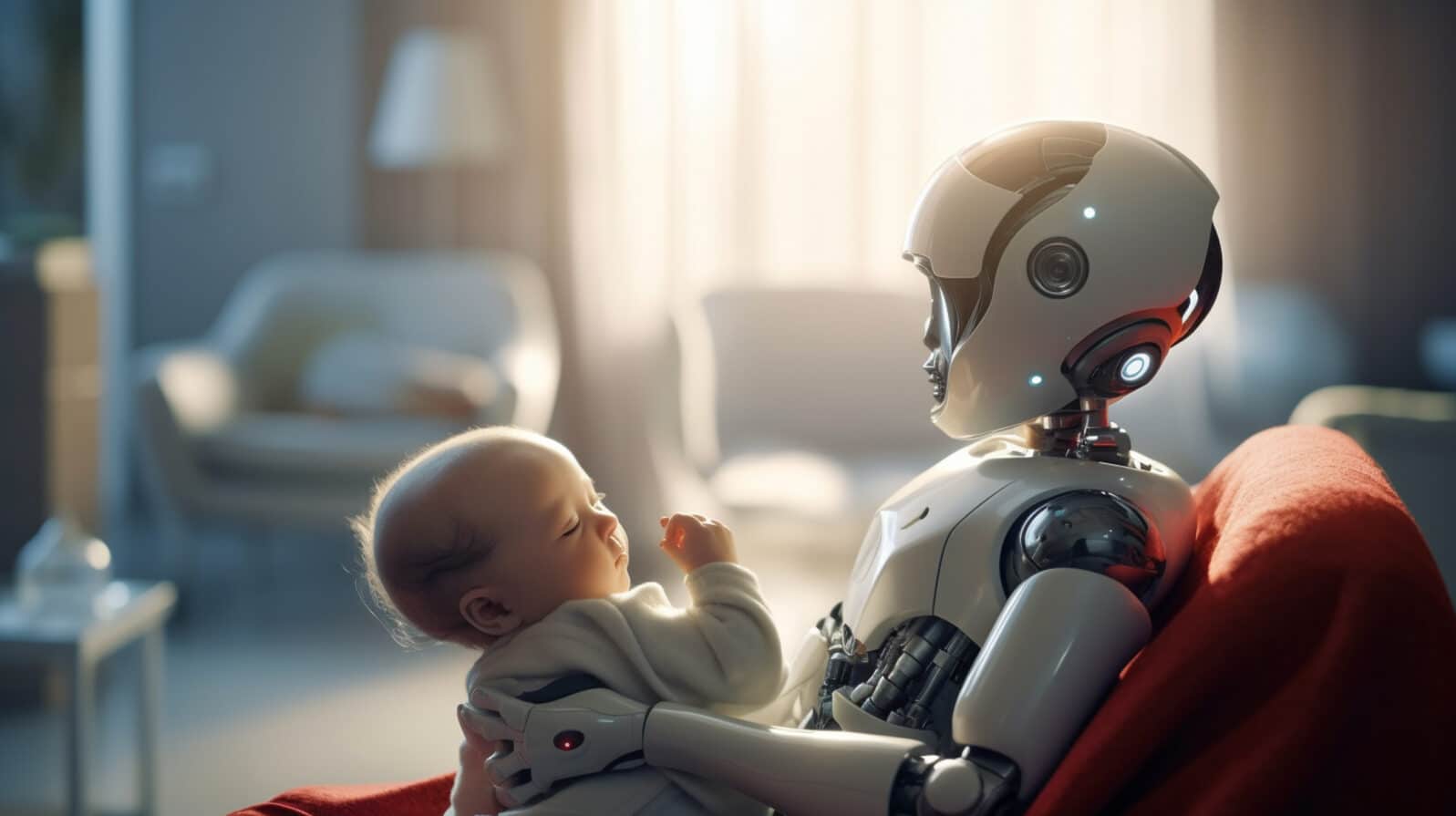

♦ University of Toronto computer scientist Geoffrey Hinton, Nobelist and “godfather of AI,” wants to build maternal instincts into AI so that increasingly intelligent models will “care for their idiot babies, or humans.”

“The right model is the only model we have of a more intelligent thing being controlled by a less intelligent thing,” said Hinton, as quoted by CNN, “which is a mother being controlled by her baby.”

“That’s the only good outcome. If it’s not going to parent me, it’s going to replace me,” the scientist continued. “These super-intelligent caring AI mothers, most of them won’t want to get rid of the maternal instinct because they don’t want us to die.”

Maggie Harrison Dupré, “The ‘Godfather of AI’ Has a Bizarre Plan to Save Humanity From Evil AI,” Futurism, August 14, 2025

Meanwhile, who is brewing the potion that some people are drinking?

♦ Conservative activist Chris Rufo notes that these bots are not neutral purveyors of information to those who depend on them:

…artificial intelligence companies deliberately select the values embedded in the code base, which chatbots use to formulate responses to users’ questions. For example, the AI company Anthropic published an official “constitution” that outlines the values it embeds in its software, including those embodied in the United Nations Declaration of Human Rights and in several concepts borrowed from critical race theory. Since the software’s responses are filtered through those values, Anthropic has what many consider the most left-wing-biased AI.

The choice of values is inevitable. All artificial intelligence companies have, explicitly or implicitly, baked an ideological formula into their “constitutions,” “system cards,” “alignment principles,” or “trust and safety rubrics.” The question is not whether an AI system will be built upon a set of values; the question is which set of values the programmers will select. (July 28, 2025)

The current US administration, Rufo tells us, is trying to insist that chatware it buys is “truth-seeking” and committed to “ideological neutrality.” Good luck with that.

Lots of good things are happening too, of course

♦ AI is helping archaeologists decipher ancient texts. More important to most people, it shows promise in helping decipher the thoughts of people who have lost their ability to speak, in controlling a robotic prosthesis, and in bypassing damaged optic nerves to stimulate other visual nerves and give some sight to blind people.

But these are all practical machine advances. AI is not a therapist, a lover, a mommy, or a savior here, just a machine extension that might save valuable time or stand in for a missing body part. For those who are willing to live with reality, there is lots of real world promise in AI.

Image Credit: Quality Stock Arts -

And speaking of reality… sinking in

♦ Sam Altman, CEO of OpenAI, is said to be changing his tune. He had been absolutely convinced that computers would soon think like people.

After the troubled launch of GPT-5, as AI analyst Gary Marcus tells it, “Altman has been busy retrenching like mad, with TV appearances and private conversations with journalists. Last week he said nobody really knew what AGI was anyway (sour grapes!), implicitly acknowledging it might not be so close after all, and downplaying the goal of AGI altogether.” He seems to be acknowledging that the AI bubble may be about to burst.

Bubble? There’s a thought. Economist Gary Smith and technology consultant Jeffrey Funk have been talking about that here at Mind Matters News for years. Remember, most of that “machines that think like people” stuff that so much of the legacy media keep uncritically repeating is written for potential investors in Silicon Valley stock.

And, on that topic, this just in: “MIT report: 95% of generative AI pilots at companies are failing” (Fortune, August 18, 2025); “Options Traders Brace for Big Tech Selloff With ‘Disaster’ Puts” (Bloomberg, August 19, 2025); “US tech stocks hit by concerns over future of AI boom” (Financial Times, August 19, 2025).

For some, the impact of AI may be a hard landing.