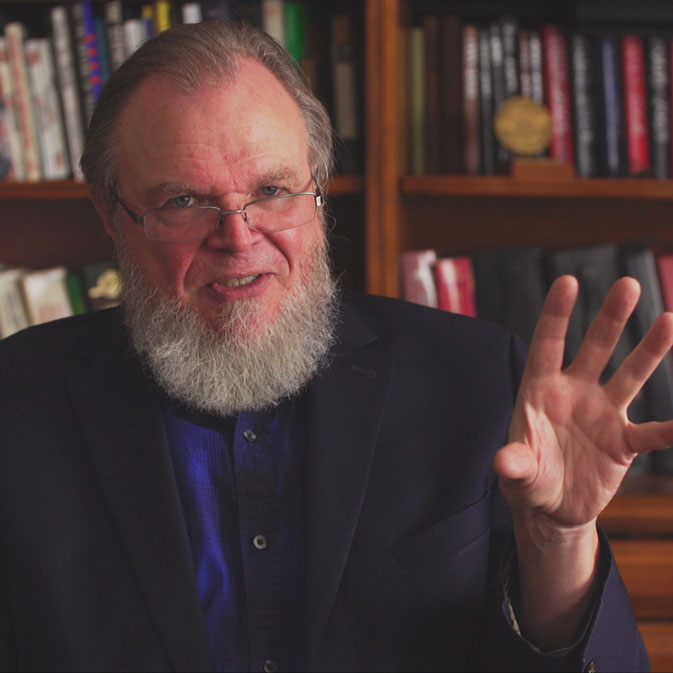

Robert J. Marks: Straight Talk About Killer Robots

Dr. Marks, the author of Killer Robots, shares his expertise with Gretchen Huizinga of the Beatrice Institute.

In the first segment of the recent podcast, “What Does It Mean to Be Human in an Age of Artificial Intelligence?”, Walter Bradley Center director Robert J. Marks discussed what artificial intelligence can and can’t do and its ethical implications with veteran podcaster Gretchen Huizinga. In the second segment, they discussed “How did all the AI hype get started?” Then, in this third part, the discussion turned to the use of artificial intelligence in warfare. Dr. Marks is the author of The Case for Killer Robots, which looks at the issues raised in some detail. Here he gives a brief overview.

The entire interview was originally published by the Beatrice Institute, a Christian think tank (March 3, 2022), and is repeated here with their kind permission:

Here’s a partial transcript of the third segment, beginning at 28:44 min, with notes, Show Notes, and Additional Resources:

Robert Marks: I think that we only need to look at history to see the foolishness in not developing everything that we can technologically. Technical superiority in nations wins conflict. Even in the absence of hot conflict, it gives pause to people who would do us harm.

There is, for example, a Stop the Killer Robots movement, trying to diminish the development of autonomous killer robots as they call them…

Robert Marks: If you have a drone swarm of, say, 1,000 drones attacking you, you can take out 90% of them and that drone swarm still completes its mission. And that to me is very scary. So swarms are very resilient to attack. That gave me pause for a long time.

But the beauty of the technology in the United States, as long as it’s supported, is that it will come up with ways of countering that. How do you take out a swarm of drones? Israel came up with the idea of shooting a laser and maybe taking out a drone swarm one at a time. Well, that would just take too long. And then Russia has come out with an EMP cannon…

Gretchen Huizinga: I’m not sure that we are all familiar with that.

Robert Marks: EMP stands for electromagnetic pulse. If they put up a thermonuclear bomb sufficiently high above the United States, above Kansas, it could disable our entire power grid.

EMPs fry your electronics. Why is that? Because if you think of your cell phone, the phone receives these very, very small signals, microwave signals, and turns them into audio and video and everything of that sort. So, it takes microwave signals from the air, converts them to electricity, and does its magic. Imagine, though, that the signal is increased a billion times. That energy which is going to be introduced to the little wires in your cell phone literally fries your cell phone. And that’s the problem with the EMPs. So, EMPs give me pause, especially with China’s new launch of this supersonic missile. At a push of the button, they could take out our entire power grid.

But getting back to swarms and our resilience towards swarms, Russia came out with this EMP cannon, which is a big antenna that generates an EMP pulse. Imagine this EMP pulse going into an attacking drone swarm. This EMP pulse would just be like spraying bug spray into a swarm of gnats attacking you and all the gnats would fall down. So the swarm idea bugged me for a long time until I heard of Russia’s solution. All of a sudden, I feel a lot better and hope that the United States is pursuing a similar sort of technology.

This is the problem with the arms race. People come up with different devices, including AI, but then there need to be countermeasures. This is terrible. I wish it didn’t exist, but it’s existed throughout history and will exist forever because of man’s fallen nature. That’s the reason that I support the development of so-called killer robots.

By the way, one last thing: There are autonomous killer robots available. Israel has something called the HARPY missile that can do totally autonomous missions. It goes over a battlefield and loiters; it waits till it’s illuminated by radar. When it’s illuminated by radar, it follows the radar beam back to the installation and takes out the radar installation. All of this can be done autonomously without human intervention. So, make no mistake; these autonomous robots are already among us.

Gretchen Huizinga: Yeah. And I think that goes back to our worldview. The people that are saying we shouldn’t be making them are not factoring in that other people are. And again, with the fallen nature, we’re not going to change human nature. So this whole defense system is necessary.

Robert Marks: Yeah. For example, even the best treaties in the world… do you think that the North Korean dictator is going to follow a nuclear arms treaty? What about the dictator in Syria? Is he going to follow a biological weapons ban? No, he’s not. No matter what we do in terms of treaties, it isn’t going to work. And many of these people, including Iran, are developing these weapons covertly, behind the scenes. So yeah, it’s important we stay on top of it.

Gretchen Huizinga: Well, as you know, I’m also part of an organization called AI and Faith. And we’ve mostly been talking about AI, but I’d like to focus for a minute on the faith side of things. And the prevailing worldview in high tech is notably skeptical of, if not openly hostile to, the Christian faith. I know there are many Christians working in AI and they care deeply about AI from an ethical perspective. So, you’ve talked a bit about the different ends of the ethical discussion. How should Christians inform both design ethics and end user ethics? And can we even?

Robert Marks: Ethics?… I think you divided it well. There is design ethics. This is for the engineer. I think that design ethics is pretty well agnostic. The design engineer is supposed to develop AI and the question is whether this AI — autonomous or not — does what it was designed to do and no more.

This takes a lot of domain expertise, and it takes testing. And that testing must be done with a degree of design expertise. And once your product is done, you can go to your customer and say, “This AI does what you asked it to do and it does no more.” And that’s the design ethics. This is in contrast with end user ethics.

End user [ethics] would be, for example, a commander in the field who sees a certain scenario and wonders whether or not he should use his AI weapons in the conflict, given the circumstances. It also has to do with a lot of ethical questions.

Now, I have attended a lot of ethics lectures. Some are secular ethics. And I always found the secular ethics were kind of built on sand because they use things such as community standard and things of that sort. If you go back to Germany, there it was considered ethical to do terrible things to Jews. Right? That was part of their ethics. And so, I think that any ethics which is built on a secular view is going to be built on sand.

I think though, if you go to the Judaeo-Christian sort of foundation, that you’re building it more on a rock. Even there, I see disagreements as the ethical end user, but I see a lot greater consistency and at least a reference which you can give to substantiate your use of whatever you’re using.

Here are all portions of the discussion in order:

Robert J. Marks: Zeroing in on what AI can and can’t do. Walter Bradley Center director Marks discusses what’s hot and what’s not in AI with fellow computer maven Gretchen Huizinga. One of Marks’s contributions to AI was helping develop the concept of “active information,” that is, the detectable information added by an intelligent agent.

Robert J. Marks: AI history — How did all the hype get started? Dr. Marks and Gretchen Huizinga muse on the remarkable inventors who made AI what it is, and isn’t, today. Dr. Marks points out that the hype over AI is so intense that, if his car were AI, he could call it “self-aware” because it beeps when nearing an obstacle.

Robert J. Marks: Straight talk about killer robots: Dr. Marks, the author of Killer Robots, shares his expertise with Gretchen Huizinga of the Beatrice Institute. In Marks’s view, no discussion of the rights or wrongs of using autonomous drones in warfare is meaningful apart from what we know about what others are doing.

and

Lead us not into the Uncanny Valley… Robert Marks and Gretchen Huizinga discuss whether future developments in artificial intelligence will lead to a better future or a worse one.

Marks agrees with business analyst John Tamny that AI can enable us all to do work for which we are uniquely fitted. Otherwise, it’s the Uncanny Valley.

Show Notes

- 01:32 | Introducing Dr. Robert J. Marks

- 02:38 | The Difference Between Artificial and Natural Intelligence

- 06:31 | The Goldilocks Position

- 07:40 | The Challenges and Limitations to AI

- 14:42 | The Legacy of Walter Bradley

- 18:55 | The Difference Between Computational and Artificial Intelligence

- 24:22 | What is Hope and What is Hype?

- 28:44 | What Keeps Dr. Robert J. Marks Up at Night?

- 34:26 | AI and Faith

- 36:56 | Is Flourishing Bad and Friction Good?

- 40:45 | The Personal Mission of Dr. Robert J. Marks

Additional Resources

- Gretchen Huizinga

- Robert J. Marks

- The Beatrice Institute

- AI and Faith

- Who was Alan Turing?

- Who was Alonzo Church?

- The Church-Turing Thesis

- Who is Walter Bradley?

- Buy For a Greater Purpose: The Life and Legacy of Walter Bradley

- Buy The Case For Killer Robots

- More on Drone Swarms

- More on EMPs