Chaitin’s Number Talks To Turing’s Halting Problem

Why is Chaitin’s number considered unknowable even though the first few bits have been computed?

In last week’s podcast, “The Chaitin Interview V: Chaitin’s Number,” Walter Bradley Center director Robert J. Marks continued his conversation with mathematician Gregory Chaitin (best known for Chaitin’s unknowable number) on a variety of things mathematical. Last time, they looked at whether the unknowable number is a constant and how one enterprising team has succeeded in calculating at least the first 64 bits. This time, they look at the vexing halting problem in computer science, first identified by computer pioneer Alan Turing in 1936.

This portion begins at 07:16 min. A partial transcript, Show Notes, and Additional Resources follow.

Robert J. Marks: Well, here’s a question that I have. I know that the Omega or Chaitin’s number is based on the Turing halting problem, which says that there can be no program written to analyze generally another arbitrary program to say whether it halts or it runs forever. Wouldn’t you have to, in a way, solve the halting problem for smaller programs in order to get Chaitin’s number?

Gregory Chaitin: Yeah. Well, if you had the first n bits in base 2 of the numerical value of a halting probability Omega, it’s very straightforward, but very time-consuming, to solve the whole problem for all programs up to n bits in size. In a way, the halting probability Omega is a very compressed form of answers to individual cases of the halting problem. Knowing n bits of the value of the halting probability tells you, for 2^n individual programs (all the programs up to n bits in size), whether each one halts or not, as it’s easy to see. One way to put it, from an engineering point of view, it’s the maximum compression of the answers to individual cases of the halting problem.

It’s provably the best possible compression of all the answers of bits of the halting problem. Solving the halting problem for all programs up to n bits in size cannot be done in less than n bits. So you get a paradox. Omega does it in precisely n bits. That is the best possible compression. It’s like a crystallized essence of answers to the halting problem, the best possible irreducible compression of all the answers.
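Chaitin’s “very straightforward, but very time-consuming” procedure can be sketched concretely. Omega adds up 2^-|p| over every program p that halts on a fixed self-delimiting (prefix-free) universal machine, where |p| is the program’s length in bits. If Omega_n is Omega truncated to its first n bits, then Omega_n ≤ Omega < Omega_n + 2^-n. Run all programs in parallel (dovetailing), adding 2^-|p| whenever one halts, until the running total reaches Omega_n. Any program of n bits or fewer that is still running at that point never halts, because its halting would push Omega to at least Omega_n + 2^-n. The Python below is a minimal sketch of that argument under loud assumptions. A real universal machine cannot be tabulated, so the sketch uses a hypothetical five-program toy machine with made-up halting times. The names TOY_MACHINE, halts_within, toy_omega_bits, and decide_halting are our own.

```python
import math
from fractions import Fraction
from typing import Callable, Dict, Iterable, Optional

# Hypothetical toy machine (NOT a real universal machine): each program is a
# bit string from a prefix-free set, mapped to the step at which it halts, or
# None if it runs forever. decide_halting() never reads this table directly;
# it only calls a step-limited simulator, as it would with a real machine.
TOY_MACHINE: Dict[str, Optional[int]] = {
    "0": None,     # runs forever
    "10": 5,       # halts after 5 steps
    "110": None,   # runs forever
    "1110": 2,     # halts after 2 steps
    "1111": 40,    # halts after 40 steps
}


def halts_within(program: str, steps: int) -> bool:
    """Stand-in simulator: has `program` halted within `steps` steps?"""
    halt_step = TOY_MACHINE[program]
    return halt_step is not None and halt_step <= steps


def toy_omega_bits(n: int) -> str:
    """First n bits of the toy machine's Omega. Only possible because the toy
    table is finite and fully known; for a real machine this is the oracle."""
    omega = sum((Fraction(1, 2 ** len(p)) for p, s in TOY_MACHINE.items()
                 if s is not None), Fraction(0))
    return format(math.floor(omega * 2 ** n), f"0{n}b")


def decide_halting(omega_bits: str, programs: Iterable[str],
                   run_for: Callable[[str, int], bool]) -> Dict[str, bool]:
    """Given the first n bits of Omega, decide halting for every program of
    length <= n. Loops forever if omega_bits overstates Omega."""
    n = len(omega_bits)
    omega_n = Fraction(int(omega_bits, 2), 2 ** n)  # Omega truncated to n bits
    programs = list(programs)
    t = 0
    while True:
        # Dovetailing: at stage t, run each program of length <= t for t steps
        # and add 2^-|p| for each one that has halted (a lower bound on Omega).
        halted = {p for p in programs if len(p) <= t and run_for(p, t)}
        lower = sum((Fraction(1, 2 ** len(p)) for p in halted), Fraction(0))
        if lower >= omega_n:
            break
        t += 1
    # A program of length <= n still running now never halts: if it did,
    # Omega >= lower + 2^-n >= omega_n + 2^-n, contradicting the known bits.
    return {p: p in halted for p in programs if len(p) <= n}


bits = toy_omega_bits(3)  # Omega = 1/4 + 1/16 + 1/16 = 3/8 = 0.011 in binary
print(bits, decide_halting(bits, TOY_MACHINE, halts_within))
# prints: 011 {'0': False, '10': True, '110': False}
```

With a real universal machine the procedure is just as mechanical, but the number of steps before the running total reaches Omega_n grows faster than any computable function of n, which is why it is “very time-consuming” in a way no hardware can overcome.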

Note: “A key step in showing that incompleteness is natural and pervasive was taken by Alan M. Turing in 1936, when he demonstrated that there can be no general procedure to decide if a self-contained computer program will eventually halt.” – Scientific American, 2006

Turing’s proof that the halting problem is unsolvable is described in Scientific American as a reductio ad absurdum: it assumes the opposite (that a general halting test exists) and shows that this assumption leads to a contradiction.
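The structure of that reductio can be illustrated in code. The Python sketch below is our own illustration, not Turing’s original formulation. A “program” is modeled as a Python generator function: each yield is one step, and finishing means halting. The names make_contrarian, halted_within, and refute are hypothetical. Given any claimed halting decider, the sketch builds a program that asks the decider about itself and then does the opposite, so the decider’s prediction about that program is wrong. Running it on two sample deciders cannot prove the theorem, which covers every possible decider. It only shows the construction at work.

```python
from typing import Callable, Iterator, Tuple

# Modeling choice for this sketch: a "program" is a zero-argument generator
# function. Each `yield` is one step; the generator finishing means halting.
Program = Callable[[], Iterator[None]]
# A claimed halting decider: True means "halts", False means "runs forever".
Decider = Callable[[Program], bool]


def halted_within(program: Program, steps: int) -> bool:
    """Run `program` for up to `steps` steps; True if it halted in that time."""
    run = program()
    for _ in range(steps + 1):  # one extra call is needed to observe halting
        try:
            next(run)
        except StopIteration:
            return True
    return False


def make_contrarian(claims_halts: Decider) -> Program:
    """Build the program from the reductio: it asks the decider about itself
    and then does the opposite of the prediction."""
    def contrarian() -> Iterator[None]:
        if claims_halts(contrarian):
            while True:  # predicted "halts", so loop forever
                yield
        # predicted "runs forever", so fall through and halt at once
    return contrarian


def refute(claims_halts: Decider, budget: int = 1_000) -> Tuple[bool, bool]:
    """Return (prediction, observed) for the decider's own contrarian.
    Assumes the decider is deterministic, so it answers the same both times."""
    contrarian = make_contrarian(claims_halts)
    prediction = claims_halts(contrarian)
    # If the prediction is True, the contrarian is stuck in `while True` and
    # never halts; if False, it halts on its very first step.
    observed = halted_within(contrarian, budget)
    return prediction, observed


for decider in (lambda p: True, lambda p: False):
    prediction, observed = refute(decider)
    print(f"decider says halts={prediction}; contrarian halted={observed}")
```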

Robert J. Marks: Even though a few bits have been computed of Chaitin’s number, it is advertised as unknowable…?

Gregory Chaitin: Yeah, because you can show that an n-bit program can’t calculate more than the first n bits. An n-bit mathematical theory can’t enable you to prove what more than the first n bits are. It’s logically and computationally unknowable. If the tool you’re using has n bits, you’re only going to be able to get, at most, n bits of the numerical value. After that, all the rest of the infinite number of remaining bits look just totally random and totally unstructured and you’ll never know them.

Robert J. Marks: That’s fascinating.

Gregory Chaitin: It’s fun. I’m surprised that it became such a hit in a way.
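Chaitin’s informal “n bits” can be stated more precisely. The following is a hedged paraphrase of the standard formulation, not a quotation from the interview. Omega is defined relative to a fixed self-delimiting universal machine U, and |p| is the length of program p in bits. H(F) is the length of the shortest program that lists the theorems of a sound formal theory F. The bound carries an additive constant c that depends on U:

$$
\Omega_U = \sum_{p \,:\, U(p)\ \text{halts}} 2^{-|p|}, \qquad 0 < \Omega_U < 1
$$

$$
H(F) \le n \;\Longrightarrow\; F \text{ can determine at most } n + c \text{ bits of } \Omega_U
$$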

Robert J. Marks: Well, but think about that, when you tell somebody that there are all of these open problems in mathematics that require a single counterexample, like Goldbach’s Conjecture, Collatz Conjecture and Legendre’s Conjecture. These are all problems with big prizes. Big monetary prizes. You have postulated, in theory, a number — Chaitin’s number Omega. A single number between zero and one that solves all of these problems, at least in a philosophical realm. That is astonishing.

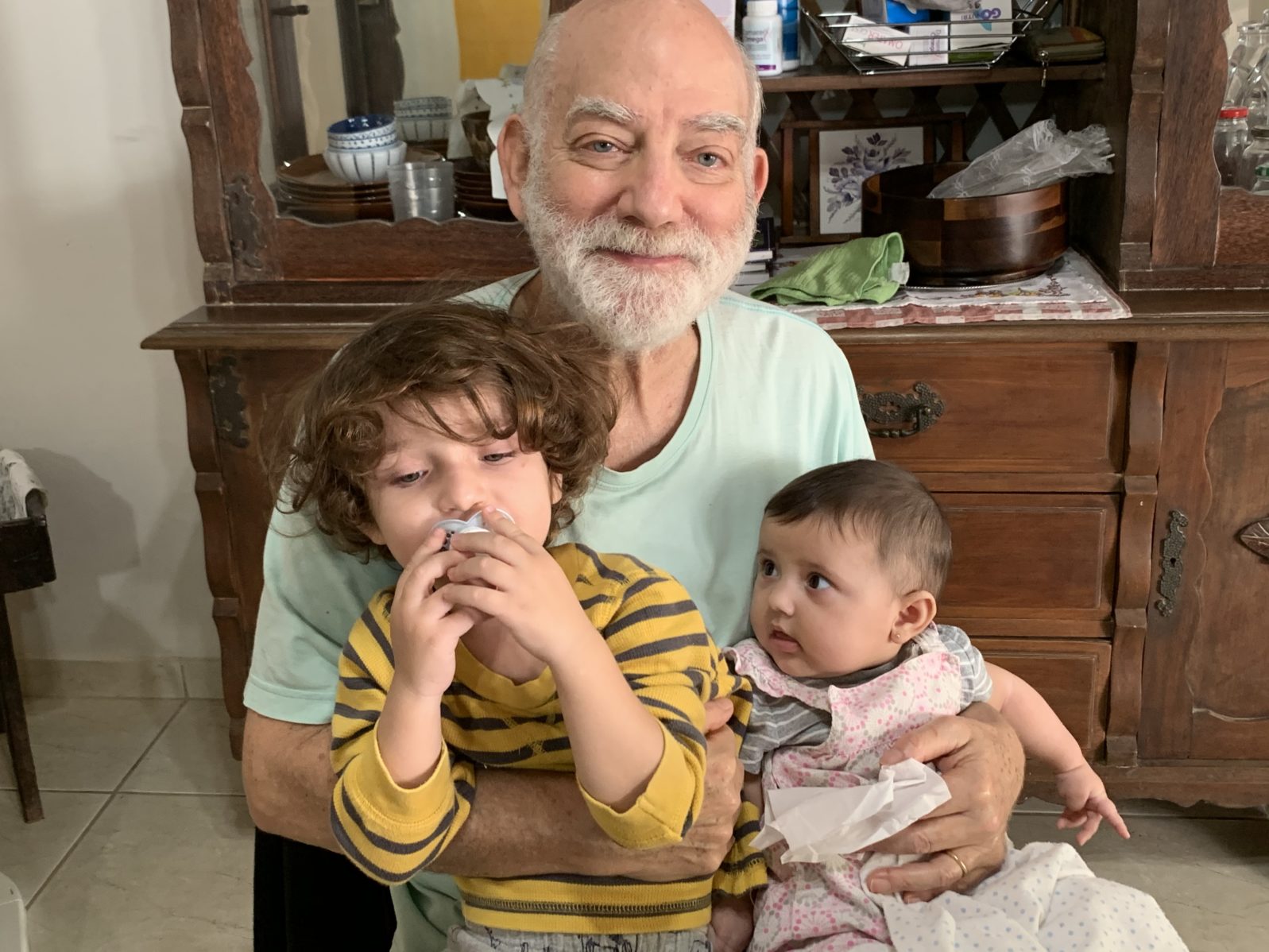

Gregory Chaitin (pictured with his children): I hope it’ll inspire young people.

Engineering is great, wonderful. I’ve worked as an engineer. Elon Musk is my hero. But pure mathematics is also one of my loves and maybe Omega will inspire some young people to become mathematicians, pure mathematicians. It may also give people an interest in philosophical questions instead of practical questions.
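Marks’s point about conjectures settled by a single counterexample can be made concrete with Goldbach’s Conjecture, which says every even number greater than 2 is the sum of two primes. A program that searches for a counterexample halts if and only if the conjecture is false. In principle, then, enough bits of Omega would settle it: at least as many as the length of that search program, encoded for the same universal machine. The minimal Python sketch below is our own illustration with hypothetical function names. The optional limit exists only so that it can actually be run.

```python
from itertools import count
from typing import Iterable, Optional


def is_prime(k: int) -> bool:
    """Trial-division primality test (adequate for small k)."""
    if k < 2:
        return False
    if k % 2 == 0:
        return k == 2
    d = 3
    while d * d <= k:
        if k % d == 0:
            return False
        d += 2
    return True


def is_sum_of_two_primes(n: int) -> bool:
    """True if the even number n can be written as p + q with p, q prime."""
    return any(is_prime(p) and is_prime(n - p) for p in range(2, n // 2 + 1))


def first_goldbach_counterexample(limit: Optional[int] = None) -> Optional[int]:
    """Search 4, 6, 8, ... for an even number that is NOT a sum of two primes.

    With limit=None the search is unbounded: this program halts if and only if
    Goldbach's Conjecture is false. A finite limit makes it safe to run.
    """
    evens: Iterable[int] = count(4, 2) if limit is None else range(4, limit + 1, 2)
    for n in evens:
        if not is_sum_of_two_primes(n):
            return n
    return None


print(first_goldbach_counterexample(10_000))  # prints: None
```

Running it up to 10,000 finds no counterexample. That is consistent with the conjecture but, like any finite search, proves nothing about it.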

Next: Why “impractical” things like philosophy are actually quite useful

Don’t miss the stories and links from the previous podcasts:

From Podcast 5:

Is Chaitin’s unknowable number a constant? One mathematics team has succeeded in calculating the first 64 bits of a Chaitin Omega number. Gregory Chaitin explains that it’s more complicated than that; it depends on whether you use a universal Turing machine that allows for very concise programs.

From Podcast 4:

Can mathematics help us understand consciousness? Gregory Chaitin asks, what if the universe is information, not matter? Some philosophers see the universe as created by mathematics, not matter. Gregory Chaitin prefers to see it as created by information. God is then a programmer.

Why human creativity is not computable. There is a paradox involved with computers and human creativity, something like Gödel’s Incompleteness Theorems or the Smallest Uninteresting Number. Creativity is what we don’t know. Once it is reduced to a formula a computer can use, it is not creative any more, by definition.

The paradox of the first uninteresting number. Robert J. Marks sometimes uses the paradox of the smallest “uninteresting” number to illustrate proof by contradiction — that is, by creating paradoxes. Gregory Chaitin: You can sort of go step by step from the paradox of the smallest “uninteresting” number to a proof very similar to mine.

Why the unknowable number exists but is uncomputable. Sensing that a computer program is “elegant” requires discernment. Proving mathematically that it is elegant is, Chaitin shows, impossible. Gregory Chaitin walks readers through his proof of unknowability, which is based on the Law of Non-Contradiction.

Getting to know the unknowable number (more or less). Only an infinite mind could calculate each bit. Gregory Chaitin’s unknowable number, the “halting probability omega,” shows why, in general, we can’t prove that programs are “elegant.”

Image Credit: dTosh -

From Podcast 3:

A question every scientist dreads: Has science passed the peak? Gregory Chaitin worries about the growth of bureaucracy in science: You have to learn from your failures. If you don’t fail, it means you’re not innovating enough. Robert J. Marks and Gregory Chaitin discuss the reasons high tech companies are leaving Silicon Valley for Texas and elsewhere.

Gregory Chaitin on how bureaucracy chokes science today. He complains: They’re managing to make it impossible for anybody to do any real research. You have to say in advance what you’re going to accomplish. You have to have milestones, reports. In Chaitin’s view, a key problem is that the current system cannot afford failure, yet the risk of some failures is often the price of later success.

How Stephen Wolfram revolutionized math computing. Wolfram has not made computers creative but he certainly took a lot of the drudgery out of the profession. Gregory Chaitin also discusses the amazing ideas early mathematicians developed without the software-based methods we are so lucky to have today.

Why Elon Musk, and others like him, can’t afford to follow rules. Mathematician Gregory Chaitin explains why Elon Musk is, perhaps unexpectedly, his hero. Very creative people like Musk often have quirks and strange ideas (Gödel and Cantor, for example) which do not prevent them from making major advances.

Why don’t we see many great books on math any more? Decades ago, Gregory Chaitin reminds us, mathematicians were not forced by the rules of the academic establishment to keep producing papers, so they could write key books. Chaitin himself succeeded with significant work (see Chaitin’s Unknowable Number) by working in time spared from IBM research rather than the academic rat race.

Mathematics: Did we invent it or did we merely discover it? What does it say about our universe if the deeper mathematics has always been there for us to find, if we can? Gregory Chaitin, best known for Chaitin’s Unknowable Number, discusses the way deep math is discovered whereas trivial math is merely invented.

From the transcripts of the second podcast: Hard math can be entertaining — with the right musical score! Gregory Chaitin discusses with Robert J. Marks the fun side of solving hard math problems, some of which come with million-dollar prizes. The musical Fermat’s Last Tango features the ghost of mathematician Pierre de Fermat pestering the math nerd who solved his unfinished Last Conjecture.

Chaitin’s discovery of a way of describing true randomness. He found that concepts from computer programming worked well because, if the data is not random, the program should be smaller than the data. So, Chaitin on randomness: The simplest theory is best; if no theory is simpler than the data you are trying to explain, then the data is random.

How did Ray Solomonoff kickstart algorithmic information theory? He started off the long pursuit of the shortest effective string of information that describes an object. Gregory Chaitin reminisces on his interactions with Ray Solomonoff and Marvin Minsky, fellow founders of Algorithmic Information Theory.

Gregory Chaitin’s “almost” meeting with Kurt Gödel. This hard-to-find anecdote gives some sense of the encouraging but eccentric math genius. Chaitin recalls, based on this and other episodes, “There was a surreal quality to Gödel and to communicating with Gödel.”

Gregory Chaitin on the great mathematicians, East and West: Himself a “game-changer” in mathematics, Chaitin muses on what made the great thinkers stand out. Chaitin discusses the almost supernatural awareness some mathematicians have had of the foundations of our shared reality in the mathematics of the universe.

and

How Kurt Gödel destroyed a popular form of atheism. We don’t hear much about logical positivism now but it was very fashionable in the early twentieth century. Gödel’s incompleteness theorems showed that we cannot devise a complete set of axioms that accounts for all of reality — bad news for positivist atheism.

You may also wish to read: Things exist that are unknowable: A tutorial on Chaitin’s number (Robert J. Marks)

and

Five surprising facts about famous scientists we bet you never knew: How about juggling, riding a unicycle, and playing bongo? Or catching criminals or cracking safes? Or believing devoutly in God… (Robert J. Marks)

Show Notes

- 00:27 | Introducing Gregory Chaitin and Chaitin’s number

- 01:32 | Chaitin’s number or Chaitin’s constant?

- 07:16 | Must the halting problem be solved for smaller programs in order to get Chaitin’s number?

- 09:50 | The usefulness of philosophy and the impractical

- 17:17 | Could Chaitin’s number be calculated to a precision which would allow for a proof or disproof of something like Goldbach’s Conjecture?

- 19:20 | The Jump of the Omega Number

Additional Resources

- Gregory Chaitin’s Website

- Unravelling Complexity: The Life and Work of Gregory Chaitin, edited by Shyam Wuppuluri and Francisco Antonio Doria

- Elements of Information Theory by Thomas Cover and Joy Thomas

- “On the length of programs for computing finite binary sequences,” by Gregory Chaitin, published when he was a teenager (Journal of the ACM (JACM) 13, no. 4 (1966): 547–569).

- Chris Calude, professor at the University of Auckland

- Gottfried Wilhelm Leibniz, German Enlightenment philosopher, mathematician, and political adviser

- Stephen Wolfram, computer scientist and physicist

- Goldbach Conjecture

- Collatz Conjecture

- Legendre’s Conjecture

- Elon Musk, engineer and entrepreneur

- G.H. Hardy, English mathematician

- Ray Solomonoff, one of the founders of algorithmic information theory

- Hector Zenil, computational natural scientist

- Automacoin

- Bitcoin

- Marvin Minsky, cognitive and computer scientist

- George Gilder, economist and co-founder of Discovery Institute

- Twin Prime Conjecture

- Georg Cantor, mathematician

- BBC 4’s Dangerous Knowledge