Jonathan Bartlett recalls the Rise and Fall of PlayStation 3 Supercomputers

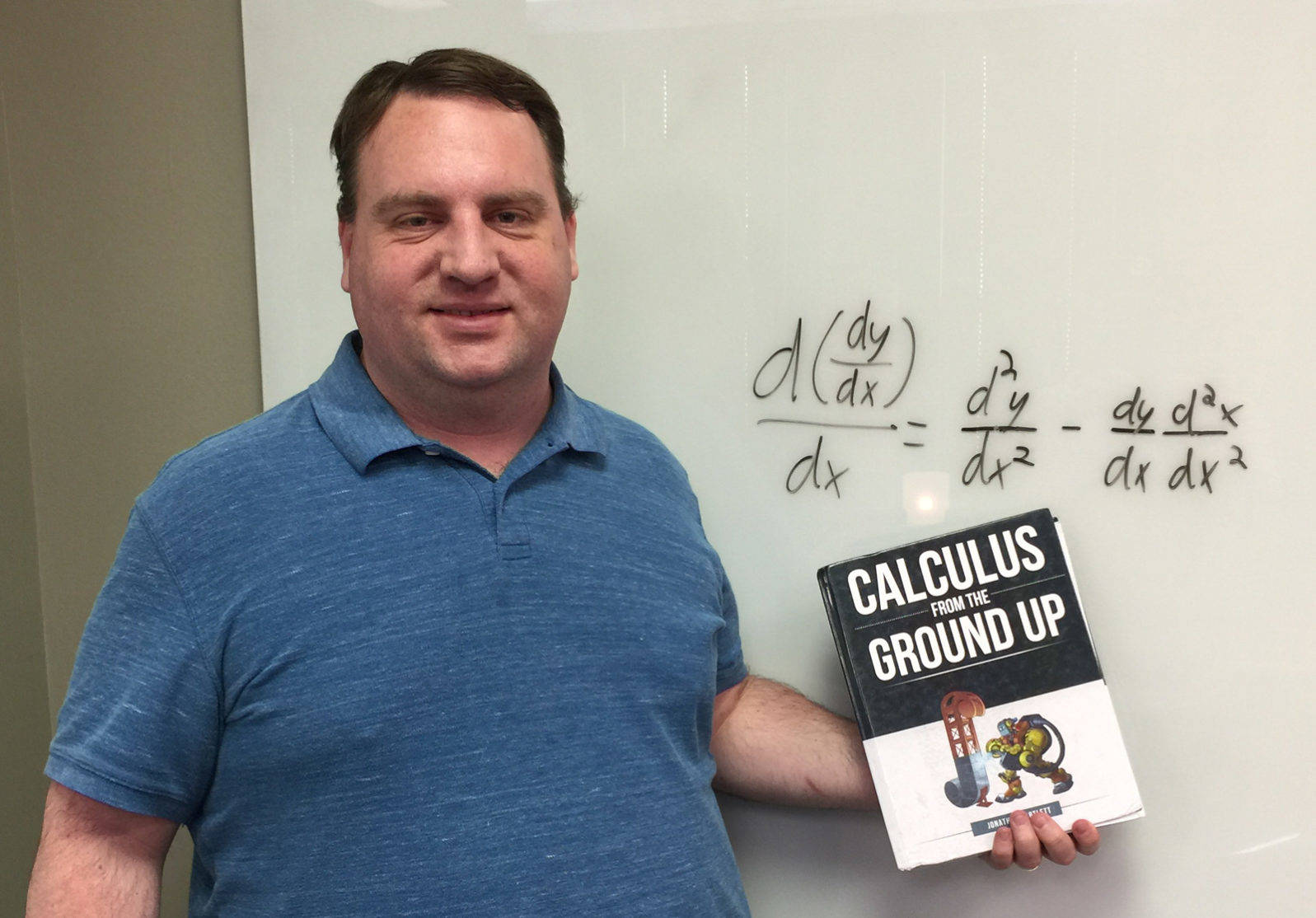

And how, at one point, he got kicked out of a WalMart on that account. Hey, high tech means vulnerability

A recent article at The Verge recounts the rise and fall of supercomputers made out of game consoles, specifically the PlayStation 3. Bradley Center fellow Jonathan Bartlett (below, pictured with his recent calculus textbook) sits down with Mind Matters News to discuss his own role in that entertaining history:

Mind Matters News: Jonathan, of all the things we could use to build supercomputers, why the craze for PlayStation 3s?

Jonathan Bartlett: First, the habit of creating supercomputers from commodity computers was popularized in the late 1990s. These supercomputers are known as Beowulf clusters, named after the original supercomputer built in this fashion. They were typically running Linux because at the time it was one of the few operating systems that did not charge licensing fees. That greatly reduced the cost of building these clusters.

When the PlayStation 3 came out, there was no other computer like it. It used a brand new processor built by IBM, the Cell Broadband Engine. This computer chip was especially advanced for its time (late 2006/early 2007) because it had a total of 9 cores (8 usable). The primary core was an SMT-capable PowerPC chip. Attached to this chip were 8 specialized processors called “Synergistic Processing Elements” or SPEs for short. The SPEs were interesting because they were a mix between a general processor and a vector processor, kind of like a mini graphics card.

author supplied

MM: Why were only 8 of the cores usable?

JB: The chip was pretty advanced for its day, and hard to manufacture. The manufacturing yields were reported to be only around 20% (typical chips have yields of around 95%). However, the design of the processor meant that it could still function if one of its SPEs was inoperable. So, to increase yields, they had an agreement with Sony that the PlayStation would only use 7 of the SPEs, plus the main PowerPC core. After manufacture, if there was a problem with just one SPE on the chip, they would simply disable that SPE. A chip wasn’t counted as defective unless there were two or more broken SPEs, or the PowerPC core was broken. If the chip was perfect, they would actually disable one of the working SPEs in order to make it uniform with the rest. I’m not aware of any particular numbers, but my guess is that this at least doubled their yields.
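The "at least doubled" guess can be checked against a toy model. The sketch below is only an illustration under stated assumptions, not reported manufacturing data: it assumes each of the 8 SPEs is defective independently with the same hypothetical probability `p_defect`, and it ignores defects in the PowerPC core. Under those assumptions, tolerating one bad SPE multiplies the yield by 1 + 8p/(1 - p), so the yield doubles once the per-SPE defect probability reaches 1/9.

```python
from math import comb


def yield_with_tolerance(p_defect, n_units=8, max_bad=0):
    """Probability that at most `max_bad` of `n_units` units are defective.

    Assumes (hypothetically) independent defects with equal probability.
    """
    return sum(
        comb(n_units, k) * p_defect**k * (1 - p_defect) ** (n_units - k)
        for k in range(max_bad + 1)
    )


def yield_gain(p_defect, n_units=8):
    """Ratio of yield when one bad unit is tolerated to yield when none is."""
    strict = yield_with_tolerance(p_defect, n_units, max_bad=0)
    tolerant = yield_with_tolerance(p_defect, n_units, max_bad=1)
    return tolerant / strict


if __name__ == "__main__":
    # Illustrative per-SPE defect probabilities, not measured values.
    for p in (0.05, 1 / 9, 0.20):
        print(f"p_defect={p:.3f}  gain={yield_gain(p):.2f}x")
```

In this model, whether the policy doubles the yield depends entirely on how often a single SPE fails, which the interview does not state.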

MM: Tell us more about vector processors and why they are important.

JB: Vector processors speed up computation. They allow you to perform the same instruction on multiple pieces of data, with a single operation. These operations are known as SIMD (single instruction, multiple data) operations. The Cell was built so that it could operate on up to 16 values at once. So, essentially, for these instructions, you get a 16x speedup. Not only did each individual SPE have instructions that could boost speeds up to 16x, you had 8 of them available. That was especially appealing for two uses: high-speed graphics and scientific calculations.
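To make the SIMD idea concrete, here is a minimal sketch in plain Python. It does not use real vector hardware; it only counts how many "instructions" are issued when the same addition is applied one value at a time versus 16 values at a time. The lane count of 16 comes from the answer above; treating each lane as a single byte, and the function names, are assumptions made for illustration.

```python
LANES = 16  # values handled per simulated SIMD instruction (from the interview)


def scalar_add(a, b):
    """Add element by element; one simulated instruction per value."""
    out, ops = [], 0
    for x, y in zip(a, b):
        out.append((x + y) & 0xFF)  # assumption: each value is one byte
        ops += 1
    return out, ops


def simd_add(a, b, lanes=LANES):
    """Add in groups of `lanes`; one simulated instruction per group."""
    out, ops = [], 0
    for i in range(0, len(a), lanes):
        group_a, group_b = a[i:i + lanes], b[i:i + lanes]
        out.extend((x + y) & 0xFF for x, y in zip(group_a, group_b))
        ops += 1
    return out, ops


if __name__ == "__main__":
    a = list(range(64))
    b = [1] * 64
    scalar_result, scalar_ops = scalar_add(a, b)
    simd_result, simd_ops = simd_add(a, b)
    print(scalar_result == simd_result, scalar_ops, simd_ops)
```

The results are identical either way; only the instruction count changes, which is where the "up to 16x" figure comes from. Real speedups are lower whenever the data does not fill every lane.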

MM: So why did the Cell Processor never take over the market for processors? Hasn’t IBM largely stepped away from the Cell processor?

JB: This is true. There haven’t been any developments in the Cell since around 2009, and I’m not even sure that the Cell is available anymore. The problem was that the Cell was really hard to program for. The SPEs had extremely tiny memories, and the communication between the main PowerPC processor and the SPEs was pretty tedious. In order to get the massive speed boosts that the platform promised, both your problem and your program had to be written in such a way as to take advantage of it. That was not an easy task, and certain limitations in the hardware design made it even harder.

Later advances in graphics cards and tools to program them have largely left the Cell as a solution without a problem. You can buy multicore general-purpose processors and install multicore graphics cards, giving you essentially the same setup. On top of this, because every computer has a graphics card, the tooling to make use of this environment is much better. So programming for CPU + Graphics Card is much, much easier than trying to build for the Cell. While it is certainly possible that the price/performance of the Cell is superior to the more popular approach, IBM released the chip before it released good tools to take advantage of the Cell.
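The tiny SPE memories mentioned above are a large part of why programs had to be restructured. A hedged sketch of the general pattern: data that does not fit in the small local memory is brought in one chunk at a time, processed, and written back. The function name, the `chunk_items` parameter, and the list slicing that stands in for a DMA transfer are all hypothetical; the real Cell toolchain used explicit DMA calls from C.

```python
def process_in_chunks(data, kernel, chunk_items):
    """Apply `kernel` to `data` one chunk at a time.

    `chunk_items` stands in for how many values fit in the small local
    memory. Returns the combined results and the number of transfers.
    """
    if chunk_items <= 0:
        raise ValueError("chunk_items must be positive")
    results, transfers = [], 0
    for start in range(0, len(data), chunk_items):
        local = data[start:start + chunk_items]  # stands in for a DMA "get"
        results.extend(kernel(local))            # stands in for a DMA "put"
        transfers += 1
    return results, transfers


if __name__ == "__main__":
    squares, transfers = process_in_chunks(
        list(range(10)), lambda chunk: [x * x for x in chunk], chunk_items=4
    )
    print(squares, transfers)
```

The smaller the local memory, the more transfers the same job needs, and each transfer has to be scheduled by the programmer rather than by the hardware.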

MM: So what was your role in all of this?

JB: I came into this area entirely by accident. I was writing for IBM’s DeveloperWorks at the time. I had purchased a Mac, and Macs at the time used PowerPC (not x86/Intel) processors. This processor had a completely different instruction set. So, because I had already written a book on assembly language with x86/Intel processors, I decided I would write some articles for DeveloperWorks on programming the PowerPC chip. You can still find this tutorial series on IBM’s website.

In any case, the PlayStation 3 was going to launch IBM’s new chip. A problem developed. Although they had written reference material for the chip, there were no easy-to-follow tutorials for the platform. My editor at DeveloperWorks asked me if I would dig through the reference material and put together some simple tutorials for launch. I agreed, and wrote an eight-part tutorial series on writing high-performance applications for their chip.

MM: Is that series still available?

JB: IBM has removed most of their tutorials on Cell programming, including mine. The articles are still available on the Internet Archive, so I’ll send you links to the tutorials in case any readers are interested [below]. Even though it would be hard to adapt these tutorials to work on present-day systems, some of the ideas might be interesting for someone thinking about them.

MM: So what was Sony’s response to the PlayStation 3 being used in Beowulf clusters?

JB: Sony actively encouraged it. There was a whole underground cluster programming scene that developed around the PlayStation 2, with which even the NCSA got involved.

If I recall correctly, Sony was originally against that use of the PlayStation 2 but eventually provided some support. Sony knew that the same thing would happen for the PlayStation 3, so they got ahead of the curve and sponsored work in that area. They paid Terra Soft Solutions to port Linux to the PlayStation 3, and had it ready to go on the release date. My tutorials were actually based on the Linux operating system, not their gaming platform.

So, Sony gave the push for using the PlayStation 3 (below right) in clusters, and many scientifically oriented applications arose. There were ports of a lot of the @home-style scientific applications to the Cell processor, actively encouraged and supported by Sony. While not based on the PlayStation 3, IBM’s Roadrunner supercomputer used Cell processors and was the number 1 supercomputer for quite some time.

Because of my tutorial series, I was asked to be involved in some of these projects. However, my schedule was pretty full. I had a full-time job as the director of development for a company called New Medio (later bought out by Rochester-based ITX). At the same time, I was going through seminary to get a master’s in theology. I also spent some time on my writing gig to make ends meet and pay off credit card debt from family medical issues. So my schedule was too full to contribute, though the projects sounded like a lot of fun.

MM: Any other stories you want to share?

JB: Well, there was the time I was kicked out of a Walmart. It’s a long story but kind of fun. When I was writing the articles for this series, the PlayStation 3 had not yet come out. Therefore, all of the development I was doing was on a simulator. Simulators are never exactly the same as the real thing, and I didn’t want to release my articles without testing on an actual PlayStation 3.

Because of certain non-disclosure agreements that IBM had with Sony, they couldn’t actually ship me a PlayStation 3. However, I wanted my articles to be available as soon as it came out. So I had planned on just buying one the day it came out, testing it, and releasing the articles. IBM also paid me additional money for the articles so that I could buy myself a PlayStation 3 console for testing.

However, I had not been in gaming in many years, so I wasn’t aware of how popular this thing would be. I figured if I showed up the night before to get the gaming console, I would be able to get one. Well, I was wrong. First of all, I showed up dressed like a hobo because I had basically planned on spending the night there (that was my first mistake). However, when I got there, there were already 100 people in line, and the Walmart was only going to have 10 consoles available.

So, I thought about it, and I figured that, since I didn’t actually need the console long-term, I could find someone who would share the console with me to our mutual benefit. So, I decided that I would explain my situation to the people at the front of the line and offer to pay for their console if they let me have the first 10 hours on it (which was what I estimated it would take to test out my articles).

I was surprised that they all said no, until one of them put into words what everyone had been thinking: “How do we know that you’re not going to steal it?” A fair question, especially given my disheveled appearance. So, to show that I was in fact a software writer, I decided I would go get a copy of my programming book and show that I was, indeed, an author.

However, while I was gone, the people at the front of the line contacted security, so, when I returned, I was thrown out of the Walmart altogether.

Anyway, my family likes to joke at my expense that I’m the only one in the family that’s ever been kicked out of Walmart.

MM: Thanks for joining us, and thanks for sharing your stories!

JB: Any time.

Further reading on the Cell Broadband Engine and related topics:

An introduction to Linux on the PLAYSTATION 3

Program the synergistic processing elements of the Sony PLAYSTATION 3

Meet the synergistic processing unit

Program the SPU for performance

Programming the SPU in C/C++

Smart buffer management with DMA transfers

How to make an SPE and existing code work together

Removing obstacles to speedy performance

Also of possible interest – an Internet radio interview I did at the time.