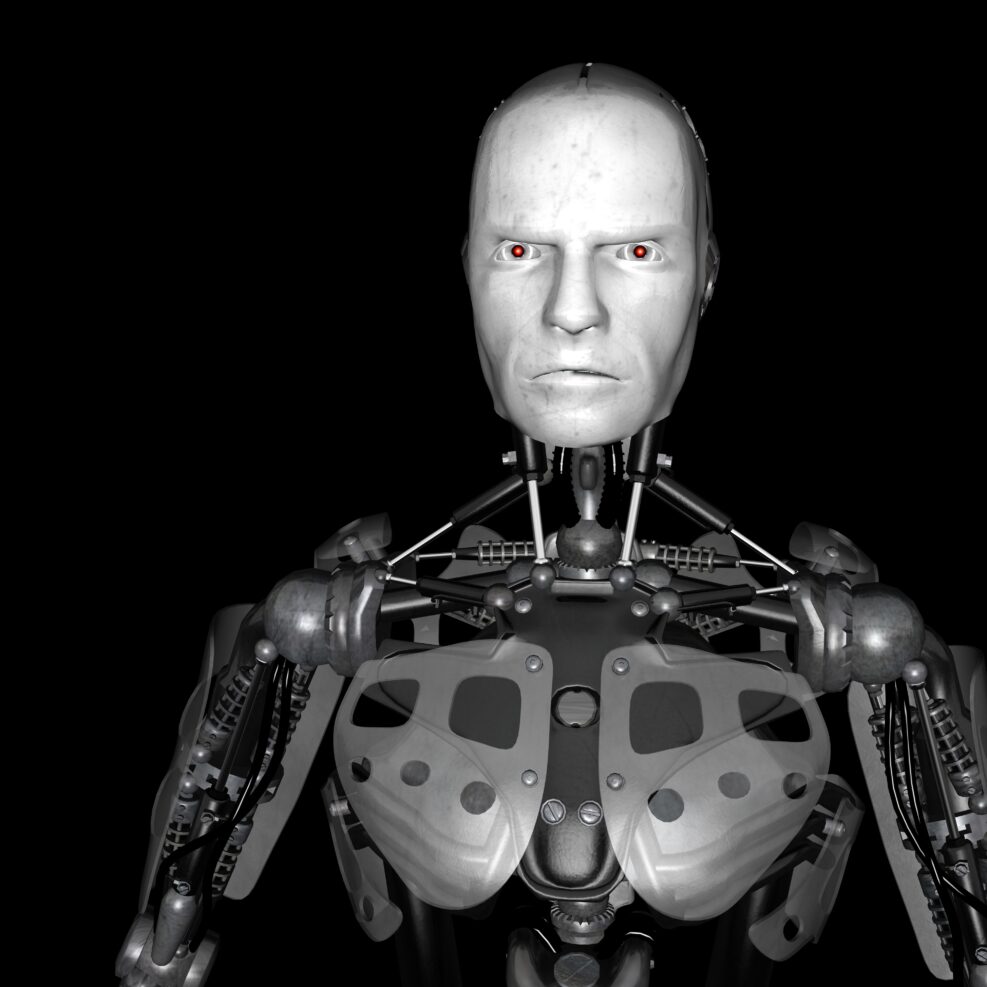

AI Is Not Nearly Smart Enough to Morph Into the Terminator

Computer engineering prof Robert J. Marks offers some illustrations in an ITIF think tank interview

In a recent podcast, Walter Bradley Center director Robert J. Marks spoke with Robert D. Atkinson and Jackie Whisman at the prominent AI think tank, the Information Technology and Innovation Foundation, about his recent book, The Case for Killer Robots, a plea for American military brass to see that AI is an inevitable part of modern defense strategies, to be managed rather than avoided. It may be downloaded free here. In this second part (here’s Part 1), the discussion (starts at 6:31) turned to what might happen if AI goes “rogue.” The three parties agreed that AI isn’t nearly smart enough to turn into the Terminator:

Jackie Whisman: Well, opponents of so-called killer robots, of course, argue that the technologies can’t be …