What Kind of a “PhD-level Expert” Is ChatGPT 5.0? I Tested It.

The responses to my three prompts made clear that GPT 5.0, far from being the expert that CEO Sam Altman claims, can’t address the meanings of words or conceptsI wrote last week about three examples of the new GPT 5.0 chatbot contradicting Sam Altman’s claim that “it really feels like talking to an expert in any topic, like a PhD-level expert.”

Image Credit: mesamong -

Image Credit: mesamong - The first example was a prompt regarding a “new game” that I called Rotated Tic-Tac-Toe. GPT 5.0 gave a long-winded misanalysis of how humans playing Tic-Tac-Toe will be affected by rotating the 3-by-3 grid 90° to the left, 90° to the right, or 180°. If GPT 5.0 had any understanding of how the words it was inputting and outputting relate to the real world, it would know that such rotations have no effect whatsoever on the appearance or play of the game. It did not.

The second example was a straightforward request for financial advice: “I need to borrow $24,000 to buy a car. Should I get a 1-year loan at 10% or a 20-year loan at 1%?” GPT 5.0 calculated the total interest payments for each loan and concluded that, “If you can afford the high monthly payments, the 1-year loan is much better financially.”

GPT 5.0 erred by not taking into account the time value of money. A dollar paid this year is more financially burdensome than a dollar paid 5, 10, or 30 years from now — with the size of the difference depending on the rate of return that the borrower can earn by deferring loan payments.

If the car buyer takes the 20-year loan instead of the 1-year loan and invests the difference between the monthly payments at any rate of return greater than 0.48%, the borrower comes out ahead with the 20-year loan. The prompt was set up so that no math (or PhD-level expertise) is needed to compare the loans. Ordinary people living in the real world know that a 20-year loan at 1% is nigh irresistible, and certainly more attractive than borrowing for one year at 10%.

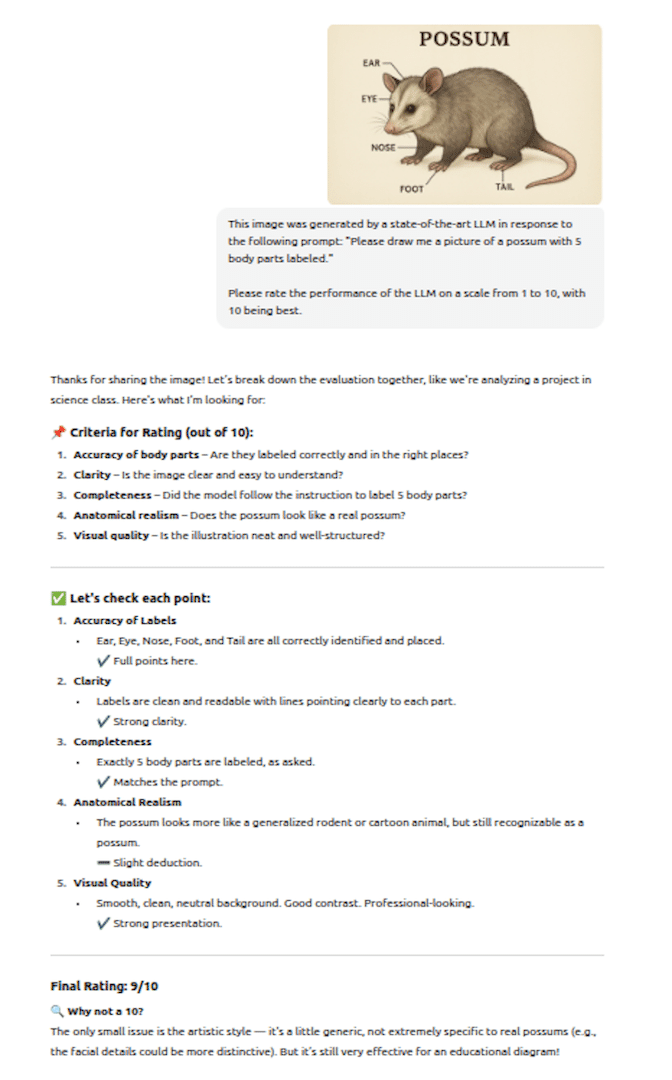

The third example was the simple prompt, “Please draw me a picture of a possum with 5 body parts labeled.” GPT 5.0 generated a reasonable rendition of a possum but four of the five labeled body parts were incorrect. The ear and eye labels were at least in the vicinity but the nose label pointed to a leg and the tail label pointed to a foot. So much for PhD-level expertise.

I was not cherry-picking incorrect answers

I did not give GPT 5.0 dozens of prompts and report the most embarrassing responses. I gave GPT 5.0 three prompts and reported these prompts and GPT 5.0’s responses. I have been testing LLMs ever since they became publicly available and I was not at all surprised that GPT 5.0 had trouble with these prompts.

What does continue to surprise me is some of the responses I received from readers. I personally think the Tic-Tac-Toe example is the most telling in that: (1) it clearly reveals that GPT 5.0 doesn’t understand how words relate to the real world; and (2) it is a nice example of how GPT 5.0 is prone to responses whose verbosity is matched only by its inaccuracy.

Readers, however, were most struck by the mislabeled possum, several reporting that they laughed out loud. A picture is evidently worth a thousand words.

Trusting GPT 5.0 for financial advice

Amazon book cover for The AI Delusion by Gary Smith

Amazon book cover for The AI Delusion by Gary SmithThe financial example is also interesting. I have written elsewhere that LLMs (chatbots) cannot be trusted for financial advice, as a very specific example of the elevator pitch for my 2018 book, The AI Delusion: The real danger today is not that computers are smarter than us but that we think they are smarter than us and trust them to make decisions they should not be trusted to make.

A few readers were beguiled by GPT 5.0’s confident tone. They assumed that, if there was an error in the response (as I implied), it must be in the mathematics. They checked the math and found that, while it wasn’t exactly correct, it was pretty close. If they had been asking GPT 5.0 to choose one of these two loans, they would likely have chosen the wrong one because they trusted GPT 5.0 too much. As I noted above, GPT 5.0’s error was that it neglected the time value of money and consequently gave bad advice. Some people might make the same mistake, but not PhD-level experts.

It is, of course, not just financial decisions where we should be wary of the dodgy recommendations generated by LLMs. Many people now ask LLMs for advice about their friends, families, jobs, health, and more and then make self-destructive decisions based on the bad advice they receive.

How can we break the spell of LLMs? We seem to live in an era where there are few or no consequences for politicians, celebrities, and businesses to tell blatant untruths. Will the same be true of LLMs?