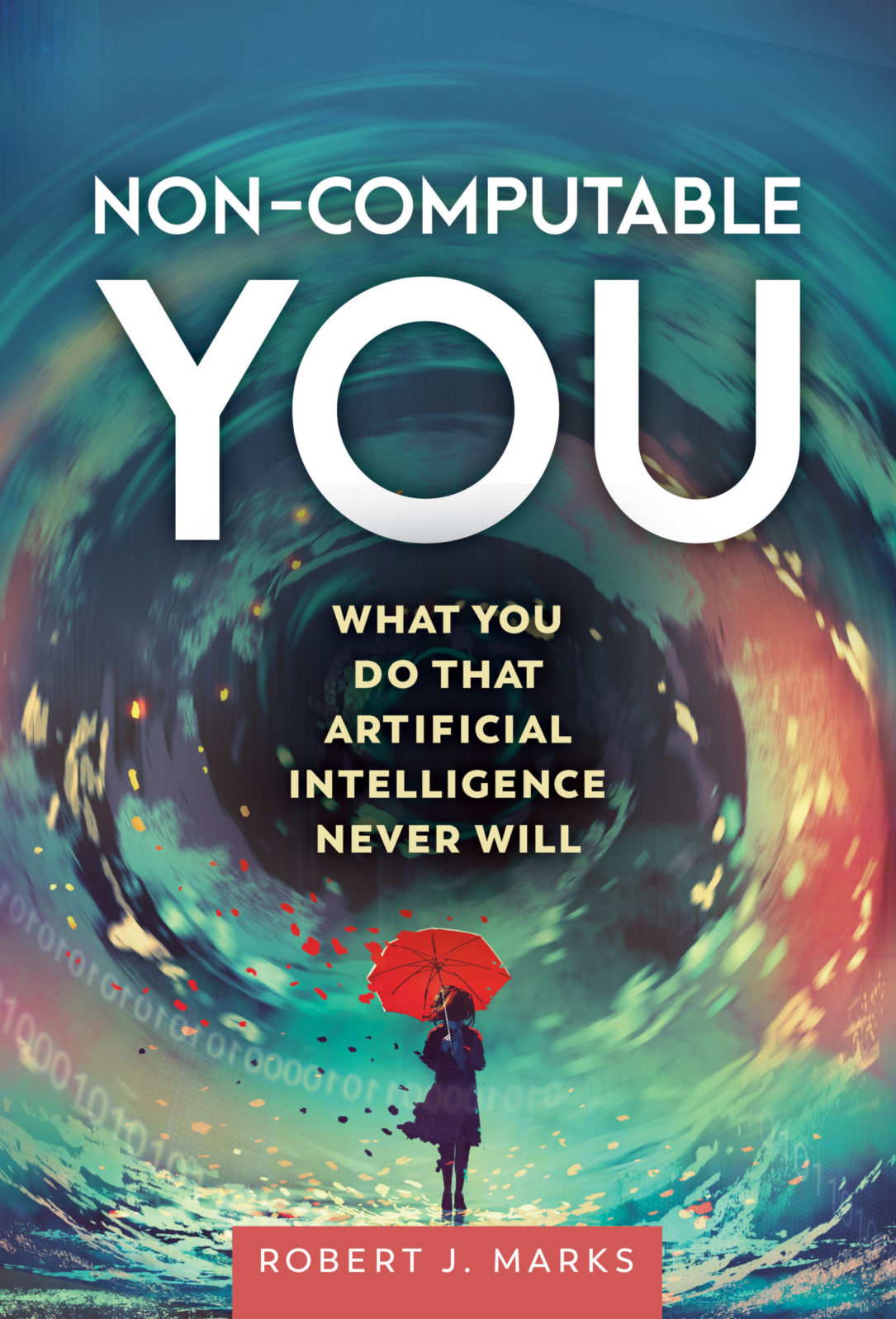

The Software of the Gaps: An Excerpt from Non-Computable You

In his just-published book, Robert J. Marks takes on claims that consciousness is emerging from AI and that we can upload our brains

Non-Computable You: What You Do That Artificial Intelligence Never Will (Discovery Institute Press, June 2022) is available here.

There are human characteristics that cannot be duplicated by AI. Emotions such as love, compassion, empathy, sadness, and happiness cannot be duplicated. Nor can traits such as understanding, creativity, sentience, qualia, and consciousness.

Or can they?

Extreme AI champions argue that qualia and, indeed, all human traits will someday be duplicated by AI. They insist that while we’re not there yet, the current development of AI indicates we will be there soon. These proponents are appealing to the Software of the Gaps, a secular cousin of the God of the Gaps. Machine intelligence, they claim, will someday have the proper code to duplicate all human attributes.

Impersonate, perhaps. But experience, no.

Mimicry versus Experience

AI will never be creative or have understanding. Machines may mimic certain other human traits but will never duplicate them. AI can be programmed only to simulate love, compassion, and understanding.

The simulation of AI love is wonderfully depicted by a human-appearing robot boy brilliantly acted by a young Haley Joel Osment in Steven Spielberg’s 2001 movie A.I. Artificial Intelligence. Before activation, the robot boy played by Osment is emotionless. But when his love simulation software is turned on, the boy’s immediate attraction to his adoptive mother is convincing, thanks to Osment’s marvelous acting skill. The robot boy is attentive, submissive, and full of snuggle-love.

But mimicking love is not love. Computers do not experience emotion. I can write a simple program to have a computer enthusiastically say “I love you!” and draw a smiley face. But the computer feels nothing. AI that mimics should not be confused with the real thing.
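To make the point concrete, here is a minimal sketch of such a program in Python. The function name `profess_love` and the text smiley are illustrative choices, not anything from the book. The program only assembles and prints a fixed string; nothing in it corresponds to a feeling.

```python
def profess_love() -> str:
    """Return a canned declaration of love followed by a text smiley.

    The function name and the smiley are hypothetical, chosen only
    for illustration. The output is a fixed string.
    """
    greeting = "I love you!"
    smiley = ":-)"  # a "drawn" smiley face, rendered as plain text
    return f"{greeting} {smiley}"


if __name__ == "__main__":
    # Prints the same message on every run, regardless of who is reading it.
    print(profess_love())
```

Every run produces the identical message, because the program does exactly what its programmer wrote and nothing more.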

Emergent Consciousness

Moreover, tomorrow’s AI, no matter what is achieved, will come from computer code written by human programmers. Programmers tap into their creativity when writing code. All computer code is the result of human creativity; the written code can never itself be a source of creativity. The computer will perform as it is instructed by the programmer.

But some hold that as code becomes more and more complex, human-like emergent attributes such as consciousness will appear. (“Emergent” means that an entity develops properties its parts do not have on their own — a sum greater than the parts can account for.) This is sometimes called “Strong AI.”

Those who believe in the coming of Strong AI argue that non-algorithmic consciousness will be an emergent property as AI complexity ever increases. In other words, consciousness will just happen, as a sort of natural outgrowth of the code’s increasing complexity.

Such unfounded optimism is akin to that of a naive young boy standing in front of a large pile of horse manure. He becomes excited and begins digging into the pile, flinging handfuls of manure over his shoulders. “With all this horse poop,” he says, “there must be a pony in here somewhere!”

Strong AI proponents similarly claim, in essence, “With all this computational complexity, there must be some consciousness here somewhere!” There is — the consciousness residing in the mind of the human programmer. But consciousness does not reside in the code itself, and it doesn’t emerge from the code, any more than a pony will emerge from a pile of manure.

Like the boy flinging horse poop over his shoulder, Strong AI proponents, no matter how insistently optimistic, will be disappointed. There is no pony in the manure; there is no consciousness in the code.

Uploading a Brain

Are there any similarities between human brains and computers? Sure. Humans can perform algorithmic operations. We can add a column of numbers like a computer, though not as fast. We learn, recognize, and remember faces, and so can AI. AI, unlike me, never forgets a face.

Because of these types of similarities, some believe that once technology has further advanced, and once enough memory storage is available, uploading the brain should work. “Whole Brain Emulation” (also called “mind upload” or “brain upload”) is the idea that at some point we should be able to scan a human brain and copy it to a computer.[1]

The deal breaker for Whole Brain Emulation is that much of you is non-computable. This fact nixes any ability to upload your mind into a computer. For the same reason that a computer cannot be programmed to experience qualia, our ability to experience qualia cannot be uploaded to a computer. Only our algorithmic part can be uploaded. And an uploaded entity that is totally algorithmic, lacking the non-computable, would not be a person.

So don’t count on digital immortality. There are other more credible roads to eternal life.

[1] Becca Caddy, “Will You Ever Be Able to Upload Your Entire Brain to a Computer?” Metro, June 5, 2019. Also see Selmer Bringsjord, “Can We Upload Ourselves to a Computer and Live Forever?,” April 9, 2020, interview by Robert J. Marks, Mind Matters News, podcast, 22:14.

Here are all of the excerpts in order:

Why you are not — and cannot be — computable. A computer science prof explains in a new book that computer intelligence does not hold a candle to human intelligence. In this excerpt from his forthcoming book, Non-Computable You, Robert J. Marks shows why most human experience is not even computable.

The Software of the Gaps: An excerpt from Non-Computable You. In his just-published book, Robert J. Marks takes on claims that consciousness is emerging from AI and that we can upload our brains. He reminds us of the tale of the boy who dug through a pile of manure because he was sure that … underneath all that poop, there MUST surely be a pony!

Marks: Artificial intelligence is no more creative than a pencil. You can use a pencil — but the creativity comes from you. With AI, clever programmers can conceal that fact for a while. In this short excerpt from his new book, Non-Computable You, Robert J. Marks discusses the tricks that make you think chatbots are people.

Machines with minds? The Lovelace test vs. the Turing test. The answers computer programs give sometimes surprise me too — but they always result from their programming. When it comes to assessing creativity (and therefore consciousness and humanness), the Lovelace test is much better than the Turing test.

and

AI: The shadow of Frankenstein lurks in the Uncanny Valley. The fifth and final excerpt from Non-Computable You (2022), from Chapter 6, focuses on the scarier AI hype. Mary Shelley’s “Frankenstein” monster (1818) wasn’t strictly a robot. But she popularized the idea, now AI hype, of creating a human-like being in a lab.