How Do We Know Lincoln Contained More Information Than His Bust?

Life forms strive to be more of what they are. Grains of sand don’t. You need more information to strive than to just exist.

In “Define information before you talk about it,” neurosurgeon Michael Egnor interviewed engineering prof Robert J. Marks on the way information, not matter, shapes our world (October 28, 2021). In the first portion, Egnor and Marks discussed questions like: Why do two identical snowflakes seem more meaningful than one snowflake? Then they turned to the relationship between information and creativity. Is creativity a function of more information? Or is there more to it? Does Mount Rushmore have no more information than Mount Fuji? Does human intervention make a measurable difference? That’s specified complexity.

Putting the idea of specified complexity to work, how do we measure meaningful information? What if an information-rich entity were scattered as molecules through the universe, as opposed to integrated as a live human being?

This portion begins at 29:49 min. A partial transcript and notes, Show Notes, and Additional Resources follow.

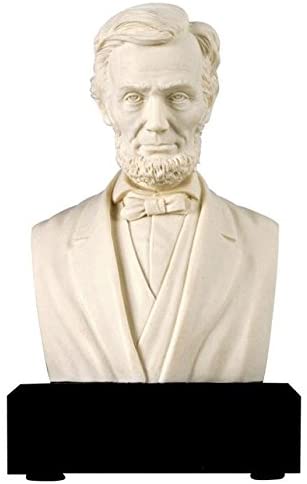

Michael Egnor: If you have three different systems, a bowling ball, a bust of Lincoln, and the atoms that would make up a bowling ball or a bust of Lincoln — reduced to individual atoms and just distributed throughout the universe, just dust — which has the most information?

Robert J. Marks: We are talking about Kolmogorov information, which is description length, in terms of the computer program required to duplicate the object. So a computer program will be longer for a 3D printer to print a bust of Lincoln than a bowling ball.

Now, there is the open question: Do you want to get as far as duplication? Do you want to get down to the atom? I would say probably not. You’re just interested in the surface of the bust of Lincoln and the surface of the bowling ball.
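Note: Kolmogorov complexity itself cannot be computed exactly, but the size of a compressed file gives a rough, computable upper bound on description length. The sketch below is an illustration under stated assumptions, not anything presented in the interview: the helper names `description_bits` and `sample_profile` are hypothetical, zlib stands in for “the shortest program,” and a surface is reduced to a ring of one-byte radius samples.

```python
import math
import random
import zlib


def description_bits(data: bytes) -> int:
    """Compressed size in bits: an upper-bound proxy for description length."""
    return 8 * len(zlib.compress(data, 9))


def sample_profile(radius_fn, n: int = 2048) -> bytes:
    """Quantize a surface profile r(theta) to one byte per sample."""
    samples = []
    for i in range(n):
        r = round(radius_fn(2 * math.pi * i / n))
        samples.append(max(0, min(255, r)))  # clamp into the byte range
    return bytes(samples)


rng = random.Random(0)  # fixed seed so the run is repeatable

# Assumption: a bowling ball's outline is a constant radius.
ball = sample_profile(lambda theta: 100)
# Assumption: a driveway rock is the same outline with random dimples.
rock = sample_profile(lambda theta: 100 + rng.randint(-20, 20))
# Unstructured noise, for comparison.
noise = bytes(rng.getrandbits(8) for _ in range(2048))

for name, surface in [("ball", ball), ("rock", rock), ("noise", noise)]:
    print(name, description_bits(surface), "bits")
```

On this measure the smooth ball needs the shortest description and unstructured noise the longest. Complexity by itself therefore ranks a dimpled rock above a smooth shape and says nothing about which object is meaningful.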

Michael Egnor: But wouldn’t something with maximal entropy have more of that kind of information than a bust of Lincoln?

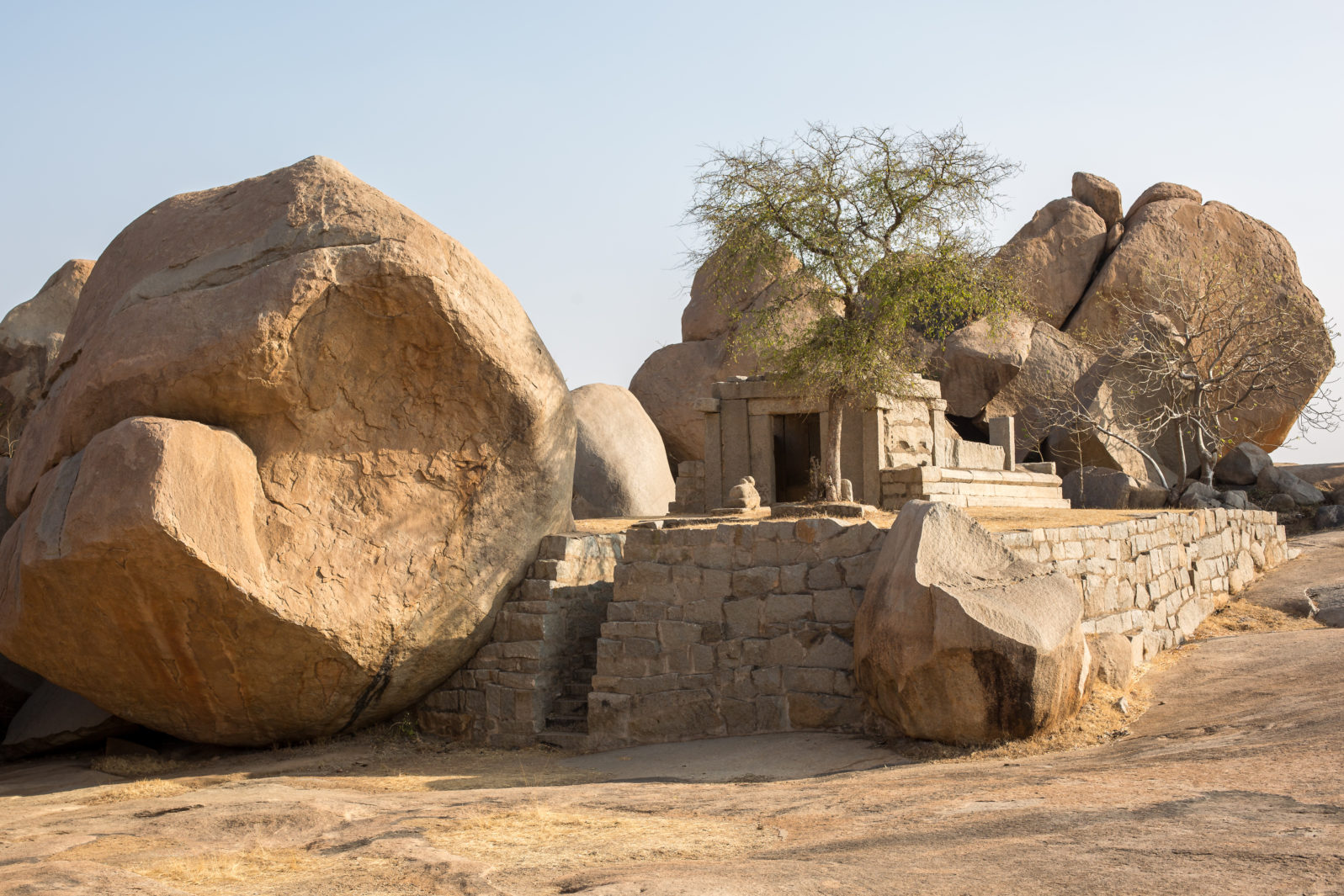

Robert J. Marks: Yes, and this is where the rub comes in. Let’s have a bust of Lincoln versus say, a rock that we picked out in the driveway.

Now this rock might have a bunch of dimples and indentations and it might have the same complexity that the bust of Lincoln does. But that’s only one part of specified complexity. The other one is specification. Why do we recognize that there’s more meaning in a bust of Lincoln than there is in a rock that you pick out of your driveway, even though the computer programs that generate them are of the same length?

In terms of experience, the bust of Lincoln is more meaningful. And it can be folded into the idea of Kolmogorov complexity in order to come up with algorithmic specified complexity. It uses the idea of conditional Kolmogorov complexity. And the idea here is that you bring into the interpretation of the meaning your experience.

Consider the text in Japanese that I can’t read, versus a person who is fluent in Japanese. Well, for them, it would have more information, because they have the context to interpret it.

So specified complexity has two components, complexity and specification. Those two things combined give the overall measure of algorithmic specified complexity, which measures the meaning of an object.

Michael Egnor: From the standpoint of information theory, how does a bust of Lincoln, or a statue of Lincoln, differ from Lincoln?

Note: In the trailer below, Daniel Day-Lewis portrays Abraham Lincoln in Steven Spielberg’s 2012 film. This is several layers of massive amounts of information overlaid on one another:

Robert J. Marks: Oh, well, I think they’re two different total worlds. For the bust of Lincoln we’re just only interested in the outside, the external surface. We’re not really interested in what goes on inside. I believe we’re constrained with whatever the physics of the 3D printer is …

Michael Egnor: I kind of like to take the observer out of it. Let’s not consider so much what we’re interested in, but rather, what the actual differences are.

Let’s say that you made the statue in such a way that you also had a statue of Lincoln’s internal organs. You tried to make it as detailed as you could. How would the most detailed statue that you could imagine differ from Lincoln himself, information theory-wise?

Robert J. Marks: Well, I think that in terms of meaning, Shannon information, Kolmogorov information, physical information… none of those address meaning. The only way to address meaning of which I am aware in information theory is to place it into context, into something which is meaningful. And that context must come through experience…

So in that sense, it is all based on context. I’m not sure, for example, how an alien — a bulbous blob sort of alien with no form — would look at the bust of Lincoln and think that it had any meaning. It would have to have the context of knowing what humans look like. And if it had no idea what humans looked like, it would just sit there and say: “This is just like a moon rock.”

Michael Egnor: As I mentioned in the past, I’m fascinated by the traditional Thomistic and scholastic definition of living things: they are things that strive to perfect themselves. And what Thomas Aquinas meant by that is that there are purposes built into nature, final causes — what he called teleology broadly. And those purposes provide goals for things in nature, that things in nature tend to change in the direction of those goals.

But what is unique about living things is that they act of their own accord to achieve their goals. Whereas nonliving things are acted upon, but don’t act of their own accord. An example would be: No matter how detailed a statue of Lincoln you make, the statue wouldn’t be trying to make itself a better statue of Lincoln.

Whereas Lincoln tried to make himself a better man every day. What made Lincoln alive was that he was always trying to be a better Lincoln. Better in terms of more fully realized or perfectly himself. As we all do, there’s always a striving in living things. And there’s no striving in inanimate things. Statues don’t try to become better statues. They can only be made better by something external, but they don’t try it themselves.

Robert J. Marks: What you are describing, this intent to better yourself, is non-algorithmic. I would maintain that it is beyond the scope of naturalism, beyond the scope of information theory to capture, at least as I know it right now.

Michael Egnor: The other connection that I think is absolutely fascinating here … is that there is a remarkable analog to this idea of things in nature, and particularly living things, striving to perfect themselves, striving towards a goal. And that is intentionality, which is a technical philosophical term that refers to the fact that all thoughts are directed at things.

Every thought you could have is about something. You can’t really think anything that isn’t about something. Every thought has something it points to. And the implication that the Scholastic philosophers drew was that the tendency in nature for things to go to ends, to go to goals, and particularly the tendency of living things to perfect themselves, is the kind of thing that has to have a mind behind it.

That is, there’s no striving unless there’s a more profound organizing mind that creates the striving. And so, the ancient philosophers connected the idea of intentionality in the human mind to the idea of teleology and final cause in nature. And that was one of the reasons behind, for example, Aquinas’s Fifth Way of demonstrating the existence of God.

Note: Aquinas’ Fifth Way: The Fifth Way is the teleological proof — inanimate things in nature follow patterns of behavior. Rocks, when we drop them, fall down. They don’t fall up. Electrons go around protons. They don’t just shoot off into space for no reason. Nature is full of natural laws that have consistency. But the things that obey these laws don’t have minds. They’re not capable of knowing what they’re supposed to do. A rock doesn’t know it’s supposed to go down when you let it go instead of going up. And the fact that inanimate things don’t know what to do but do it anyway implies that there is a mind guiding things. This is kind of an intelligent design argument, that nature shows this elegant example of design of purpose.

Robert J. Marks: Well, let me ask you a question. We’re all familiar with robots and robotic mice finding themselves a path through a maze in order to get some sort of reward at the end… But couldn’t these robots be designed to improve themselves by getting better and better?

Michael Egnor: Oh sure. But the design is externally imposed on them. There’s no inherent tendency. If you take a robot, and you just plop it down in the middle of a desert, and watch it for a while, if it does do things, it won’t do them for long. That is, robots are conglomerates of silicon and copper, steel, and things like that. And you can leave silicon and copper and steel out in your backyard or on your desktop for as long as you want. And it will never do anything that even remotely resembles a robot. The only way that silicon, copper and steel become robots is if a human being assembles and programs them and makes them do it.

Note: Some argue that artificial intelligence (AI) can evolve into a superintelligence all by itself. But, as Eric Holloway points out, “it is the fundamental insights that are necessary to drive machine learning and artificial intelligence forward. The only known source of such fundamental insights is humans.” Why? Goodhart’s Law is in play: “Once a policy becomes a target, it loses all information.” Which just means that a higher (anyway, outside) intelligence must always evaluate the performance and the direction. He adds, “There is no way to achieve fundamental insights by optimizing for objective functions. Even making novelty itself the objective function cannot work due to the limits placed by Turing’s halting problem and Kolmogorov complexity.”

Robert J. Marks: So their intentionality is external.

Michael Egnor: And that external teleology, or external intentionality, is what characterizes inanimate objects. They can’t make themselves better in any way unless some intelligent agent comes along and pushes them, and makes them do it. Whereas an intelligent agent can make itself better without being pushed. Or I should say, not even intelligent, a living thing makes itself better. Bacteria make themselves better. And they’re not intelligent in any sense that we think of intelligence. But bacteria make themselves better. But grains of sand that are the same size as a bacterium don’t make themselves better.

Next: Why AI can’t filter out hate on the internet

Here are all the episodes in the series. Browse and enjoy:

- How information becomes everything, including life. Without the information that holds us together, we would just be dust floating around the room. As computer engineer Robert J. Marks explains, our DNA is fundamentally digital, not analog, in how it keeps us being what we are.

- Does creativity just mean Bigger Data? Or something else? Michael Egnor and Robert J. Marks look at claims that artificial intelligence can somehow be taught to be creative. The problem with getting AI to understand causation, as opposed to correlation, has led to many spurious correlations in data driven papers.

- Does Mt Rushmore contain no more information than Mt Fuji? That is, does intelligent intervention increase information? Is that intervention detectable by scientific methods? With two DVDs of the same storage capacity, one holding random noise and the other a film (Braveheart, for example), how do we detect a difference?

- How do we know Lincoln contained more information than his bust? Life forms strive to be more of what they are. Grains of sand don’t. You need more information to strive than to just exist. Even bacteria, not intelligent in the sense we usually think of, strive. Grains of sand, the same size as bacteria, don’t. Life entails much more information.

- Why AI can’t really filter out “hate news.” As Robert J. Marks explains, the No Free Lunch theorem establishes that computer programs without bias are like ice cubes without cold. Marks and Egnor review worrying developments from large data harvesting algorithms — unexplainable, unknowable, and unaccountable — with underestimated risks.

- Can wholly random processes produce information? Can information result, without intention, from a series of accidents? Some have tried it with computers… Dr. Marks: We could measure in bits the amount of information that the programmer put into a computer program to get a (random) search process to succeed.

- How even random numbers show evidence of design. Random number generators are actually pseudo-random number generators because they depend on designed algorithms. The only true randomness, Robert J. Marks explains, is quantum collapse. Claims for randomness in, say, evolution don’t withstand information theory scrutiny.

Show Notes

- 00:00:09 | Introducing Dr. Robert J. Marks

- 00:01:02 | What is information?

- 00:06:42 | Exact representations of data

- 00:08:22 | A system with minimal information

- 00:09:31 | Information in nature

- 00:10:46 | Comparing biological information and information in non-living things

- 00:11:32 | Creation of information

- 00:12:53 | Will artificial intelligence ever be creative?

- 00:17:40 | Correlation vs. causation

- 00:24:22 | Mount Rushmore vs. Mount Fuji

- 00:26:32 | Specified complexity

- 00:29:49 | How does a statue of Abraham Lincoln differ from Abraham Lincoln himself?

- 00:37:21 | Achieving goals

- 00:38:26 | Robots improving themselves

- 00:43:13 | Bias and concealment in artificial intelligence

- 00:44:42 | Mimetic contagion

- 00:50:14 | Dangers of artificial intelligence

- 00:54:01 | The role of information in AI evolutionary computing

- 01:00:15 | The Dead Man Syndrome

- 01:02:46 | Randomness requires information and intelligence

- 01:08:58 | Scientific critics of Intelligent Design

- 01:09:40 | The controversy between Darwinian theory and ID theory

- 01:15:07 | The Anthropic Principle

Additional Resources

- Robert J. Marks at Discovery.org

- Michael Egnor at Discovery.org

- Claude Shannon at Encyclopædia Britannica

- Andrey Kolmogorov at Wikipedia

- Spurious Correlations website

- Chapter 7 of: R.J. Marks II, W.A. Dembski, W. Ewert, Introduction to Evolutionary Informatics, (World Scientific, Singapore, 2017).

- Winston Ewert, William A. Dembski and Robert J. Marks II “Algorithmic Specified Complexity in the Game of Life,” IEEE Transactions on Systems, Man and Cybernetics: Systems, Volume 45, Issue 4, April 2015, pp. 584-594.

- Winston Ewert, William A. Dembski and Robert J. Marks II “On the Improbability of Algorithmically Specified Complexity,” Proceedings of the 2013 IEEE 45th Southeastern Symposium on Systems Theory (SSST), March 11, 2013, pp. 68-70.

- Winston Ewert, William A. Dembski and Robert J. Marks II “Measuring meaningful information in images: algorithmic specified complexity,” IET Computer Vision, 2015, Vol. 9, #6, pp. 884-894.