Evolution And Artificial Intelligence Face The Same Basic Problem

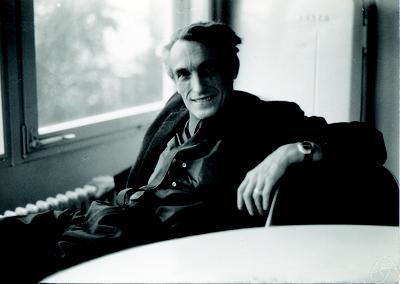

In 1966, an unusual symposium was hosted at the Wistar Institute of Anatomy and Biology at the University of Pennsylvania. Its topic was “Mathematical Challenges to the Neo-Darwinian Theory of Evolution,” and mathematicians and engineers there presented what they saw as fundamental problems with the theory of evolution. One of the mathematicians, Marcel-Paul Schutzenberger (1920–1996, pictured), worked closely with Noam Chomsky (1928–) at the intersection of linguistics and computer science.

Schutzenberger’s fundamental objection to many claims about evolution was that DNA, as modified by mutations, produces a very simple kind of language. Organisms and the environments they live in, on the other hand, form a very complex domain with far-ranging interactions. It seemed implausible to Schutzenberger that such a simple structure could match the complexity of the real world closely enough for natural selection acting on purely random mutations to produce highly complex new organisms.

Think of the word ladder game, where we transform one word into another by changing only one letter at a time. The rule is, each time we change a letter, the new sequence must itself be a valid word. As an example, here is a word ladder that takes us from the word CAT to the word DOG.

- CAT

- COT

- COG

- DOG
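The rule of the game can be treated as a graph search: each valid word is a node, and two words are linked when they differ in exactly one letter. The minimal Python sketch below assumes a tiny, hypothetical dictionary `WORDS` standing in for real English (a practical solver would load a full word list). It uses breadth-first search to find a shortest ladder and returns `None` when no ladder exists within that dictionary.

```python
from collections import deque
from string import ascii_uppercase

def neighbors(word, dictionary):
    """Yield every valid word reachable by changing exactly one letter."""
    for i, original in enumerate(word):
        for letter in ascii_uppercase:
            if letter != original:
                candidate = word[:i] + letter + word[i + 1:]
                if candidate in dictionary:
                    yield candidate

def word_ladder(start, goal, dictionary):
    """Breadth-first search for a shortest ladder; None if no ladder exists."""
    if len(start) != len(goal):
        return None
    parents = {start: None}          # remembers how each word was reached
    queue = deque([start])
    while queue:
        word = queue.popleft()
        if word == goal:
            path = []                # walk the parent links back to start
            while word is not None:
                path.append(word)
                word = parents[word]
            return path[::-1]
        for nxt in neighbors(word, dictionary):
            if nxt not in parents:
                parents[nxt] = word
                queue.append(nxt)
    return None

# A tiny, hypothetical dictionary used only for illustration.
WORDS = {"CAT", "COT", "COG", "DOG", "CUT", "HAT", "HOT", "PIG"}

print(word_ladder("CAT", "DOG", WORDS))  # → ['CAT', 'COT', 'COG', 'DOG']
print(word_ladder("CAT", "PIG", WORDS))  # → None (PIG has no one-letter neighbors here)
```

Whether a ladder exists depends entirely on which words the dictionary contains; the search itself only ever steps between valid words.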

Changing a three-letter word into another three-letter word is easy. But transforming longer words rapidly becomes more difficult, until it becomes impossible. For example, there is no such transformation from TRANSMUTATION to PERAMBULATION.

And that was Schutzenberger’s point with respect to evolution. There is no ladder for randomness to find. Yet evolution, as described in approved textbooks, is more like turning TRANSMUTATION into PERAMBULATION than it is like turning CAT into DOG.

So, to justify the theory of evolution, biologists must explain the pathway from the simplest organism to the highly complex organisms of today.

It is not a scientific argument merely to assume that such a transformation is possible, especially when the word ladder game shows how quickly a transition becomes impossible when we are only changing one word into another. That task is many orders of magnitude (a great understatement!) simpler than transforming one DNA sequence into another.

Schutzenberger offered the example of computer programs, which are extremely brittle, even more so than English words. A single character out of place can render an entire computer program invalid and inoperable. His question is simple: What is it about DNA and evolution that makes the process so much more productive and robust than mutating computer programs or human languages? It is even more mysterious if the process makes its modifications “randomly,” in other words, without any information about the environment or about what would benefit the organism. As we know, the debate still rages today over whether evolution could even have happened in the way it is supposed to have happened.
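The brittleness of programs can be probed directly. The Python sketch below takes a small, hypothetical function as its "program," replaces each character in turn with every letter, digit, punctuation mark, and space, and uses the built-in `compile()` to check whether each result is still syntactically valid Python. Compiling is a much lower bar than working: a mutant that still compiles may compute something different or fail when run, so this count overstates how many single-character changes are harmless.

```python
import string

# A small, hypothetical example program; any short source text would do.
SOURCE = "def add(a, b):\n    return a + b\n"

# Replacement characters tried at every position (an illustrative choice).
ALPHABET = string.ascii_letters + string.digits + string.punctuation + " "

def single_char_mutants(source, alphabet):
    """Yield every variant of source with exactly one character replaced."""
    for i, original in enumerate(source):
        for ch in alphabet:
            if ch != original:
                yield source[:i] + ch + source[i + 1:]

def still_compiles(source):
    """True if the text is still syntactically valid Python (not necessarily correct)."""
    try:
        compile(source, "<mutant>", "exec")
    except (SyntaxError, ValueError):
        return False
    return True

mutants = list(single_char_mutants(SOURCE, ALPHABET))
valid = sum(still_compiles(m) for m in mutants)
print(f"{valid} of {len(mutants)} single-character mutants still compile")
```

Some mutants survive, for example renaming one occurrence of `a` to `x`, but those typically break at runtime instead (here with a `NameError`), which is exactly the kind of failure a syntax check cannot see.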

Interestingly, the same question applies to artificial intelligence.

The language of the computer consists of ones and zeros, analogous to the four GATC* letters of DNA, transformed through various computational operations. This is the heart of artificial intelligence—operations on ones and zeros. These operations occur without any insight into the environment around them. Thus any information that enters the artificial intelligence can only be understood if it is anticipated by the artificial intelligence’s programming. So, Schutzenberger asked, if we have a mechanical process with no direct insight into its environment, how can it hope to learn from its environment anything beyond what has already been programmed into its source code?

During Schutzenberger’s time, some artificial intelligence researchers proposed that randomness could solve that problem. Randomness could add variation to the hard-coded rules of artificial intelligence, just as it might jostle biological systems into evolving new genes.

There was a good reason that artificial intelligence experts pinned their hopes on randomness as the fundamental source of new information. It is well known, from Turing’s halting problem and Kolmogorov complexity, that it is impossible to increase the net information of a deterministic system, such as a computer program, by running it.

Thus, Schutzenberger was incredulous that a random process with no insight into the environment could somehow increase information about that environment within evolving DNA sequences and/or artificial intelligence programs. By what mechanism can randomness “know” anything?

To understand Schutzenberger’s incredulity, let’s go back to our word ladder game. What if, instead of deliberately changing CAT to COT in the expectation that this gets us closer to DOG, we try random letters until we hit upon success? Obviously, that approach would take a great deal more work. Additionally, even if a random mutation does turn CAT into another valid word, that word is unlikely to get us any closer to DOG.
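Blind variation in the word ladder can be sketched in a few lines of Python. The sketch assumes a tiny, hypothetical dictionary in place of real English; the function name `random_ladder`, the `max_tries` limit, and the fixed `seed` are illustrative choices. Each proposal changes a random position to a random letter and is kept only if the result is a valid word. Nothing in the proposal step uses any information about DOG; the walk simply stops if it happens to land there.

```python
import random
from string import ascii_uppercase

# A tiny, hypothetical dictionary used only for illustration.
WORDS = {"CAT", "COT", "COG", "DOG", "CUT", "HAT", "HOT"}

def random_ladder(start, goal, dictionary, max_tries=10_000, seed=0):
    """Blind search: propose a random letter at a random position, keep it only
    if the result is a valid word, and stop at the goal or when tries run out.
    Returns (ladder_of_accepted_words, number_of_proposals_made)."""
    rng = random.Random(seed)
    word, ladder = start, [start]
    if word == goal:
        return ladder, 0
    for tries in range(1, max_tries + 1):
        i = rng.randrange(len(word))
        candidate = word[:i] + rng.choice(ascii_uppercase) + word[i + 1:]
        if candidate != word and candidate in dictionary:
            word = candidate          # accepted: the change produced a real word
            ladder.append(word)
            if word == goal:
                return ladder, tries
    return ladder, max_tries

ladder, tries = random_ladder("CAT", "DOG", WORDS)
reached = ladder[-1] == "DOG"
print(f"reached DOG: {reached}; {len(ladder) - 1} accepted steps after {tries} proposals")
```

Whatever the seed, a successful walk can never beat the three-step ladder from CAT to DOG, and in this dictionary the chance of taking that direct route is only 1 in 24; most walks take detours or revisit words, with many rejected proposals along the way.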

The word ladder illustrates the same problem faced by both evolution and artificial intelligence. Without knowledge about the goal and how to get there, it rapidly becomes first difficult and then completely impossible to reach the goal. Once we add randomness to the picture, everything becomes even worse. And it’s hard to get worse than impossible!

- Note 1: The image of Marcel-Paul Schutzenberger (1920–1996), taken in 1972, is by Konrad Jacobs, Erlangen. Copyright MFO – Mathematisches Forschungsinstitut Oberwolfach, CC BY-SA 2.0 de.

- Note 2: The four bases that make up DNA are guanine, adenine, thymine, and cytosine, hence the abbreviation GATC.
