Why Information Theory Is Like a Good Run

Information theory can help us understand a wide range of fields besides computers

Information theory is a deep field that is responsible for our modern internet and satellite TV. The field was pioneered by Claude Shannon to measure how much information we can communicate. But besides powering the information revolution, information theory is also widely applicable elsewhere. Once you understand the basic intuition, you can see applications popping up all over the place. To prove the point, I’ll show how we can apply information theory to gain insight into the very low-tech world of running.

I’ve been running off and on for many years, and I’ve noticed that information theory describes a good run. First of all, what is a good run? A good run is when your body feels as if it is just flowing. The effort involved feels minimal, breathing is regular, strides follow a steady cadence, and the whole body moves as one in an oscillating rhythm. So what is a bad run? While there are only a few ways to have a good run, there are many ways to have a bad run:

● Irregular breathing

● Rolling on the inside of the foot

● Keeping arms too close

● Not swinging arms

● Swinging arms side to side

● Stepping too high or too low

● Leaning too far forward or back

What all these problems have in common is disorder. In information theory terms, we can say that a bad run has high entropy (high disorder). On the other hand, per the description above, a good run has low entropy.

Entropy is the core idea of information theory. It is a way of counting how many options we have. The idea is to measure how much information can be communicated over a communications channel. With more options, more information can be transmitted, and vice versa.

More options result in higher entropy and fewer options result in lower entropy.
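To make the "counting options" idea concrete, here is a minimal sketch that computes Shannon entropy in bits from a list of observations. The stride labels and the two example runs are made up for illustration; they are not measurements from any real runner.

```python
import math
from collections import Counter

def shannon_entropy(observations):
    """Entropy, in bits, of the empirical distribution of the observations."""
    counts = Counter(observations)
    total = len(observations)
    # Sum p * log2(1/p) over every distinct option that was observed.
    return sum((c / total) * math.log2(total / c) for c in counts.values())

# Hypothetical labels, one per stride (an assumption for illustration only).
steady_run = ["ideal"] * 8
sloppy_run = ["ideal", "too_high", "too_low", "lean_forward",
              "lean_back", "arms_wide", "arms_tight", "foot_roll"]

print(shannon_entropy(steady_run))  # one option only: 0.0 bits
print(shannon_entropy(sloppy_run))  # eight equally likely options: 3.0 bits
```

A run that always takes the same option carries zero entropy, while a run that spreads evenly over eight options carries three bits, since two to the third power is eight.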

When we run, our bodies experience a wide range of movements, swinging legs and arms, turning our abdomen left and right, and taking deep breaths. When performing these movements, our body parts can deviate in many ways from the ideal paths. Thus we have a situation with many options. When our body parts are making many of these deviations, then we are taking many of these options, and the entropy of our movement is high.

On the other hand, when our movement is regular, then it deviates little and the number of options taken, and thus the entropy, is low.

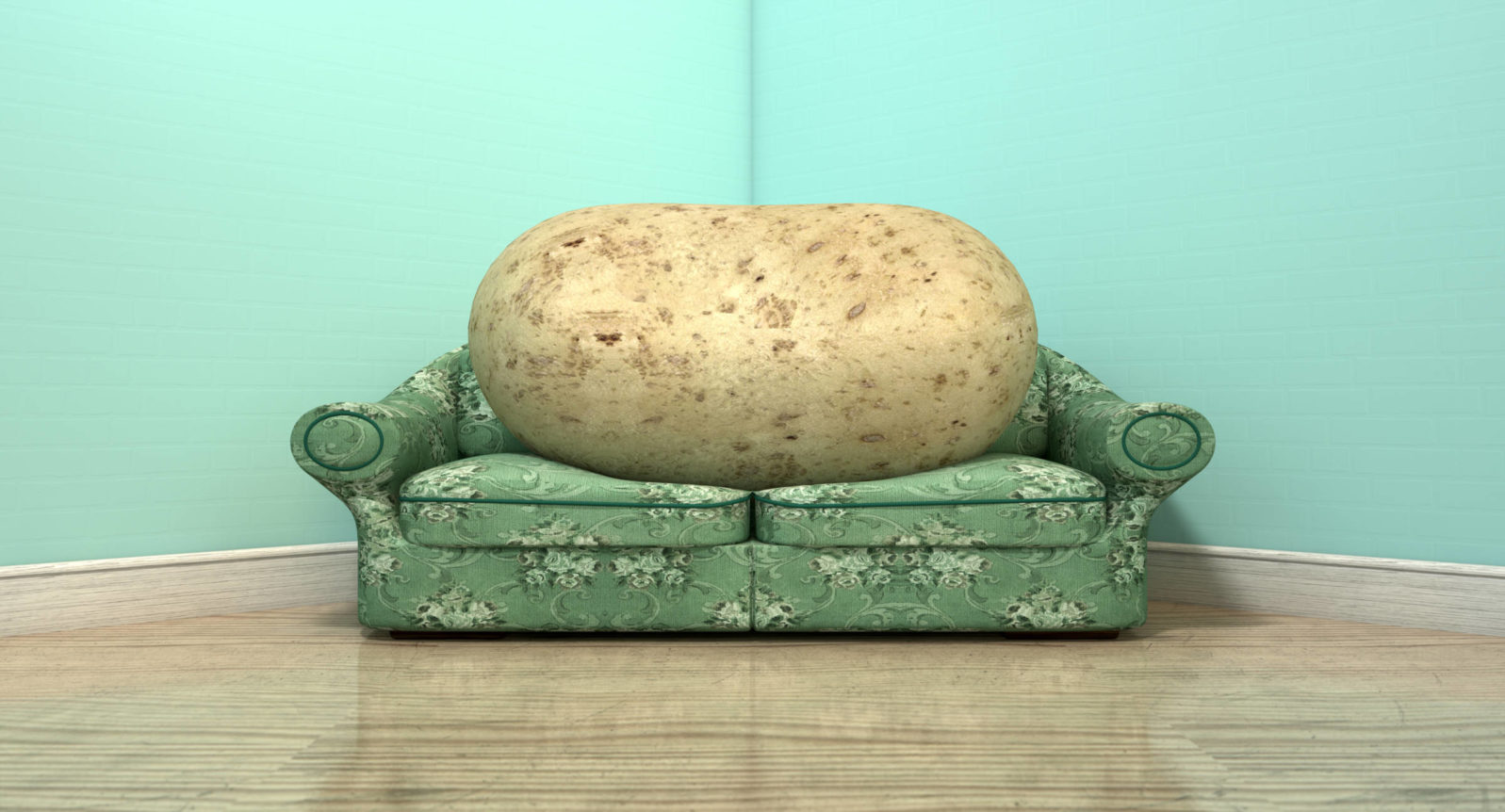

Couch Potato On Old Sofa Photo by

Based on this analysis, we might be tempted to say that a good run is best quantified by its entropy. However, there is an objection to this straightforward application of entropy, one that you may have already thought of.

The objection is this: If the entropy of a run is characterized by the number of options in the body’s movements, then we also minimize entropy by minimizing movement. Therefore, if we quantify a good run based on its low entropy, then the best run is sitting on the couch and watching TV.

Obviously, this is not a good run, and might even be said to be the worst run. Any of the bad run characteristics listed above is better than sitting on the couch, because at least the person is running.

As a less extreme example, someone could just shuffle along, which is not a bad way to transport oneself from point A to point B, but is also not the same as running. This shows that entropy alone is insufficient to characterize a good run, since we can minimize it without running at all.

How can we fix this problem? Returning to our examples of good and bad runs, we can see that a good run is partly defined by what it is not. A run consists of a large number of movements. A bad run deviates from the ideal form of these movements, and a good run performs them consistently.

Progression from bad to good is a movement from high entropy to low entropy. On the other hand, perfecting one’s couch potato routine is a movement (or lack thereof) from low entropy to low entropy.

Now we see how to fix the information metric for a good run. A good run is measured by the difference between the entropy of the possible range of motion and the entropy of the actual range of motion. With many body movements, there is a very large range of possible motion, so the background entropy is high. When runners are starting to learn good running form, the entropy of their form will resemble the background entropy. As they improve over time, the entropy of their motion will decrease and will diverge from the background entropy.

Thus, instead of using a single entropy measure, we take the difference between two entropy measures. Now we have a more sophisticated information metric for run performance.

We can say that the first entropy measure, that of the entire possible range of motion while running, is the unconditioned entropy. It represents all the possible paths our limbs can take when trying to run. There is no condition to constrain the range of possibility. The second entropy measure describes runners who have developed their form, so the set of possible paths is constrained by this condition. This second measure is thus the conditioned entropy. In information theory, the difference between the unconditioned and conditioned entropy measures is called mutual information.
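In standard notation, the difference described above can be written as follows. The symbols are assumptions introduced here for illustration: X stands for the path the body takes, and Y stands for the condition, such as the runner's trained form.

```latex
I(X;Y) = H(X) - H(X \mid Y),
\qquad
H(X) = -\sum_{x} p(x) \log_2 p(x),
\qquad
H(X \mid Y) = \sum_{y} p(y)\, H(X \mid Y = y)
```

Here H(X) plays the role of the unconditioned entropy, H(X | Y) plays the role of the conditioned entropy, and I(X;Y) is the mutual information, measured in bits when the logarithm is base 2.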

Back to our test case of the couch potato. Does the mutual information metric allow us to now distinguish between a couch potato and an Olympian? Recall that previously, when we were measuring a single entropy value for the run form, it turned out the couch potato is the best runner of all, even better than Usain Bolt (above in 2016, courtesy Fernando Frazão/Agência Brasil). The couch potato shows almost zero possible range of motion. But with the new difference metric, hopefully the situation changes.

When we apply the mutual information metric, we start with the unconditioned entropy, which describes the unconstrained possible range of motion. In the case of the couch potato, we will say this is effectively zero. In the case of Usain Bolt, this range contains all the possible running forms of all the possible runners and thus is much higher than that of the couch potato.

Now for the conditioned entropy. The couch potato continues to exhibit no change in range of motion so the conditioned entropy for the couch potato remains zero. On the other hand, Usain Bolt represents an extreme refinement of running form and thus his range of motion is much smaller than the general case. Consequently, the conditioned entropy for Usain Bolt is much smaller than the unconditioned entropy.

Finally, let’s calculate the mutual information. For the couch potato, zero minus zero is zero, so we have zero mutual information.

On the other hand, Usain Bolt’s mutual information is a high entropy value minus a low entropy value, so his mutual information is much greater than zero.
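The subtraction above can be sketched with a few lines of code. This is a rough illustration, not a real measurement: it assumes every available running form is equally likely, so that entropy is just the base-2 logarithm of the number of forms, and the counts (1, 2, and 1024) are invented for the example.

```python
import math

def uniform_entropy(num_options):
    """Entropy in bits when each of num_options forms is equally likely."""
    return math.log2(num_options)

def form_information(possible_forms, actual_forms):
    """Unconditioned entropy minus conditioned entropy, in bits."""
    return uniform_entropy(possible_forms) - uniform_entropy(actual_forms)

# Hypothetical counts of distinguishable movement patterns.
couch_potato = form_information(possible_forms=1, actual_forms=1)
beginner = form_information(possible_forms=1024, actual_forms=1024)
sprinter = form_information(possible_forms=1024, actual_forms=2)

print(couch_potato)  # 0.0 bits: zero minus zero
print(beginner)      # 0.0 bits: form still matches the background entropy
print(sprinter)      # 9.0 bits: high entropy minus low entropy
```

Under these assumptions the couch potato and the untrained beginner both score zero, for different reasons, while the refined sprinter scores high. That matches the distinction the single entropy value could not make.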

Thus we see that, by measuring the mutual information of body movement, we can characterize a good run as possessing high mutual information. Consequently, the same fundamental mathematical theory that has brought us phenomenal technology can also help us become phenomenal runners, or at least help us get off the couch and into our sneakers.

Further reading:

● COVID-19: When 900 bytes shut down the world

● A great physicist warned us, information precedes matter and energy: Bit before it.