Data Mining: A Plague, Not a Cure

It is tempting to believe that patterns are unusual and their discovery meaningful; in large data sets, patterns are inevitable and generally meaningless.

The scientific revolution that has improved our lives in so many wonderful ways is based on the fundamental principle that theories about the world we live in should be tested rigorously. For example, centuries ago, more than 2 million sailors died from scurvy, a ghastly disease that is now known to be caused by a prolonged vitamin C deficiency. The conventional wisdom at the time was that scurvy was a digestive disorder caused by sailors working too hard, eating salt-cured meat, and drinking foul water. Among the recommended cures were the consumption of fresh fruit and vegetables, white wine, sulfate, vinegar, sea water, beer, and various spices.

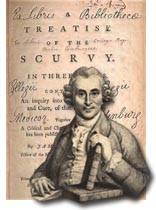

In 1747, a doctor named James Lind (1716–1794, left) conducted an experiment while aboard a British ship. He selected 12 scurvy patients who were “as similar as I could have them.” They were all fed a common diet of water-gruel, mutton-broth, boiled biscuit, and other unappetizing sailor fare. Lind then divided the 12 patients into six pairs so that he could compare the effectiveness of six recommended cures. Two patients drank a quart of hard cider every day; two took 25 drops of sulphate; two were given two spoonfuls of vinegar three times a day; two drank seawater; two were given two oranges and a lemon every other day; and two were given a concoction that included garlic, myrrh, mustard, and radish root.

Lind concluded:

… the most sudden and visible good effects were perceived from the use of oranges and lemons; one of those who had taken them, being at the end of six days fit for duty … The other was the best recovered of any in his condition; and … was appointed to attend the rest of the sick.

(Dr James Lind’s “Treatise on Scurvy” published in Edinburgh in 1753)

Unfortunately, his experiment was not widely reported, and Lind did little to promote it. He later wrote, “The province has been mine to deliver precepts; the power is in others to execute.”

The medical establishment continued to believe that scurvy was a digestive disorder brought on by the sailors’ hard work and bad diet, and that it could be cured by “fizzy drinks” containing sulphuric acid, alcohol, and spices.

Finally, in 1795, the year after Lind died, Gilbert Blane, a British naval doctor, persuaded the British navy to add lemon juice to the sailors’ daily ration of rum—and scurvy virtually disappeared on British ships.

Lind’s experiment was a serious attempt to test six recommended medications, though it could have been improved in several ways:

– There should have been a control group that received no treatment. Lind’s study found that the patients given citrus fared better than those given seawater. But maybe that was due to the ill effects of seawater, rather than the beneficial effects of citrus.

– The distribution of the purported medications should have been determined by a random draw. Maybe Lind believed citrus was the most promising cure and subconsciously gave citrus to the healthiest patients.

– It would have been better if the experiment had been double-blind, so that neither Lind nor the patients knew who was getting which treatment. Otherwise, Lind may have seen what he wanted to see, and the patients may have told Lind what they thought he wanted to hear.

– The experiment should have been larger. Two patients taking a medication is anecdotal, not compelling, evidence.

Nonetheless, Lind’s experiment remains a nice example of the power of statistical tests that begin with a falsifiable theory, followed by the collection of data, ideally through a controlled experiment, to test the theory.

Today, powerful computers and vast amounts of data make it tempting to reverse the process by putting data before theory. Using our scurvy example, we might amass a vast amount of data about the sailors and notice that sufferers often had earlobe creases, short last names, and a fondness for pretzels, and then invent fanciful theories to explain these correlations.

This reversal of statistical testing goes by many names, including data mining and HARKing (Hypothesizing After the Results are Known). The harsh sound of the acronym itself reflects its dangers: It is tempting to believe that patterns are unusual and their discovery meaningful; in large data sets, patterns are inevitable and generally meaningless.
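To make the sailors example concrete, here is a minimal Python sketch built on assumptions of our own choosing: 50 hypothetical sailors, a purely random "severity" score, and 1,000 purely random "traits" standing in for earlobe creases, name length, or pretzel consumption. Nothing is related to anything, yet some traits will still correlate with the outcome strongly enough to pass a conventional 5% significance test. The cutoff of 0.28 is an approximation of the two-sided 5% critical value of the correlation coefficient for 50 observations, and the function names are illustrative, not taken from any library.

```python
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def count_spurious_correlations(n_sailors=50, n_variables=1000,
                                threshold=0.28, seed=1747):
    """Correlate a random 'outcome' with many random 'traits' (all
    hypothetical) and count how many clear an approximate 5% bar.

    Every variable is independent noise, so every hit is a phantom."""
    rng = random.Random(seed)
    outcome = [rng.gauss(0, 1) for _ in range(n_sailors)]
    hits = 0
    for _ in range(n_variables):
        trait = [rng.gauss(0, 1) for _ in range(n_sailors)]
        if abs(pearson_r(trait, outcome)) > threshold:
            hits += 1
    return hits


if __name__ == "__main__":
    print(count_spurious_correlations())
```

Because each test carries roughly a 5% chance of a false positive, screening 1,000 unrelated variables should be expected to turn up dozens of "discoveries," each one an invitation to invent a fanciful theory.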

Decades ago, data mining was considered a sin comparable to plagiarism. Today, the data mining plague is seemingly everywhere, cropping up in medicine, economics, management, and, now, history. Scientific historical analyses are inevitably based on data: documents, fossils, drawings, oral traditions, artifacts, and more. But now, historians are being urged to embrace the data deluge as teams systematically assemble large digital collections of historical data that can be data mined.

Tim Kohler, an eminent professor of Archaeology and Evolutionary Anthropology, has touted the “glory days” created by opportunities for mining these stockpiles of historical data:

By so doing we find unanticipated features in these big-scale patterns with the capacity to surprise, delight, or terrify. What we are now learning suggests that the glory days of archaeology lie not with the Schliemanns of the nineteenth century and the gold of Troy, but right now and in the near future, as we begin to mine the riches in our rapidly accumulating data, turning them into knowledge.

The promise is that an embrace of formal statistical tests can make history more scientific. The peril is the ill-founded idea that useful models can be revealed by discovering unanticipated patterns in large databases where meaningless patterns are endemic. Statisticians bearing algorithms are a poor substitute for expertise.

For example, one algorithm that was used to fill in missing values in a historical database concluded that Cuzco, the capital of the Inca Empire, once had only 62 inhabitants, while the empire’s largest settlement had 17,856 inhabitants. Humans would know better.

Peter Turchin has reported that his study of historical data revealed two interacting cycles that correlate with social unrest in Europe and Asia going back to 1000 BC. We are accustomed to seeing recurring cycles in our everyday lives: night and day, planting and harvesting, birth and death. The idea that societies have long, regular cycles, too, has a seductive appeal to which many have succumbed. It is instructive to look at two examples that can be judged with fresh data.

Based on movements of various prices, the Soviet economist Nikolai Kondratieff concluded that economies go through 50-60 year cycles (now called Kondratieff waves). The apparent statistical support for most cycle theories is bolstered by the flexibility of starting and ending dates and the coexistence of overlapping cycles. In this case, those include Kitchin cycles of 40-59 months, Juglar cycles of 7-11 years, and Kuznets swings of 15-25 years. Kondratieff himself believed that Kondratieff waves coexisted with both intermediate (7-11 years) and shorter (about 3 1/2 years) cycles. This flexibility is useful for data mining historical data, but it undermines the credibility of the conclusions, as do specific predictions that turn out to be incorrect.
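The effect of this flexibility can be sketched in Python. The simulation below is a hypothetical illustration, not a reconstruction of Kondratieff's method: it generates a random walk that contains no cycles by construction, then fits a sine wave to it by least squares. Fitting sine and cosine terms together lets the fit choose any phase, which plays the role of a flexible starting date; searching over many candidate periods plays the role of choosing among overlapping cycles. The 200-step series, the seed, and the period ranges are arbitrary assumptions.

```python
import math
import random


def centered(values):
    """Subtract the mean so an intercept drops out of the regression."""
    mean = sum(values) / len(values)
    return [v - mean for v in values]


def dot(u, v):
    return sum(p * q for p, q in zip(u, v))


def sine_fit_r2(series, period):
    """R^2 of the least-squares fit y ~ a + b*sin(2*pi*t/P) + c*cos(2*pi*t/P).

    Using both sin and cos lets the fit pick any phase (i.e., start date)."""
    n = len(series)
    s = centered([math.sin(2 * math.pi * k / period) for k in range(n)])
    c = centered([math.cos(2 * math.pi * k / period) for k in range(n)])
    y = centered(series)
    sss, scc, ssc = dot(s, s), dot(c, c), dot(s, c)
    ssy, scy = dot(s, y), dot(c, y)
    det = sss * scc - ssc * ssc          # solve the 2x2 normal equations
    total = dot(y, y)
    if det <= 1e-12 or total <= 0:
        return 0.0
    b = (scc * ssy - ssc * scy) / det
    cos_coef = (sss * scy - ssc * ssy) / det
    explained = b * ssy + cos_coef * scy  # explained sum of squares
    return explained / total


def best_cycle(series, candidate_periods):
    """Try every candidate period and keep the best fit as (r2, period)."""
    return max((sine_fit_r2(series, p), p) for p in candidate_periods)


def random_walk(n, seed):
    """A hypothetical 'price' series: cumulative sum of random shocks."""
    rng = random.Random(seed)
    level, path = 0.0, []
    for _ in range(n):
        level += rng.gauss(0, 1)
        path.append(level)
    return path


if __name__ == "__main__":
    prices = random_walk(200, seed=1926)      # 200 "years" of pure noise
    print(best_cycle(prices, [55]))           # one fixed period
    print(best_cycle(prices, range(40, 61)))  # any 40-60 year "wave"
    print(best_cycle(prices, range(3, 61)))   # any period from 3 to 60 years
```

Because each wider search includes the narrower one, the best fit can only improve as more periods are allowed. Random walks also tend to drift in slow swings that a long-period sine can mimic, so an impressive-looking fit says little on its own.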

In the 1980s and 1990s, some Kondratieff enthusiasts predicted a Third World War in the early 21st century:

the probability of warfare among core states in the 2020s will be as high as 50/50.

More recently, there have been several divergent, yet incorrect, Kondratieff-wave economic forecasts:

in all probability we will be moving from a ‘recession’ to a ‘depression’ phase in the cycle about the year 2013 and it should last until approximately 2017–2020.

The Elliott Wave theory is another example of how coincidental patterns can be discovered in historical data. In the 1930s, an accountant named Ralph Nelson Elliott studied Fibonacci series and concluded that movements in stock prices are the complex result of nine overlapping waves, ranging from Grand waves that last centuries to Subminuette waves that last minutes.

Elliott proudly proclaimed that, “because man is subject to rhythmical procedure, calculations having to do with his activities can be projected far into the future with a justification and certainty heretofore unattainable.” He was fooled by the phantom patterns that can be found in virtually any set of data, even random coin flips. And, just like coin flips, guesses based on phantom patterns are sometimes right and sometimes wrong.
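Phantom patterns of this kind are easy to reproduce with simulated coin flips. This minimal Python sketch, with parameters chosen purely for illustration, flips a fair virtual coin 100 times, records the longest streak of identical outcomes, and repeats the experiment many times to estimate how often a streak of six or more appears. A chartist looking at such a streak might see a trend, but each flip is independent of the last.

```python
import random


def longest_run(flips):
    """Length of the longest streak of identical consecutive outcomes."""
    best = current = 1 if flips else 0
    for prev, nxt in zip(flips, flips[1:]):
        current = current + 1 if nxt == prev else 1
        best = max(best, current)
    return best


def share_with_long_streak(n_flips=100, streak=6, trials=5_000, seed=1938):
    """Fraction of simulated fair-coin sequences (illustrative parameters)
    whose longest run of heads or tails is at least `streak` long."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        flips = [rng.choice("HT") for _ in range(n_flips)]
        if longest_run(flips) >= streak:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    print(share_with_long_streak())
```

Standard run-length results imply that a streak of six or more turns up in a large majority of 100-flip sequences, so a "trend" of that length is the norm, not a signal.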

In March 1986, Elliott-wave enthusiast Robert Prechter was called the “hottest guru on Wall Street” after a bullish forecast he made in September 1985 came true. Buoyed by this success, he confidently predicted that the Dow Jones Industrial Average would rise to 3600–3700 by 1988. The highest level of the Dow in 1988 turned out to be 2184. In October 1987, Prechter said that, “The worst case [is] a drop to 2295,” just days before the Dow collapsed to 1739. In 1993 the Dow hit 3600, just as Prechter predicted, but six years after he said it would.

Finding patterns in data is easy. Finding meaningful patterns that have a logical basis and can be used to make accurate predictions is much harder.

Data are essential for the scientific testing of well-founded hypotheses, and should be welcomed by researchers in every field where reliable, relevant data can be collected. However, the ready availability of plentiful data should not be interpreted as an invitation to ransack the data for patterns or to dispense with human knowledge. The data deluge makes common sense, wisdom, and expertise essential.

Data mining is a plague, not a panacea.
