Playing Tetris Shows That True AI Is Impossible

Here’s a look inside my brain that will show you why

Hi there! I recently put together an electroencephalogram (EEG), or in plain words, a brain wave reader, so you can see what goes on inside my brain!

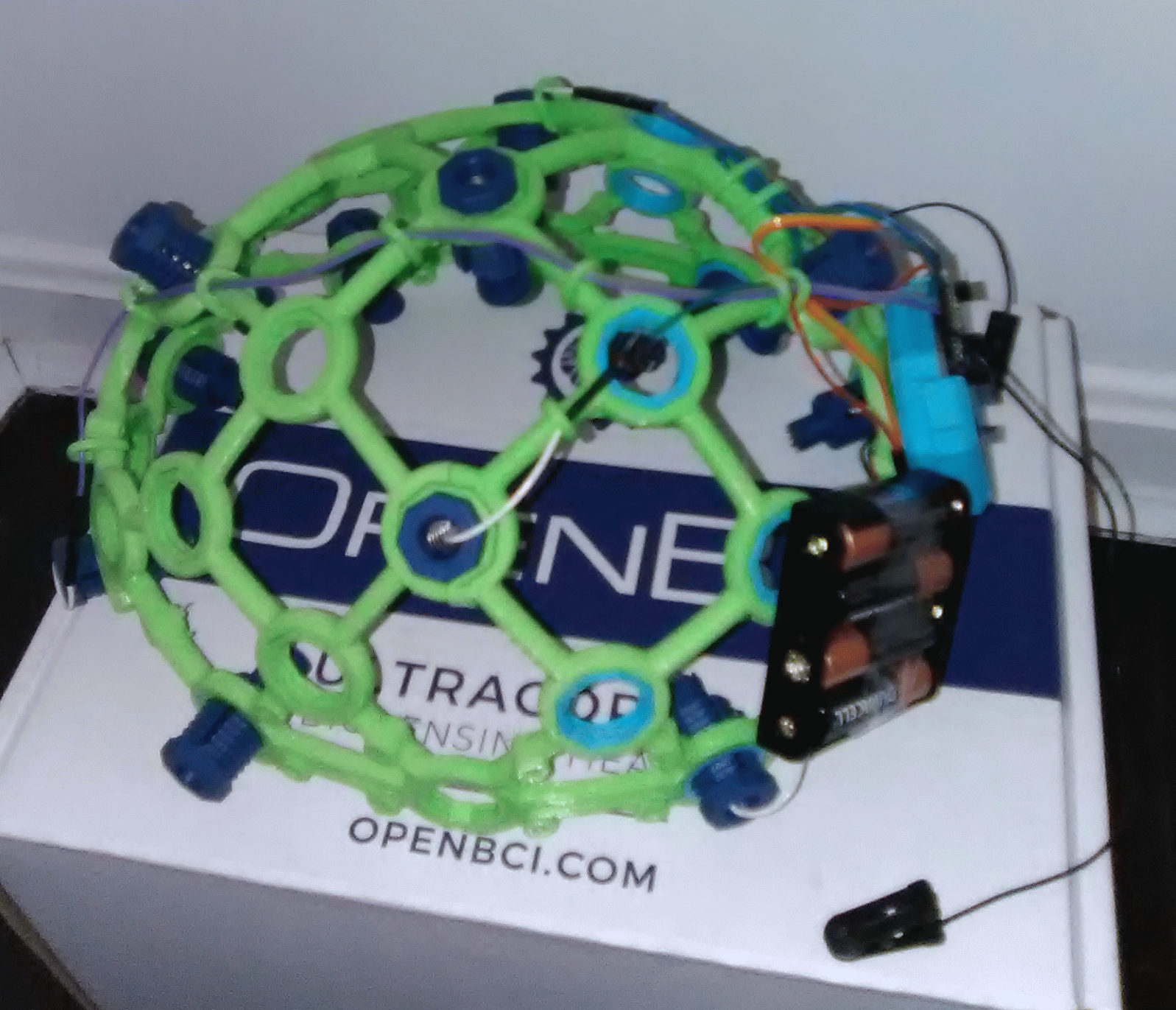

I received a kit from OpenBCI, a successful Kickstarter project to make inexpensive brain wave readers available to the masses. Here’s what it looks like:

Yes, it looks like something Calvin and Hobbes would invent.

Here is how it looks on my head:

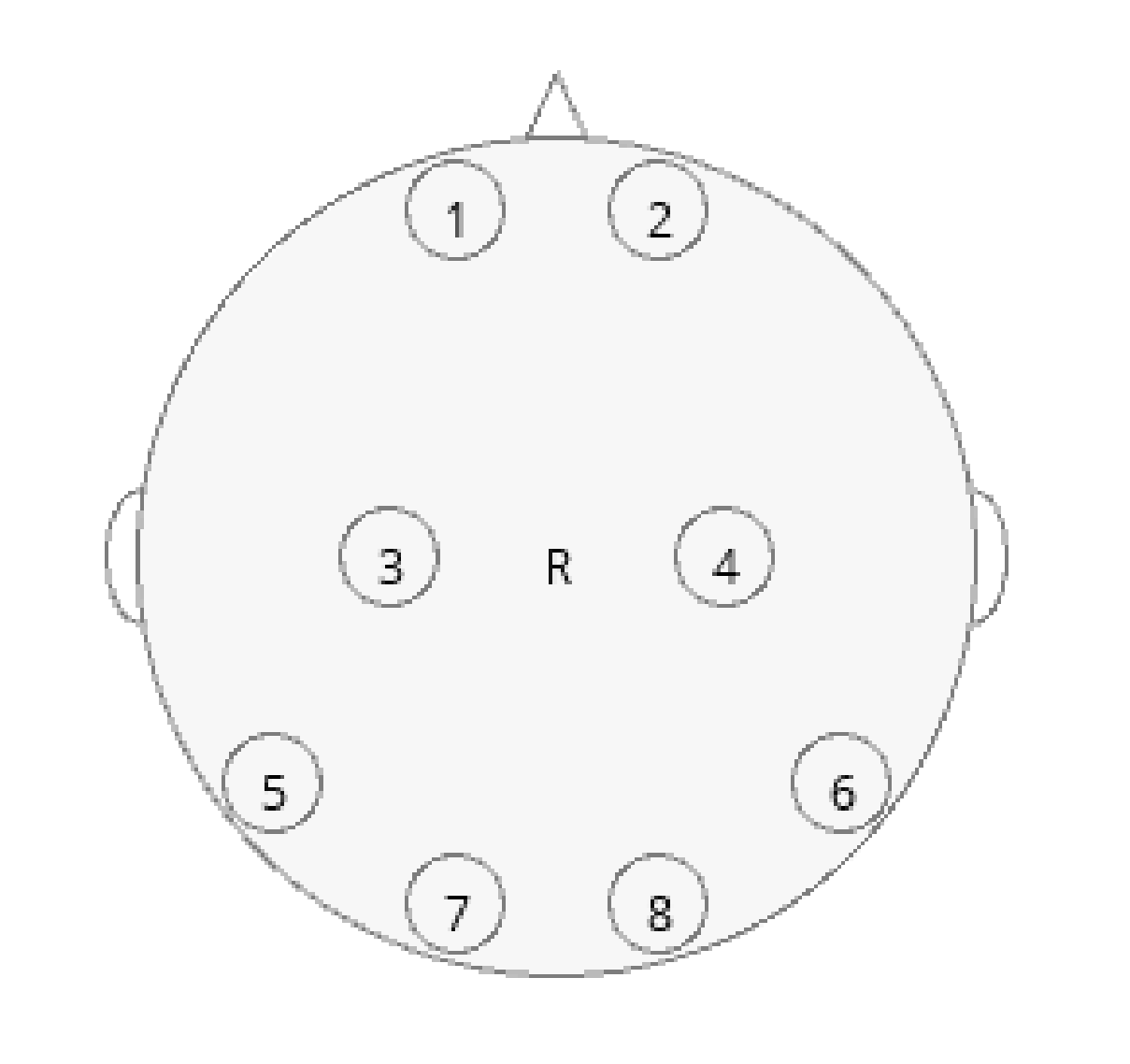

A number of electrodes are touching my scalp and a wire is connected to my ear. The layout on my head looks like the following schematic:

The EEG measures the voltage between different points on my scalp and my earlobe. The electrodes on my scalp pick up tiny electrical signals from my brain, while my earlobe acts as the reference point (the ground). The EEG is essentially a multimeter for my brain.

Brain waves are generated by ions building up inside the neurons. Once the neurons reach capacity, they release the ions in a cascade across the brain. This leads to the wave effect.

So can I see any connection between my brain waves and what I’m consciously experiencing in my mind?

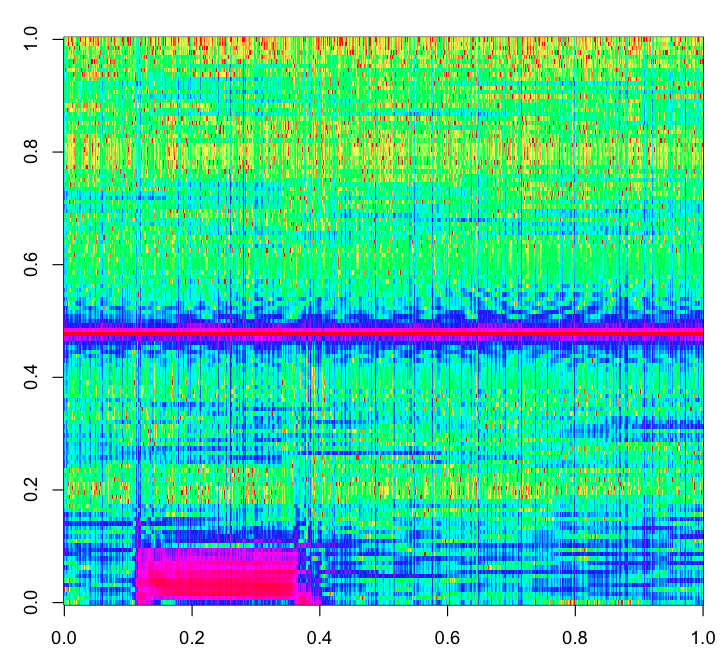

To test that, inspired by the EEG hacker blog, I generated a graphic known as a spectrogram of my brain waves across a set of activities.

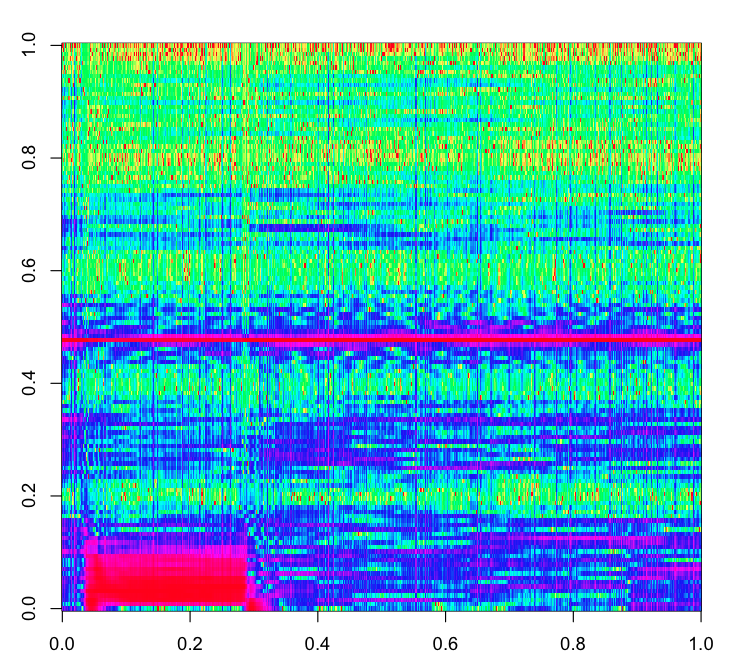

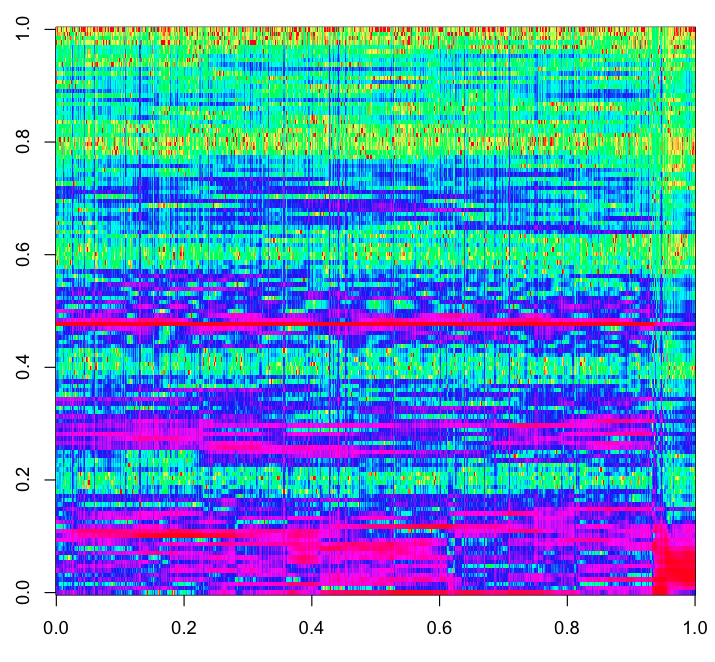

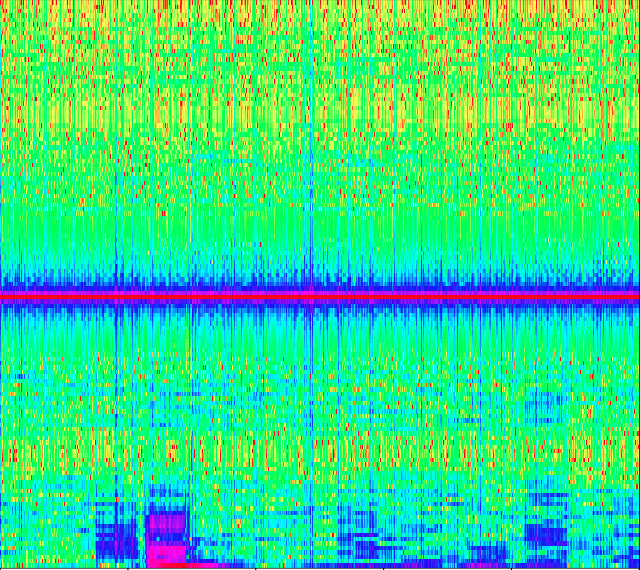

The spectrogram shows the range of brainwave frequencies in my brain at a given point in time. In the following plots, the horizontal axis is time, and the vertical axis is frequency. There are some artifacts in the plots, such as a middle band and a big pink blotch, so don’t take all patterns as significant. The important thing to note is the overall texture of the plot.

The greens and reds are low amplitude frequencies, and the blue and magenta are high amplitude frequencies, meaning those brain waves are stronger. The spectrogram is generated from the readings of the #1 electrode in the schematic above.
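To make the idea of a spectrogram concrete, here is a minimal sketch in plain Python. It is not the code from my repository; it assumes a made-up test signal (a 10 Hz tone followed by a 20 Hz tone, sampled at a hypothetical 128 Hz) and slices it into short overlapping windows, then measures how strong each frequency is within each window. The function names, window length, and sampling rate are all illustrative choices.

```python
import cmath
import math


def hann(n):
    """Hann window of length n, used to reduce spectral leakage."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / n) for i in range(n)]


def spectrogram(samples, fs, win_len=64, hop=32):
    """Short-time Fourier transform power.

    Returns (times, freqs, power), where power[t][k] is the squared
    magnitude of frequency bin k for the window starting at times[t].
    """
    window = hann(win_len)
    n_bins = win_len // 2 + 1
    freqs = [k * fs / win_len for k in range(n_bins)]
    times, power = [], []
    for start in range(0, len(samples) - win_len + 1, hop):
        seg = [samples[start + i] * window[i] for i in range(win_len)]
        row = []
        for k in range(n_bins):
            # Naive discrete Fourier transform of one windowed segment.
            acc = sum(
                seg[i] * cmath.exp(-2j * math.pi * k * i / win_len)
                for i in range(win_len)
            )
            row.append(abs(acc) ** 2)
        times.append(start / fs)
        power.append(row)
    return times, freqs, power


# Synthetic stand-in for one electrode (NOT real EEG data):
# 2 seconds of a 10 Hz wave, then 2 seconds of a 20 Hz wave.
fs = 128  # hypothetical sampling rate in Hz
signal = [math.sin(2 * math.pi * 10 * t / fs) for t in range(2 * fs)]
signal += [math.sin(2 * math.pi * 20 * t / fs) for t in range(2 * fs)]

times, freqs, power = spectrogram(signal, fs)

# Strongest frequency in the first and last time slices.
first_peak = freqs[max(range(len(freqs)), key=lambda k: power[0][k])]
last_peak = freqs[max(range(len(freqs)), key=lambda k: power[-1][k])]
print(first_peak, last_peak)
```

Each row of `power` is one vertical slice of the plot, and coloring each value by its size gives the kind of picture shown in this post. A real analysis would more likely use a numerical library's FFT instead of this slow hand-rolled transform.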

I performed three different activities to see how they affect the spectrogram. Results and code are provided at https://github.com/yters/eeg_tetris.

First, I just absentmindedly tapped the Enter key on my keyboard. I did not focus on anything in particular, just pressed Enter whenever I felt like it. This is the EEG spectrogram that random tapping generated:

Second, I played a game of Tetris at a very slow speed, using a GitHub repo.

Here’s a video of the game speed:

This is the corresponding spectrogram:

Finally, I played Tetris much faster, and the spectrogram looked like this:

You can watch a video of the game speed here:

The big difference is that, as my activity became cognitively more difficult, the spectrogram became more blue and magenta, meaning that my brain waves became stronger.

What does this mean? It means that, at least at a high level, I can measure how cognitively difficult a mental task is.
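One rough way to turn "more blue and magenta" into a number is to compute the average power of each recording and compare them in decibels. The sketch below is only an illustration under stated assumptions: the three traces are made-up noise with arbitrarily chosen amplitudes, not my actual recordings, and `mean_power_db` and `synthetic_recording` are hypothetical helper names.

```python
import math
import random


def mean_power_db(samples):
    """Average signal power in decibels, relative to unit amplitude squared."""
    mean_sq = sum(x * x for x in samples) / len(samples)
    return 10 * math.log10(mean_sq)


def synthetic_recording(amplitude, n=1024, seed=0):
    """Made-up Gaussian noise standing in for an EEG trace (NOT real data)."""
    rng = random.Random(seed)
    return [amplitude * rng.gauss(0, 1) for _ in range(n)]


# Amplitudes are arbitrary, chosen only to illustrate the comparison.
recordings = {
    "tapping": synthetic_recording(1.0),
    "slow tetris": synthetic_recording(2.0),
    "fast tetris": synthetic_recording(4.0),
}

scores = {name: mean_power_db(trace) for name, trace in recordings.items()}
for name, db in scores.items():
    print(f"{name}: {db:.1f} dB")
```

With real recordings, a higher score would correspond to an overall stronger signal, which is what the spectrogram colors are showing. A single number like this hides which frequencies changed, so it complements the plots but does not replace them.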

Another interesting thing is the direction of causality. The intensity of my mental processing brought about an observable brain state. The causality did not go in the other direction; the magenta brain state did not intensify my conscious processing.

So my subjective mental experience brought about a change in my physical brain. In other words, my consciousness has a causal impact on my physical processing unit, the brain.

This type of observation causes a problem for those hoping to duplicate human intelligence in a computer program. This Tetris EEG experiment shows that conscious thought is essential to human intelligence. So, until we make conscious computers, which is most likely never, we will not have computers that display human intelligence.

Update: Someone online suggested the effect might just be my facial muscle tension. So I tested the idea by recording while I tensed my brow (where the electrode is placed). https://github.com/yters/eeg_tetris

The result looked no different than the tapping EEG, so I consider the ‘just facial tension’ hypothesis falsified.

If you enjoyed this item, here are some of Eric Holloway’s other reflections on human consciousness and computer intelligence:

No materialist theory of consciousness is plausible: All such theories either deny the very thing they are trying to explain, result in absurd scenarios, or end up requiring an immaterial intervention

We need a better test for AI intelligence: Better than Turing or Lovelace. The difficulty is that intelligence, like randomness, is mathematically undefinable

and

Will artificial intelligence design artificial superintelligence? And then turn us all into super-geniuses, as some AI researchers hope? No, and here’s why not