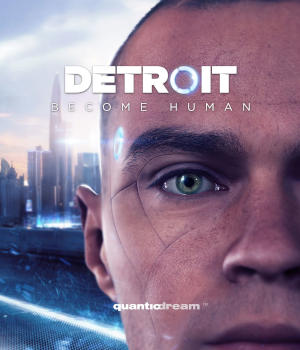

A Closer Look at Detroit: Become Human, Part I

Gaming culture provides a window into our culture’s assumptions about artificial intelligence

While the accelerated growth of artificial intelligence (AI) technologies is certainly nothing new, the attention it has garnered in popular media is more recent. Movies like Her (2013), Ex Machina (2014), and Chappie (2015) offer fictional futures for AI entities. And, although AI is no stranger to gaming culture, the hype prompts a closer look at its role there. Detroit: Become Human (2018) is a good place to start.

In this three-part review, I want to look first at the narrative, the story itself. Second, I would like to separate the glamor of film from the deeply rooted worldview and philosophical assumptions that underlie that narrative. Lastly, I would encourage you to consider that traditional philosophy has more to say about AI than you may think.

Detroit 2038. The city has transcended its current economic despair, emerging as the epicenter of an android revolution. Cyberlife, headquartered there, is the first company to engineer and produce fully autonomous, general-purpose AI androids for consumers. They are bought and used like laptops. Go to a Cyberlife store, purchase your new maid or nanny, and go home with it. The story centers on three of these androids: Markus, Kara, and Connor.

In the first chapter, Markus lives with Carl Manfred, an elderly painter. Carl is kind and loving; he teaches Markus that not all humans are evil. But Carl dies of a heart attack in his studio, and his son Leo (who had arrived to steal his father’s paintings for money) blames Markus. Two police officers promptly arrive and shoot Markus, shutting him down.

Markus wakes up at the bottom of a trash pit filled with the scraps and leftovers of countless androids. Somewhat like a phoenix rising from the ashes, he slowly repairs himself and makes his ascent to the top. He emerges changed; he is no longer just an android. For the rest of the story, he goes on to free countless other androids from their programming and give them free will (I’ll get to the blatant religious symbolism later). Working from a refugee camp called Jericho, he fights to bring equality, civil rights, and recognition to his people. Markus becomes the leader of a nationwide movement.

By the end of the story, it’s easy to forget that Markus is an android. We quickly move past questions about being human, about what it means to be alive. Because it is a game, Markus’s eventual fate is for you to decide. Throughout the story, you’re confronted with decisions that will determine how it will unfold. The ending I chose was peace. Markus resists violence and instead resorts to peaceful protest. In the end, Markus’s strong will gives way to open dialogue with the humans about recognizing androids as a new form of intelligent life.

In another thread of the story, Kara is a nanny bot owned by Todd Williams, an overweight, violent alcoholic. As her story unfolds, Kara builds a close relationship with Todd’s daughter, Alice. During Kara’s time living with Todd, we get our first real look at how owners mistreat androids.

One night, Todd flies into a rage, blaming Alice for the fact that his wife left him. After taking a few hits of a future drug called Red Ice, he charges upstairs, belt in hand, toward her room. Kara, overcome by concern for Alice, breaks past her programming. She runs upstairs, struggles with Todd, and shoots him with a gun she finds in his room. Kara and Alice run away, in fear for their lives.

Through the rest of the story, Kara and Alice come across many people (some good and some intent on killing them) on their way to Canada. There are no laws regarding androids in Canada, so living there would mean freedom. In the final scene of her story, Kara makes it to Canada after a treacherous crossing, carrying Alice onto a beach. However, this outcome depends on the decisions made throughout the game. My decision to prefer peace and kindness over violence may have led me to a different ending from the one other players experience.

Connor, in a third thread, is a new prototype android. He was created by Cyberlife to investigate the “deviants” (the androids who have escaped their programming). He is programmed to follow orders strictly and equipped with the best technology for crime scene investigations. The game opens with Connor arriving at a hostage-taking in which a young girl is being held over the ledge of a high rise by a deviant. After a few minutes during which the player is offered choices of dialogue, he saves the girl, which results in the deviant’s destruction. Shortly afterward, we are introduced to Lt. Hank Anderson of the Detroit City Police Department. Despite his hatred for androids, Hank is assigned to investigate cases involving deviants, and Connor is assigned as his partner.

Hank despises androids, but he knows that he must work with Connor in order to close the deviant cases they encounter. Connor’s disposition throughout the game depends on the choices the player has Connor make. In the peaceful ending I chose, Connor eventually breaks past his programming after an encounter with Markus. This leads to Connor freeing the androids imprisoned at Cyberlife and joining the protest for rights.

The androids seem to have a built-in capacity to break through their programming and assert free will. What worldview underlies the assumption that they can somehow do that? In Part II, we will look at one component of that worldview, the AI religion.

Adam Nieri, Program Assistant, has interests in philosophy of science and philosophy of mind, and he holds an MA in Science and Religion from Biola University. He has a background in social media and marketing, photography/graphic design, IT, and teaching.

See also: Does brain stimulation research challenge free will? (Michael Egnor)

and

Is free will a dangerous myth? (Michael Egnor)