New Learning Model for Brain Overturns 70 Years of Theory

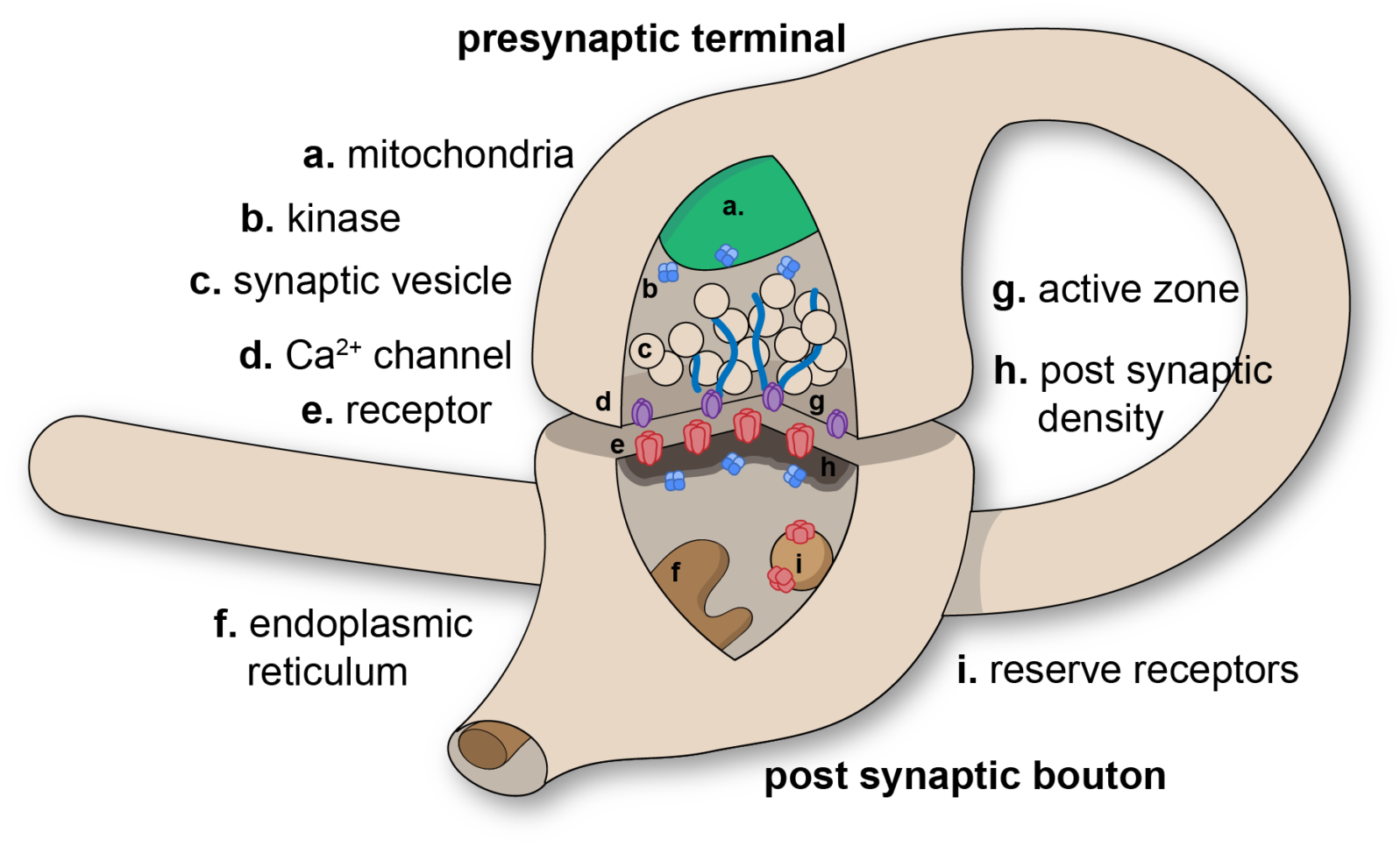

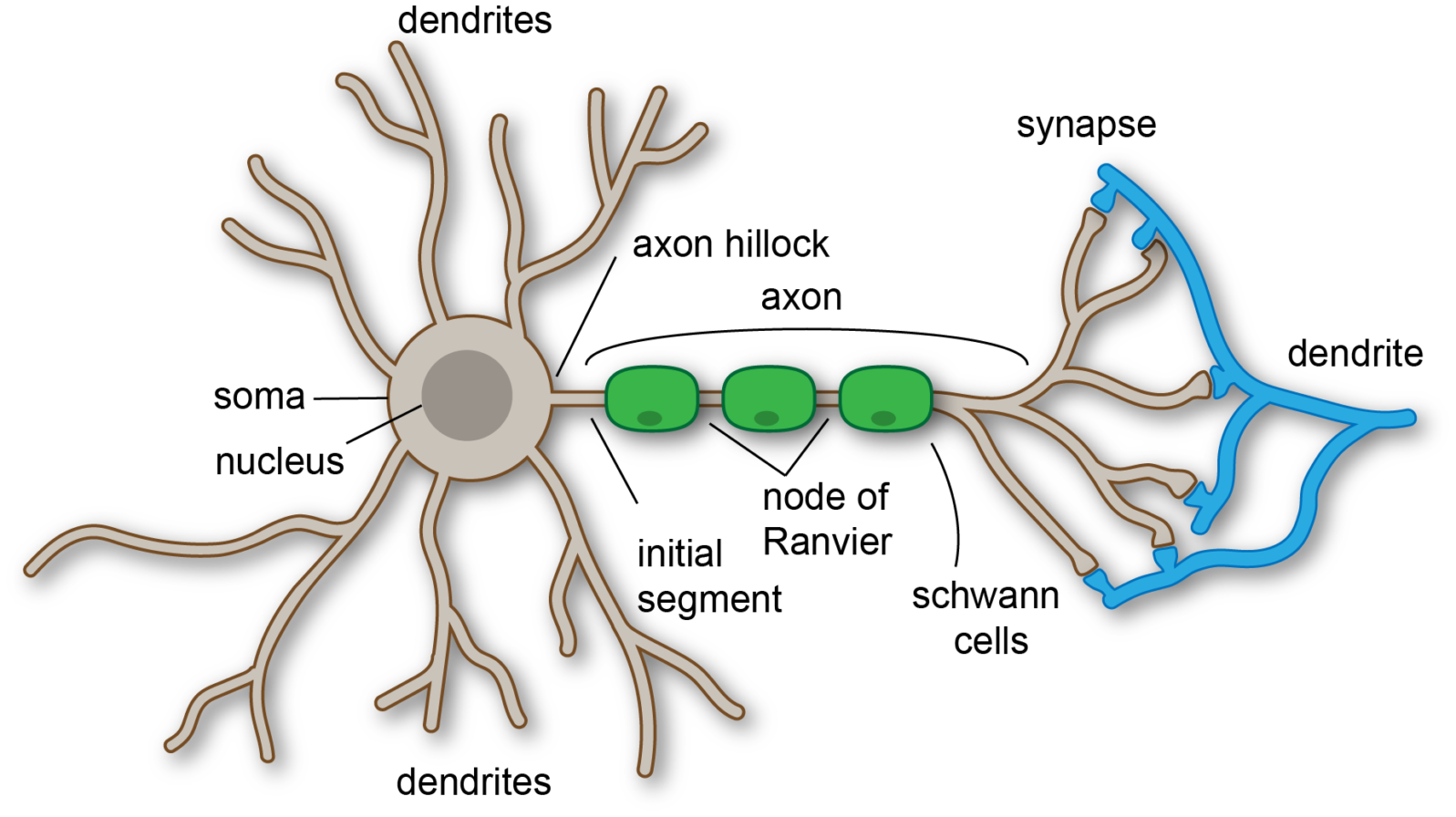

The new model, if confirmed, could change the way algorithms are developed

According to new research, when learning takes place, it’s not just the synapses (by which neurons send signals to each other) but the whole communication structure (the dendrites) of the neuron that changes. The researchers compare the synapses to leaves and the dendrites to a tree.

If it replicates, this would be a radical revision of a hypothesis that has stood for some 70 years.

For the last 70 years a core hypothesis of neuroscience has been that brain learning occurs by modifying the strength of the synapses, following the relative firing activity of their connecting neurons. This hypothesis has been the basis for machine and deep learning algorithms which increasingly affect almost all aspects of our lives. But after seven decades, this long-lasting hypothesis has now been called into question.

In an article published today in Scientific Reports, researchers from Bar-Ilan University in Israel reveal that the brain learns completely differently than has been assumed since the 20th century. The new experimental observations suggest that learning is mainly performed in neuronal dendritic trees, where the trunk and branches of the tree modify their strength, as opposed to modifying solely the strength of the synapses (dendritic leaves), as was previously thought. These observations also indicate that the neuron is actually a much more complex, dynamic and computational element than a binary element that can fire or not. Just one single neuron can realize deep learning algorithms, which previously required an artificial complex network consisting of thousands of connected neurons and synapses.

Bar-Ilan University, “New brain learning mechanism calls for revision of long-held neuroscience hypothesis” at ScienceDaily (May 1, 2022)
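To make the contrast in the excerpt concrete, here is a toy Python sketch. It is not the researchers' model: the linear neuron, the learning rate, the function names and the assignment of synapses to branches (`branch_of`) are all assumptions made for illustration. It only shows the difference between adjusting one strength per synapse (the "leaves") and adjusting one strength per dendritic branch (the "tree").

```python
# Toy illustration only; every name and number here is a hypothetical choice.

def synaptic_hebbian_step(weights, x, lr=0.1):
    """Classic picture: each synapse has its own adjustable strength."""
    # Postsynaptic activity of a simple linear neuron.
    y = sum(w * xi for w, xi in zip(weights, x))
    # Hebbian rule: a synapse strengthens when input and output are active together.
    return [w + lr * xi * y for w, xi in zip(weights, x)]


def dendritic_step(strengths, branch_of, x, lr=0.1):
    """Assumed dendritic picture: one adjustable strength per branch,
    shared by all the synapses that sit on that branch."""
    # Sum the inputs arriving on each branch.
    branch_input = [0.0] * len(strengths)
    for b, xi in zip(branch_of, x):
        branch_input[b] += xi
    y = sum(s * bi for s, bi in zip(strengths, branch_input))
    # Same Hebbian-style rule, applied to branches rather than synapses.
    return [s + lr * bi * y for s, bi in zip(strengths, branch_input)]


x = [1.0, 0.0, 1.0, 1.0]   # activity arriving at four synapses
branch_of = [0, 0, 1, 1]   # synapses 0-1 on branch 0, synapses 2-3 on branch 1

w = synaptic_hebbian_step([0.5] * 4, x)       # four adjustable values
d = dendritic_step([0.5] * 2, branch_of, x)   # only two adjustable values
print(len(w), len(d))
```

In this sketch the dendritic version adapts far fewer parameters for the same inputs, which is one way to picture why learning on the branches could be a different kind of process from learning at each synapse.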

AI modeled on how we thought neurons operate will still work. But, according to the researchers, that is not how neurons actually work. They hope that their finding “paves the way for an efficient biologically inspired new type of AI hardware and algorithms.”

Incidentally, they also note, “The brain’s clock is a billion times slower than existing parallel GPUs, but with comparable success rates in many perceptual tasks.” That suggests that there is a lot more we don’t know.

The paper is open access.

You may also wish to read: Our neurons’ electrical synapses are the dark matter of the brain. These aren’t the familiar chemical synapses but a second set, the electrical synapses that enable currents to travel directly between neurons from pore to pore. A recent study in fruit flies shows that without the little-understood electrical synapses, neurons’ reaction is much weaker and some of them become unstable.