Chicken Little AI Dystopians: Is the Sky Really Falling?

Futurist claims about human-destroying superintelligence are uninformed and irresponsible

The article “How an Artificial Superintelligence Might Actually Destroy Humanity” is one of the most irresponsible pieces about AI I have read in the last five years. The author, transhumanist George Dvorsky, builds his argument on a foundation of easily popped balloons.

AI is and will remain a tool. Computers can crunch numbers faster than you or me. Alexa saves a lot of time looking up results on the web or playing a selected tune from Spotify. A car – even a bicycle – can go a lot faster than I can run.

AI is a tool like fire or electricity used to enhance human performance and improve lifestyles. Like fire and electricity, AI can be used for evil or can have overlooked consequences. But any unforeseen AI action will not be due to any resident intelligence or intent originating from within the AI.

Using quotes from Dvorsky’s article, let me (again) point out some areas where AI will never perform at the level of a human.

Dvorsky says, “I take a lot of flak for this, but the prospect of human civilization getting extinguished by its own tools is not to be ignored.” If by “tools” Dvorsky means AI, there’s good reason for the flak. Arguing for a flat earth would also draw a lot of flak.

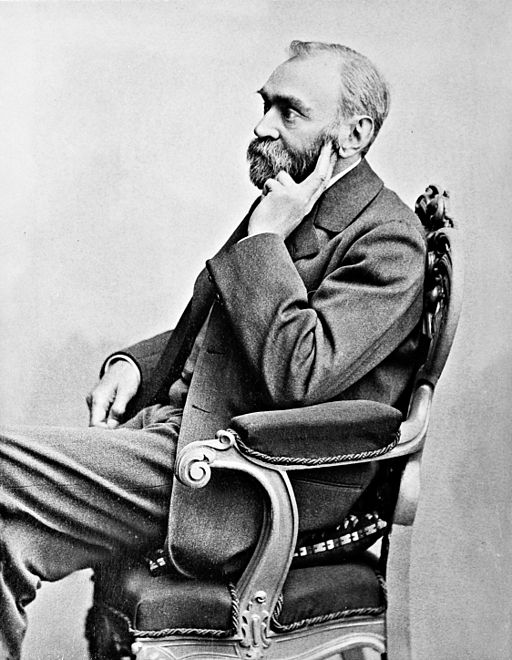

Alfred Nobel thought his invention of dynamite would forever tar his legacy. As one newspaper put it, Nobel would be remembered as “the merchant of death.” Nobel’s guilt was a factor in his founding of the Nobel Prize. Dynamite remains dangerous, but its danger has been mitigated. The dangers of AI can likewise be mitigated, and Dvorsky need not worry about the prospect of AI destroying the world.

Chicken Little AI preaching also brings to mind Thomas Edison’s campaign against Nikola Tesla’s alternating current (AC) electricity. To prove the danger of AC, Edison sponsored the electrocution of animals at county fairs. Most famous was the electrocution of Topsy the elephant at Coney Island in 1903. Edison wanted to make the case that AC was among the most dangerous things in the world.

Edison, of course, was wrong. Today we use AC every time we plug something into a wall socket. Electricity remains dangerous, but stick a fork in an AC outlet and a breaker will trip. Safeguards against the dangers of electricity have been developed. Like fire alarms that guard against fire, these safeguards are effective but not perfect. The same is, and will be, true of safeguards against the unintended consequences of AI (1). In legal parlance, a potentially harmful AI system should be made safe “beyond a reasonable doubt.” Some AI, like Alexa, need not meet this threshold. That’s okay. Alexa playing a song you didn’t ask for can be annoying, but it is never life-threatening.

Let’s return to Dvorsky’s article. He finds it ridiculous that some think “superintelligence itself is impossible.” The author is astonishingly wrong in thinking computers can equal and surpass humans in mental performance. He seems unaware of the limitations of AI argued mathematically by Nobel laureate Roger Penrose decades ago in “The Emperor’s New Mind.” Or of John Searle’s Chinese room argument, which convincingly demonstrates that computers, and thus AI, will never understand what they do. Or of Selmer Bringsjord’s Lovelace test, which a computer must pass to demonstrate creativity. No AI executed on computers has yet passed the Lovelace test, and none ever will. AI can only follow algorithms. Humans possess numerous non-algorithmic properties that no computer can ever learn. Computer programs cannot be written to perform non-algorithmic tasks such as understanding, sentience, experiencing qualia or creativity. Human exceptionalism in such matters remains unassailable.

Dvorsky goes on to say, “Imagine systems, whether biological or artificial, with levels of intelligence equal to or far greater than human intelligence.” Before doing so, one must first imagine that the author knows what he is talking about. That requires imagining a fantasy. An argument can be built on imagining unicorns registering to vote in Florida. But the tide of reality washes away all such imaginings etched in beach sand. Speculative futurists should build their houses on solid foundations.

Like bubblegum left on the bedpost overnight, old dethroned AI speculations lose their flavor with age. Dvorsky points to the dangers of weaponized autonomous AI drones depicted in the slickly produced and frightening short film Slaughterbots. He is apparently unaware of AI weapons specialist Paul Scharre’s masterful rebuttal of the Slaughterbots claims, or of my unpacking of the topic in the monograph The Case For Killer Robots. He should also check out the reality of the US Army’s swarm defense efforts and the US Air Force’s Golden Horde program for drone swarm weapons and countermeasures. Failure to research background is a major contributor to today’s glut of fake news.

Futurists like Dvorsky are not unlike the Biblical prophets of old. If an Old Testament prophet prophesied incorrectly, he could be stoned to death. One brilliant futurist, George Gilder, long ago predicted the eventual obsolescence of broadcast-model television and the invention and widespread use of today’s cell phone. Concerning Gilder, drop your stones. Gilder’s most recent monograph, Gaming AI: Why AI Can’t Think but Can Transform Jobs, shows that AI has an impenetrable concrete ceiling far below human performance. A good read, the book throws another bucket of ice water on Dvorsky’s dystopian arguments.

Futurists often write provocative posts that get a lot of clicks. But like the comfort of warm coffee spilled in your lap on a cold day, the appeal can only be temporary. Time will reveal the truth. For Dvorsky, the spilled coffee is already well chilled.

(1) A recent paper of mine with Justin Bui is a contribution towards mitigating unintended consequences in neural network performance.