The Myth of “No Code” Software (Part III)

The complexities of human language present problems for natural language programming

Many visions of the future include humans programming through “natural language”, where humans merely state what they want and computers “figure out” how to write code that does what is requested. While many demos have led people to believe that this will be possible, the idea has so many problems that it is hard to know where to begin.

Let’s begin with the successes of natural language programming.

Wolfram|Alpha is probably the best-known natural language programming system. You type in a command in natural English, and Wolfram|Alpha converts that command into native Mathematica code and runs it. In 2010, Stephen Wolfram announced that Wolfram|Alpha signified that natural language programming really was going to work. However, as time wore on, his tune changed. Despite having built the closest thing to a natural language programming system so far, Wolfram had decided by 2019 that, whether or not natural language programming was possible (he and I may or may not still disagree here), it was not actually practical!

The latest example of natural language programming success, however, has been the demos of Debuild.co. These demos show a programmer simply describing what they want to see, and the system automatically writing code for it. It’s hard to comment too much on this because Debuild has not released anything to the public, only videos. However, even in the videos, the system is not doing anything especially sophisticated. As is usual with demos, it has some eye-catching content (“make a button that looks like a watermelon” and “make a button with the color of Donald Trump’s hair”). But in the end, it really isn’t doing anything useful. Additionally, it has been almost a year since the demo and nothing further has been shown, which raises the possibility that even what appears in the demo is highly cherry-picked.

So why the skepticism about natural language programming?

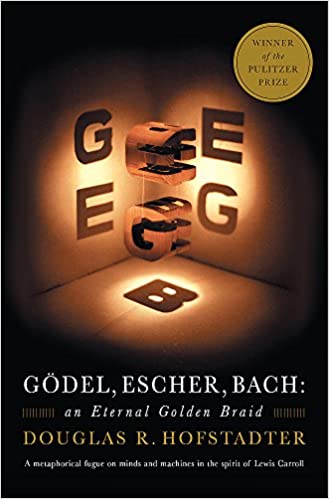

The first problem is the ambiguity of human language combined with the unambiguous nature of code. Human language is ambiguous; code cannot be. Therefore, even assuming all the other issues were solved, you would still spend all day answering the AI’s questions in order to disambiguate what you asked it to do. That would erase much of the productivity gain that natural language programming is supposed to deliver. As Douglas Hofstadter put it in Gödel, Escher, Bach:

> If the computer is to be reliable, then it is necessary that it should understand, without the slightest chance of ambiguity, what it is supposed to do. It is also necessary that it should do neither more nor less than it is explicitly instructed to do. If there is, in the cushion underneath the programmer, a program whose purpose is to “guess” what the programmer wants or means, then it is quite conceivable that the programmer could try to communicate his task and be totally misunderstood. So it is important that the high-level program, while comfortable for the human, still should be unambiguous and precise.
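To make the ambiguity concrete, here is a minimal Python sketch. The city names and figures are invented purely for illustration, and the `largest` helper is hypothetical. The point is only that a single English word such as “largest” has to be pinned down to one precise property before any code can run, and that different choices give different answers.

```python
# Toy data, invented purely for illustration (not real cities or figures).
CITIES = [
    {"name": "Alpha", "population": 900_000, "area_km2": 300},
    {"name": "Beta", "population": 400_000, "area_km2": 1_200},
    {"name": "Gamma", "population": 650_000, "area_km2": 800},
    {"name": "Delta", "population": 100_000, "area_km2": 950},
]


def largest(cities, key, n):
    """Return the names of the n cities with the greatest value for `key`."""
    ranked = sorted(cities, key=lambda city: city[key], reverse=True)
    return [city["name"] for city in ranked[:n]]


# "The two largest cities" has two equally reasonable precise readings.
by_population = largest(CITIES, "population", 2)
by_area = largest(CITIES, "area_km2", 2)

print("By population:", by_population)
print("By area:", by_area)
```

With this toy data the two readings share no cities at all, so a system that silently picks one reading could return an answer the user never intended.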

Now, a way around this is to do what Wolfram|Alpha does: guess, but tell you what the guesses are. Wolfram|Alpha often does guess what you mean, but it then shows you what your words look like after they have been translated into code, including any guesses needed to disambiguate your meaning. If I ask Wolfram|Alpha, “How big is Tulsa?”, it first states, “Assuming ‘how big’ is referring to cities”, and then it states how the input is interpreted. In my case, it is doing a lookup for “Tulsa, Oklahoma” and then accessing the property “area”. In the Wolfram Language, that is:

Entity["City", {"Tulsa", "Oklahoma", "UnitedStates"}][EntityProperty["City", "Area"]]

If I ask, “What are the largest three cities in the United States?”, it first tells me that it is assuming that I’m talking about population size, and then translates my query into the following Wolfram Language code:

EntityClass["City", {EntityProperty["City", "Country"] -> Entity["Country", "UnitedStates"], EntityProperty["City", "Population"] -> TakeLargest[3]}]

Additionally, Wolfram|Alpha has a graphical representation of the code that makes it easier to understand.

So, Wolfram|Alpha has largely solved the language ambiguity problem by splitting the difference. It will make assumptions for you, but then it will tell you what those assumptions are. It will generate code for you, but then present you with the generated code so you can validate that it understood what you meant. Note that, while this makes code generation easier, it actually doesn’t replace programming, as programming skills would still be needed to validate that the generated code matched the intention.
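The “guess, then report the guess” pattern can be sketched in a few lines of Python. This is not how Wolfram|Alpha is implemented; the `DEFAULT_MEANINGS` table, the `Interpretation` class, and the `interpret` function are all hypothetical names chosen for illustration, and the phrase matching is deliberately naive.

```python
from dataclasses import dataclass, field

# Hypothetical defaults: the precise property each vague phrase is assumed to mean.
DEFAULT_MEANINGS = {
    "how big": "area",
    "largest": "population",
}


@dataclass
class Interpretation:
    query: str
    properties: list = field(default_factory=list)
    assumptions: list = field(default_factory=list)


def interpret(query: str) -> Interpretation:
    """Map vague phrases to precise properties and record every guess made."""
    result = Interpretation(query=query)
    lowered = query.lower()
    for phrase, prop in DEFAULT_MEANINGS.items():
        if phrase in lowered:
            result.properties.append(prop)
            result.assumptions.append(f"Assuming '{phrase}' refers to {prop}")
    return result


tulsa = interpret("How big is Tulsa?")
for note in tulsa.assumptions:
    print(note)
```

The design choice that matters is that the assumptions travel with the result, so the person asking can check them. Someone still has to read the reported interpretation and judge whether it matches the intent, which is exactly the skill the tool was supposed to make unnecessary.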

The second problem is that human languages are not optimized for highly technical descriptions. This problem, as mentioned above, was noted by Stephen Wolfram himself. He said that mathematics started out with natural language descriptions, and that major advances became possible only once those descriptions were turned into a formal system. Why? Because natural language is clunky when applied to highly technical domains. It once took hundreds of words to describe what we can now put into a small equation, which made the ideas hard to work with. Likewise, while we could describe what programs should do in clunky human terms, putting the description in terms the computer understands is actually much more efficient.

Interestingly, the programming languages that have aimed at being very easy for humans to understand (AppleScript, BASIC, and COBOL) are among the most derided programming languages, precisely because they are so inefficient to work in.

In any case, the entire reason people wanted natural language programming is that they didn’t want to be so specific in the first place. But as Hofstadter noted, being specific is required to prevent mistakes. Therefore, it looks like the goals and constraints of natural language programming are directly at odds with each other.

The final consideration is a bit more technical. When someone talks about natural language programming, what they want is to tell the computer the goal and have the computer figure out how to get there. Take, for instance, the command, “Drive along the fastest path to New York.” You aren’t telling the computer what to do; you are asking it to figure out what to do. The problem is that, even if the goal is unambiguously specified, there are limits to what computation can do. Humans are limited, too, but not in the same way. Therefore, leaving it to a computer to determine how something will be done removes a whole set of potential solutions that a human helper might have found.

All this isn’t to say that there will be no human-language-based programming in the future. On the contrary, we have given several examples of how it is working now. We will probably continue to see advances in how programs are written, in how they are displayed to programmers and non-programmers, and in methods that let non-programmers write simple functions. However, there is no reason to think that “natural language programming” will become the standard programming paradigm of the future, or that it is even possible for general-purpose programming.