Why AI Geniuses Think They Can Create True Thinking Machines

Early on, it seemed like a string of unbroken successes. In George Gilder’s telling, the story goes back to Bletchley Park, where British codebreakers broke the “unbreakable” Nazi ciphers. In Gaming AI, the tech philosopher and futurist traces the modern concept of a machine that really thinks for itself back to its earliest known beginnings. His concise book, free for download, also explains why the programmers were bound to fail in their quest for the supermachine. But let’s start with why they thought (and many today still think) that it could work.

Success emboldened the pioneers to dream of a final AI triumph

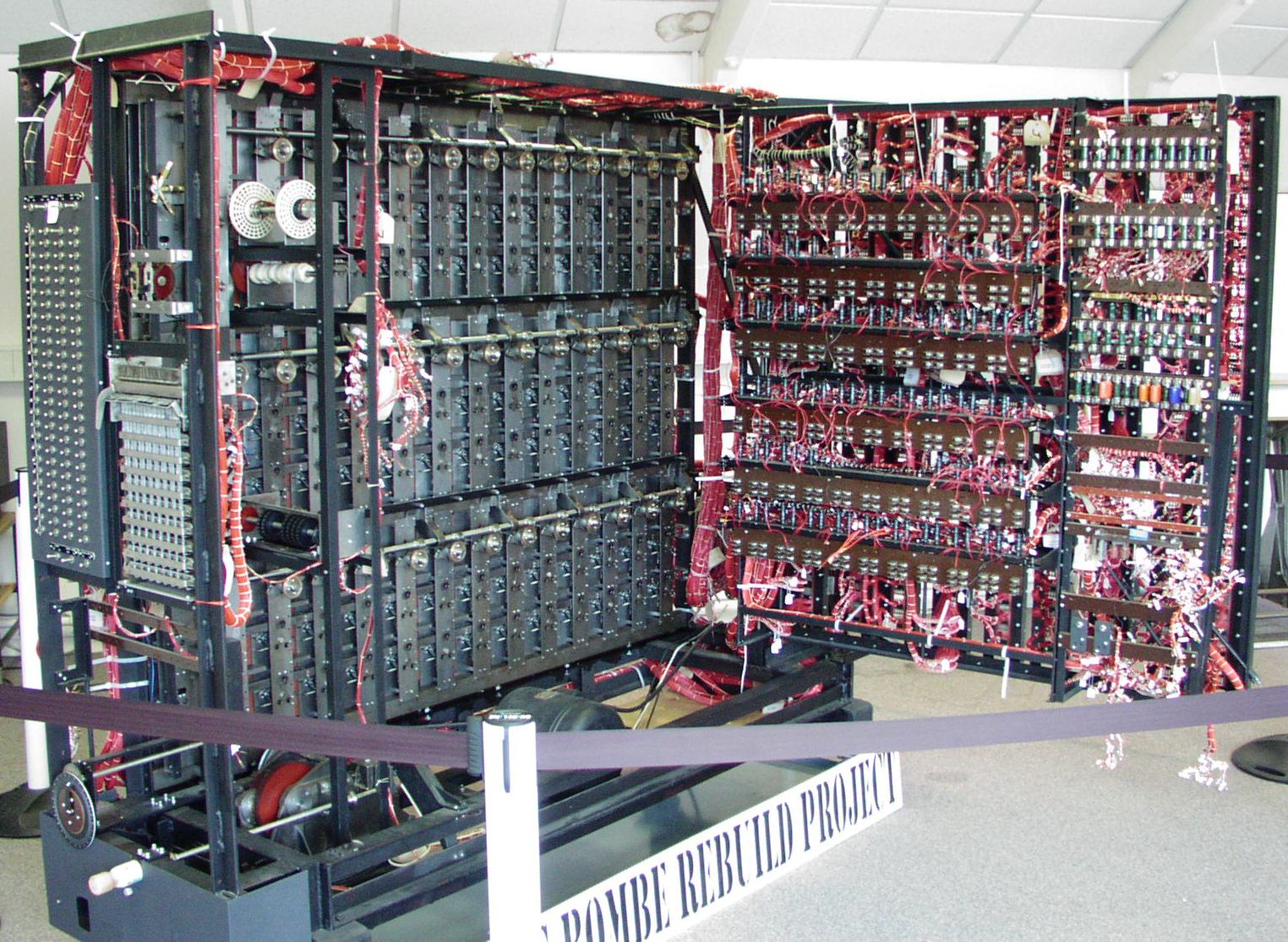

They had every reason to be emboldened by success. Special electromechanical machines called “bombes,” created by Alan Turing’s team, broke every version of the famous Enigma code used by the Nazis in World War II, far surpassing human effort. (Pictured: a rebuilt bombe, seen from the rear; courtesy Tom Yates, CC 3.0.)

Later, Bletchley Park put Colossus to work: a computer, designed by engineer Tommy Flowers, that used over a thousand vacuum tubes to crack the German High Command’s internal communications “almost instantly.” Gilder suggests that such efforts may have been more important in turning the tide of the war than the atom bomb (the Manhattan Project).

They understood the risks, as well as the benefits, of the final machine

In 1965, a Turing associate, I. J. (“Jack”) Good (1916–2009), captured the vision, the benefits, and the risks of the ultimate “thinking machine”—all in one paragraph:

Let an ultra-intelligent machine be defined as a machine that can far surpass all the intellectual activities of any man however clever. Since the design of machines is one of those intellectual activities, an ultra-intelligent machine could design even better machines. There would unquestionably be an “intelligence explosion” and the intelligence of man would be left far behind. Thus the first ultra-intelligent machine is the last invention that man need ever make, provided that it is docile enough to tell us how to keep it under control.

– Irving John Good, “Speculations Concerning the First Ultraintelligent Machine,” Advances in Computers, vol. 6 (New York: Academic Press, 1965), quoted in Gilder, Gaming AI, 33

So there it all is, back in 1965: first, a machine that can far surpass all human intellectual activities; then, that machine designing even smarter machines for itself; and, perhaps, its escape from human control.

Alan Turing and Claude Shannon also thought that a machine could be built that would act like a human brain (p. 19). Since then, Elon Musk, Stephen Hawking, and Max Tegmark have warned of the dangers of such a machine going rogue. Tegmark, in particular, has warned memorably: “When humans came along, after all, the next-cleverest primate had a hard time. Subdued were virtually all animals; the lucky ones becoming pets, the unlucky… lunch.”

It is simply assumed that machines and brains have much in common, just as John von Neumann (1903–1957) supposed in his posthumously published 1958 book, The Computer and the Brain. If so, what could be more reasonable than the idea that a huge computer will outperform a small one?

The believers today are many, and some of them write books, for example Yuval Noah Harari (Homo Deus) and Ray Kurzweil (How to Create a Mind), according to which computers will outstrip us by 2041.

Applying those principles has worked wondrously well

Why shouldn’t the computer scientists believe it? Silicon Valley’s AI triumphed at chess, Go, StarCraft II, and poker. Then AlphaGo Zero beat AlphaGo 100–0 at Go; by that point, it was all machine vs. machine. A related system, AlphaFold, also predicts protein structures (useful in medicine) far more quickly than human competitors can.

Rapid technological development—Moore’s Law, fiber optics, RISCs (reduced instruction set computers) and now quantum computers—certainly helped shape the computer techie’s worldview.

And, in Gilder’s view, AI has now become something of a religion

It is not a very freedom-oriented religion. Gilder points us to Kevin Kelly of Wired magazine, who “projects the existing internet into a cornucopian future of four-dimensional artificial intelligence”:

Humans become part of a single global agency. Kelly at various points dubs this global agency the “One Machine,” “the Unity,” or “the Organism.” The new web is its operating system in virtual reality. As Kelly puts it, “The One is Us.”

– George Gilder, Gaming AI, 33

The One is Us? China’s total surveillance system is just the beginning, apparently.

Everything seems to be in place for the final triumph, the Rapture of the Nerds or the “eschaton,” as Gilder calls it.

But wait. Any scheme for world domination could succeed or fail. And any religion could be true or false. Gilder calls the AI cult the “materialist superstition” of computer science (p. 19). Why? Neuroscientists and computer theorists alike insist that there is mounting evidence for the brain as a mere “meat machine.” Could they be wrong?

How they could indeed be wrong

There is a hidden weakness in the assumption that every type of mental process is a form of computation. If any mental process is not a form of computation, no computer can do it.

Even though computer theorists see the human brain (assumed to be the source of consciousness) as a computer, it doesn’t resemble one in its operations.

For example, the brain is a billion times slower than a gigahertz computer. But it is also a billion times more energy efficient. Gilder explains: “When a supercomputer defeats a man in a game of chess or Go, the man is using twelve to fourteen watts of power, while the computer and its networks are tapping into the gigawatt clouds of Google data centers around the globe” (p. 24). And yet, “One human brain commands roughly as many connections as the entire internet” (p. 35).

Something isn’t right here. Is the human brain doing something different? Something that’s not like a computer?

To rule the world, the ultimate computer must be an all-purpose problem-solver, “a general-purpose machine” with “the capability of judgment” (p. 20). But general-purpose judgment surely requires consciousness, and we don’t know exactly what consciousness even is. Oh, there are plenty of disputed theories of consciousness, but we can’t make a mass of disputed theories into a computer program.

But from here on it gets worse. An underlying problem Gilder notes is that “Whether in the nervous system of a worm, in the weave of hardware and software of a computer, or in the ganglia, glia, synapses, dendrites and other wetware of a human brain, once you map all the links you still do not understand the messages or their meaning. Knowing the location and condition of every molecule in a computer will not reveal its contents or function unless you know the ‘source code.’” (p. 35)

Why is that a problem? Gilder asks us to consider Go as an example. Only the players know what the positions of the black and white stones mean: “To you as an individual human player, it affords free will and choice. Yet in sheerly physical terms, you are just deploying patterns of smooth small white and black stones. All the symbolic freight of the saga of the game depends upon your role as interpreter of the meaning of the stones. With no interpretant, there is no strategy and no symbolic meaning. There is no winning or losing. There is no game.” (p. 36)

True, AlphaGo was programmed to use its massive computing power to calculate a winning strategy, and it did. But it did not know that it was playing a game. And no one knows how to make it know that it is playing a game. AlphaGo Zero didn’t know either. Gilder concludes, “The game of Go, touted as the launching point for AI’s conquering of the human mind, is in fact an inexorable symbol of the futility of AI as an ultimate model of intelligence in the universe.” (p. 36)

The current outcome of all that ambition among so many undoubted geniuses is worth unpacking in more detail—which we will do shortly.

Next: Why AI geniuses haven’t created true thinking machines. The problems have been hinting at themselves all along.

Note: George Gilder is also the author of Life after Google: The Fall of Big Data and the Rise of the Blockchain Economy (2018)
