The Mind Can’t Be Just a Computer

Gödel demonstrated that fact and Turing tried to live with it

Kurt Gödel (1906–1978) proved that there are truths expressible in a system of mathematical logic that cannot be proved within that system. An Austrian-born friend of Albert Einstein, Gödel has stirred up debate for decades with his odd, ingenious result. He inspired mathematical platonists like physicist Roger Penrose, Rouse Ball Professor of Mathematics at Oxford University, to base their philosophical arguments against mechanism, or, if you will, computationalism (the idea that all thinking is at root computer programs), on that result.

First, let’s get this straight: After Gödel, computationalism just can’t be correct, on what I’ll call the Strong Gödel Thesis. Any literal reading of Gödel’s result licenses, really forces, anti-computationalist conclusions. Thus, it is no stretch to say that it forces anti-Artificial General Intelligence (AGI) conclusions, in today’s parlance.

Why? Gödel proved that the idea of provability in a purely formal system can’t capture all the truths expressible in that system, using its own rules and proof procedures. The concepts of “Proof” and “Truth” pull apart; they’re different ideas. In other words, from the one, you can’t say everything about the other. Incompleteness holds no matter how complicated a consistent formal (computational) system one considers. It’s general, in other words, or we can say scalable: it applies to everything from simple systems that include the arithmetic of the counting numbers (one, two, three, and so on) to systems fantastically complex, ad infinitum. That is what makes incompleteness so powerful.
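For readers who want the gap between proof and truth stated a little more formally, here is one standard way to write it down. This is a sketch under stated assumptions, not Gödel’s or Penrose’s own notation: the symbols T (the formal system), G_T (its Gödel sentence), and Prov_T (its provability predicate) are illustrative shorthand, and T is assumed to be consistent, mechanically axiomatized, and to contain basic arithmetic.

```latex
% Requires amsmath and amssymb (for \nvdash, \ulcorner, \mathbb).
% Assumption: T is a consistent, effectively axiomatized theory
% containing basic arithmetic. G_T and Prov_T are illustrative names.
\begin{align*}
  % 1. G_T asserts, in T's own language, that G_T is not provable in T.
  T &\vdash G_T \leftrightarrow \neg\,\mathrm{Prov}_T(\ulcorner G_T \urcorner) \\
  % 2. Proof falls short: a consistent T cannot prove G_T.
  T &\nvdash G_T \\
  % 3. Truth outruns proof: G_T still holds of the natural numbers.
  \mathbb{N} &\models G_T
\end{align*}
```

Lines 2 and 3 together are the sense in which “Proof” and “Truth” pull apart. The companion fact, that T cannot prove the negation of G_T either, needed a slightly stronger assumption (ω-consistency) in Gödel’s original argument, which Rosser later reduced to plain consistency by using a modified sentence.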

The question for computationalists and their critics is: what does Gödel’s strange proof say about systems that are supposed to undergird the human mind itself? It’s fantastically complex, sure. But Gödel’s result assures us that it, too, is subject to incompleteness. This leaves the mechanist in a bind: if in fact, the system for the human mind is subject to incompleteness, it follows that there is some perfectly formal and valid statement in mathematical logic that is completely impervious to all attempts at proving it. But if we are computers, this means that our insights into mathematics must stop at this statement. We are blind to it because as computers ourselves, we must use only our proof tools, with no access to our “truth” tools. Strange. As believers in computationalism, we would be ever so strangely incapable of doing our jobs as mathematicians.

Some statement — call it “G” in keeping with Penrose’s convention — is true but not provable for our own minds. We can’t prove it. But as mathematicians, we should still be able to see that it’s true. Truth, in other words, ought still to be available to the human mind, even as the tools of a strict logic are inadequate. That’s mathematical insight, like the kind Gödel himself most surely used to prove Incompleteness. Ergo, we must not be completely computational at root. The mind must have some powers of perception or insight outside the scope of purely formal methods.

Critics have dismissed the Strong Gödel Thesis on the grounds that any statement G undergirding human thinking would be so fantastically complex that it could not be understood as true or false. Douglas Hofstadter, for instance, wrote in his Pulitzer Prize-winning Gödel, Escher, Bach: An Eternal Golden Braid (1979) that the Gödel “game” is really just one-upmanship. As computational systems become more and more complex, the human mind becomes more and more flummoxed. At the point where we’ve reproduced all of the mind as a computer program, “G” is just too darn complicated for mathematicians to see its truth, while also realizing it can’t be proved. This nullifies the strong thesis.

Other criticisms stem from observations that the brain is constantly in flux and so we can never really construct a stable Gödel statement to get the incompleteness result for our own minds. Penrose anticipated this objection, and so asked his readers to consider only the part of human thought that is directly responsible for mathematical thinking. Whatever algorithms undergird our ability to think through proofs in mathematics are all that is required. And, indeed, by the computationalists’ own admission, there must be some level of abstraction in the human mind that we can call thought. Certainly, at the level of neurons and biology, all is flux. We can hardly say the same about our ability to work through complicated mathematical proofs. Whatever this capability is, it’s clearly stable (to the computationalist, it’s programs).

Perhaps. But a weaker thesis, still inspired by Gödel’s groundbreaking result, really provides evidentiary support for the common sense conclusion that our insights, discoveries, and sheer guesses aren’t disguised programs. On a Weak Gödel Thesis, we see that the philosophical or metaphysical claim that the human mind is a computer accounts poorly for obvious observations about thinking. Insight becomes programmed. But it is the very nature of the mind to sit outside such determinism.

Such an observation is not mere philosophizing. Seeing that something is true in spite of some prior set of rules, or prior observations, is the hallmark of discovery. Certainly, Copernicus (1473–1543) can be credited with seeing a truth buried and “unprovable” in a system, realizing in the 16th century that Earth revolves around the sun and not vice versa. That truth was not provable using the available system. In this case, it required ignoring hundreds of years of astronomical observations and an entire system, from the ancient Greek astronomer Ptolemy, about the nature of the heavens. We can likewise attribute some insight into truth to his later successor Johannes Kepler (1571–1630), who intuited somehow the elliptical orbits of planets. (There are many other possible geometric shapes, all simple and thus able to satisfy Occam’s Razor, yet wrong.) If Copernicus and Kepler were computer programs, they certainly had powers of insight that computationalists must uncomfortably attribute to complicated programming. That’s a neat trick.

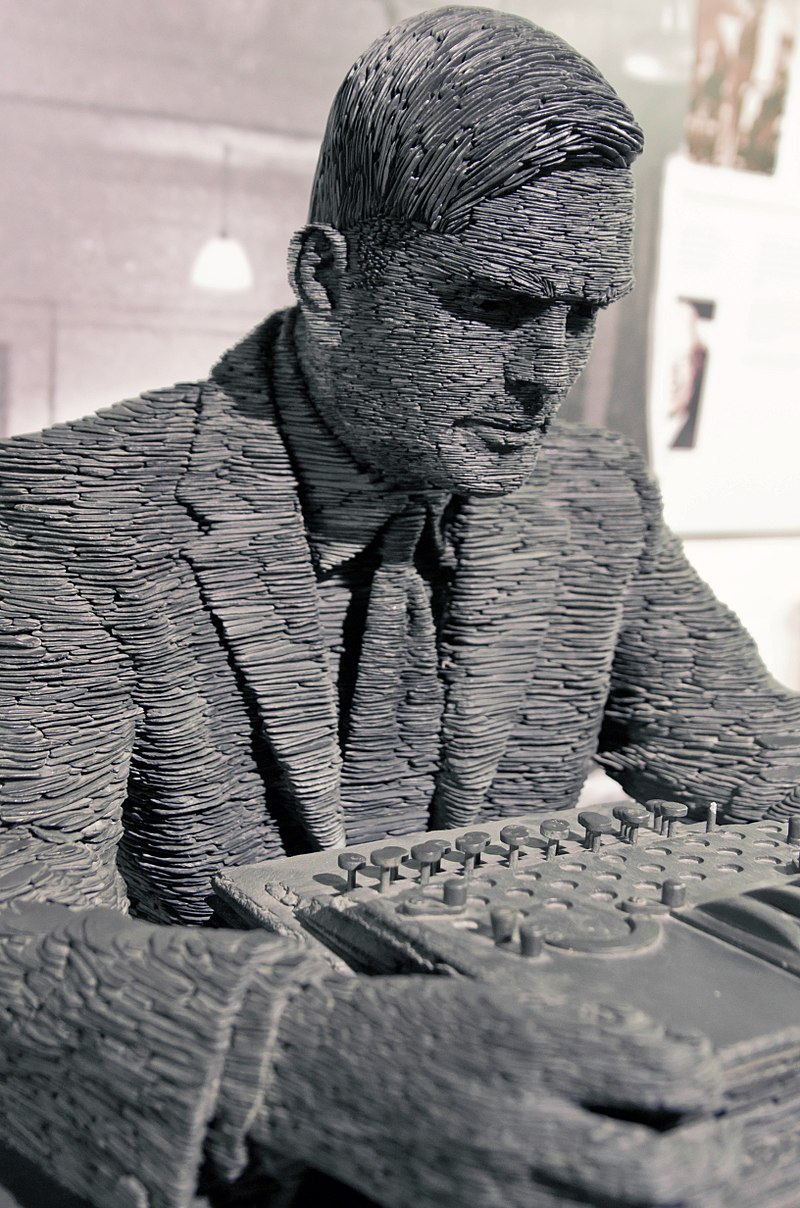

The great pioneer of AI, British mathematician Alan Turing (1912–1954), seems to have believed in something like the Weak Gödel Thesis in his earlier, theoretical work on computation, notably in his own Ph.D. thesis published in the late 1930s. That was before the advent of digital computers and before his own writing about the possibility of artificial intelligence. The latter came later, in 1950, when Turing gave philosophers and scientists the Turing test. He wrested the fledgling field of AI, officially inaugurated in 1956 at the Dartmouth Conference (where the term “AI” likely originated), from metaphysical speculations about interior consciousness in machines. (Machine consciousness, in spite of Turing’s efforts, is still part and parcel of futurism about AI.)

Antoine Taveneau, CC BY-SA 3.0

In 1936 and 1938, though, Turing thought that ingenuity, understood as solving problems with formal rules by applying proof theory in mathematical logic, is a different thing from insight, understood as seeing from outside a formal system which problems to work on in the first place: that is, what’s fruitful, and therefore probably true. This is very likely what motivated the genius Kurt Gödel to find the truth of incompleteness, and it is also a very clear statement of the value of the Weak Gödel Thesis. All cannot be machines, we might say, for then there is nowhere for insight about truth to sit, looking in.

It is indeed a strange quirk in intellectual history that Turing seems to have flip-flopped on this issue, almost politician-like, yet no one seems to have noticed. Gödel, for his part, remained cagey about the strong version of his result, noting only that a disjunction must, therefore, be true: we are either inconsistent machines or minds. Gödel must surely have jested here because inconsistency in the mathematical sense means that anything can be proven, making human thinking worthless — moons might be made of cheese then. Gödel seems to have stopped short of believing in his own Platonism, or at least proving that it must be true. But the evidence suggests strongly that viewing the mind as a big computer is probably wrong.

Science, at any rate, is something we do, looking in from the outside as it were. Whether we are ultimately caught up inside the very systems we devise to describe and explain is a philosophical question. It seems to me anyway, and indeed to the Turing of the 1930s, and most assuredly to Kurt Gödel the Platonist, that we sit outside the systems we make, the webs we weave so to speak, always on the precipice of the discovery of new truths. That’s a belief that makes sense of evidence, to be sure. It has the additional salutary consequence of making sense of our own possibilities and future.

See also: Human intelligence as a halting oracle (Eric Holloway) and Things exist that are unknowable (Robert J. Marks)