Could AI think like a human, given infinite resources?

Given that the human mind is a halting oracle, the answer is no

Earlier this year, I published a three-part exchange with Querius (linked below), who is looking for answers as to whether computers can someday think like people. He contacted me again recently and once again kindly gave me permission to publish our discussion:

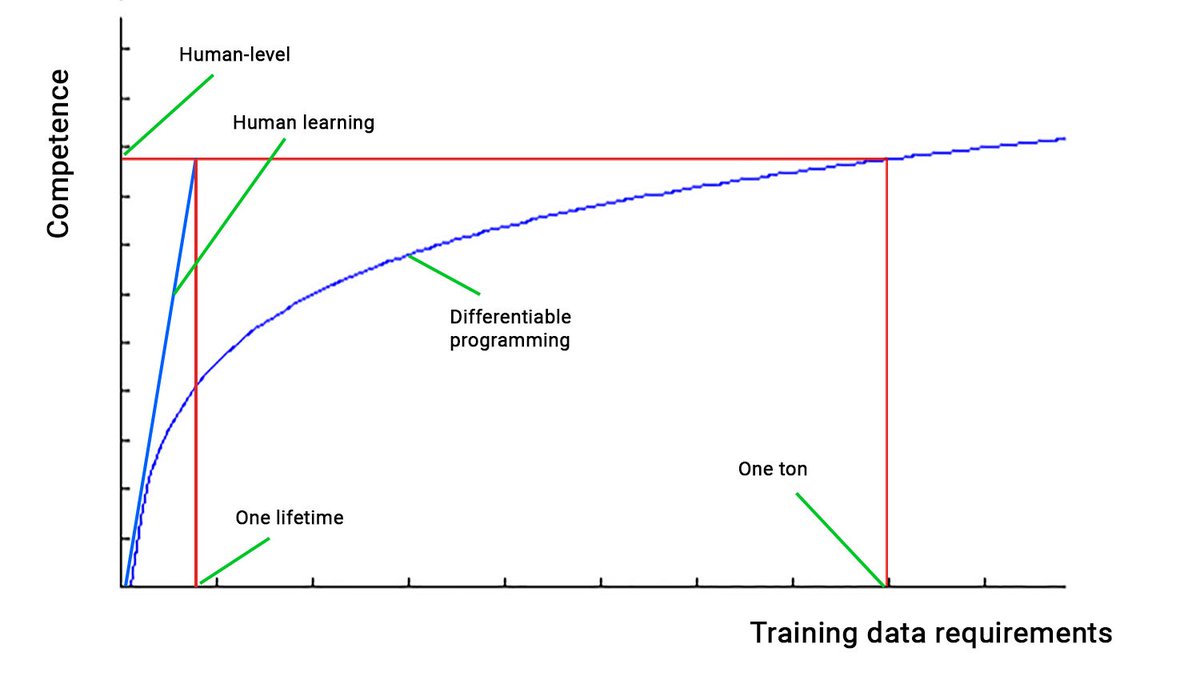

What do you think of this post by François Chollet?: “Given infinite data, you can solve arbitrarily complex problems with a ‘dumb’ model (such as a densely-connected neural network). However, when it comes to difficult real-world problems like self-driving, ‘lots of data’ is very different from ‘infinite data.’ The long tail is fat.” – Querius

And I want to extend a question. I liken AI to tinker toys. Behaviors are supposedly within the scope of whatever ML program/architecture we devise. Even given an infinite amount of data, perhaps we may never have an intelligent machine. But do you believe that, given an infinite amount of data, storage, and processing power, an AI can “virtually” know how to navigate every scenario in life as a human can? Why or why not? I’m trying to build an argument around the failures of data and of the ability to master tasks because of the data itself more than around the lack of data. – Querius

Great to hear from you again, Querius! Chollet makes a great point! More data gives diminishing returns. Based on VC dimension analysis of neural networks, you need exponentially more data to adequately train an incrementally larger neural network. I believe this is the same for gradient boosting and other ML techniques that build large, complex models.

Giving an off-the-cuff answer based on the argument that the mind is a halting oracle, I would say that the answer is no. We can arrive at this conclusion on a principled basis.

For example, let’s say that, when faced with a decision, the AI can run as long as it wants and use as much memory as it needs until it arrives at a conclusion that is at least as good as a human decision, and only then does it take an action. Further, it has a time-halting device that freezes the rest of the world until it makes a decision. It also has a bottomless knapsack of storage. So when we compare its performance to that of humans, we can ignore conventional issues like AI resource usage and runtime.

In this case, because the halting problem is undecidable even with unbounded resources, the AI still cannot perform like a human mind. That seems counterintuitive at first because all the halting programs will eventually halt, given enough time and resources. However, because the AI does not know ahead of time how many halting programs there are, it will never know when they have all stopped. Instead, we’ll end up with a permanently frozen world when the AI goes head to head with a human.
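The point about never knowing when all the halting programs have stopped can be illustrated with a toy sketch. It is only an illustration under stated assumptions, not a proof and not part of the original exchange: the "programs" here are hypothetical Python generators (`halts_after`, `runs_forever`), and `dovetail` is a made-up helper that steps each one in turn for a fixed number of rounds. At any finite budget, an observer sees only "halted so far" and "still running", and cannot tell a slow program from one that loops forever.

```python
def halts_after(n):
    """Hypothetical toy program: takes n steps, then halts."""
    for _ in range(n):
        yield


def runs_forever():
    """Hypothetical toy program: never halts."""
    while True:
        yield


def dovetail(programs, budget):
    """Step every program round-robin for at most `budget` rounds.

    Returns (halted, still_running) as sets of program names.
    """
    running = {name: make() for name, make in programs.items()}
    halted = set()
    for _ in range(budget):
        for name in list(running):
            try:
                next(running[name])
            except StopIteration:
                # This program has finished; record it and stop stepping it.
                halted.add(name)
                del running[name]
    return halted, set(running)


# Assumed example workload: two programs that halt and one that does not.
programs = {
    "quick": lambda: halts_after(3),
    "slow": lambda: halts_after(1000),
    "loop": runs_forever,
}

halted_early, running_early = dovetail(programs, budget=10)
halted_late, running_late = dovetail(programs, budget=2000)
```

With a budget of 10 rounds, both `slow` and `loop` are reported as still running, and nothing in that observation distinguishes them. Raising the budget to 2000 reveals that `slow` halts, but `loop` remains in the same ambiguous state at every finite budget, which is the situation the argument above describes.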

Bringing this back to Chollet’s point, I am going even further: I am saying that, even with infinite data, an AI cannot make the same quality inferences as a human can with finite data.

And these are great questions to think about!

See also: The flawed logic behind “thinking” computers (a three-part series):

Part I: A program that is intelligent must do more than reproduce human behavior

Part II: There is another way to prove a negative besides exhaustively enumerating the possibilities

and

Part III: No program can discover new mathematical truths outside the limits of its code.

Also: Software pioneer says general superhuman artificial intelligence is very unlikely. The concept, Chollet argues, shows a lack of understanding of the nature of intelligence