AI computer chips made simple

The artificial intelligence chips that run your computer are not especially difficult to understand

Increasingly, companies are integrating “AI chips” into their hardware products. What are these things, what do they do that is so special, and how are they being used?

What do computer processors do?

Computer processors take instructions and data, process them, and produce results. Chips are categorized by their “instruction set,” the list of instructions that they recognize and are able to process. These instructions include the ability to read data, write data, perform arithmetic, compare data, and change which instructions they will execute next based on the comparisons they have made. It’s simple stuff, but that is literally all a computer needs to do everything that it does. What makes computers valuable is that they can perform billions of these operations every second.
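To make that list of abilities concrete, here is a minimal sketch of a toy processor written in Python. The four instruction names (`SET`, `ADD`, `LESS`, `JUMP_IF`) are invented for illustration and do not correspond to any real instruction set; the point is only that writing data, arithmetic, comparison, and a conditional jump are enough to express a loop.

```python
def run(program, memory):
    """Execute a list of (opcode, operands...) tuples against a dict of named memory cells."""
    pc = 0        # program counter: index of the next instruction to execute
    flag = False  # result of the most recent comparison
    while pc < len(program):
        op, *args = program[pc]
        pc += 1
        if op == "SET":        # write a constant into a memory cell
            memory[args[0]] = args[1]
        elif op == "ADD":      # arithmetic: dest = dest + src (reads two cells, writes one)
            memory[args[0]] += memory[args[1]]
        elif op == "LESS":     # compare two cells and remember the answer
            flag = memory[args[0]] < memory[args[1]]
        elif op == "JUMP_IF":  # change which instruction runs next, based on the comparison
            if flag:
                pc = args[0]
        else:
            raise ValueError(f"unknown instruction: {op}")
    return memory


# Add up the integers 1 through 5 using only the four toy instructions.
program = [
    ("SET", "total", 0),     # 0
    ("SET", "i", 1),         # 1
    ("SET", "one", 1),       # 2
    ("SET", "limit", 6),     # 3
    ("ADD", "total", "i"),   # 4: total = total + i
    ("ADD", "i", "one"),     # 5: i = i + 1
    ("LESS", "i", "limit"),  # 6: is i still below the limit?
    ("JUMP_IF", 4),          # 7: if so, go back to instruction 4
]
result = run(program, {})
print(result["total"])
```

A real chip does the same kind of thing in hardware, with binary-encoded instructions rather than Python tuples.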

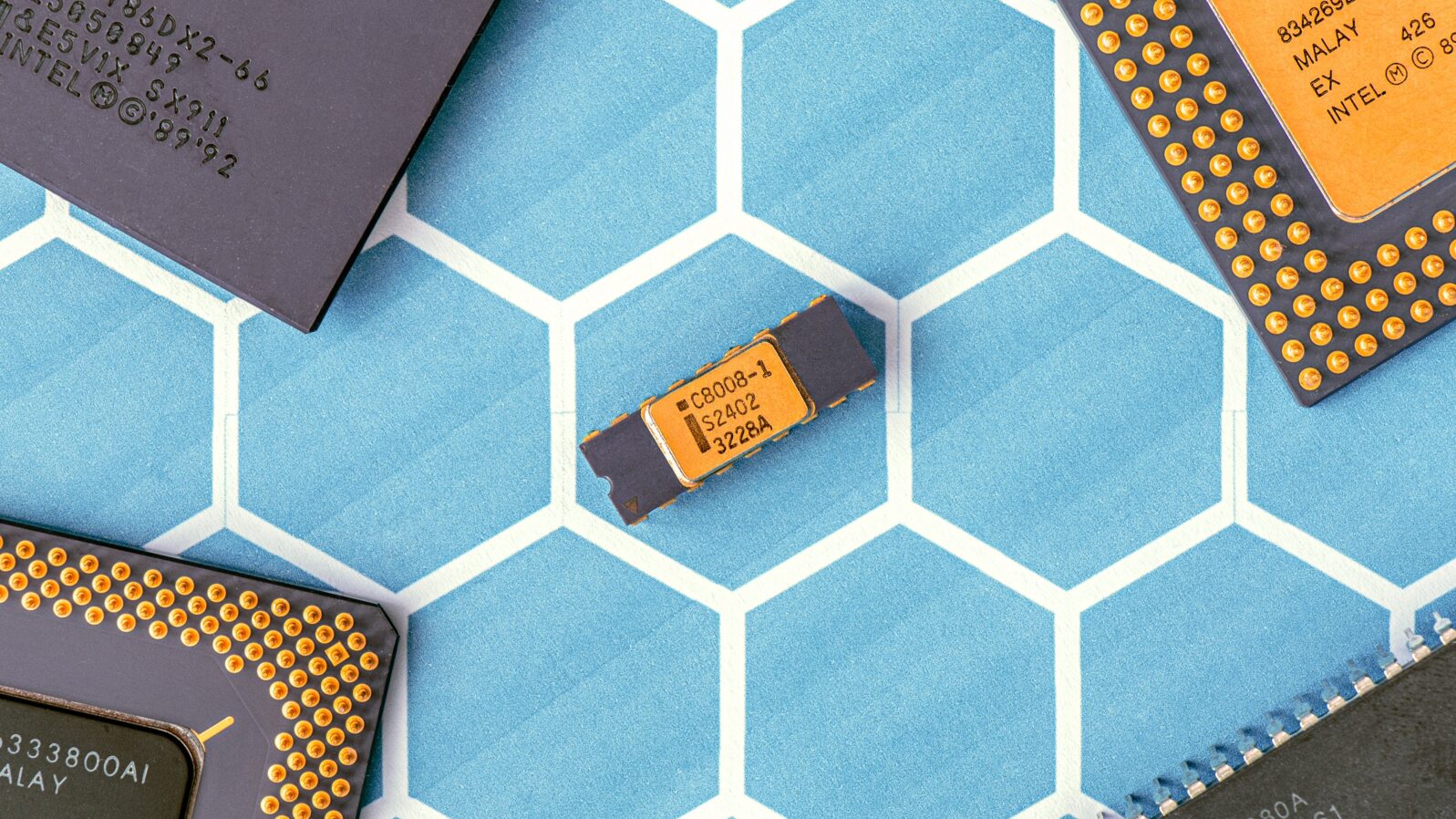

The most common instruction set used in computers today is the x86 instruction set, named after the first chip that used it, Intel’s 8086 CPU. If you are on a Mac, Linux, or Windows desktop, chances are you are using a processor built around the x86 instruction set.* Processors don’t differ significantly from each other except that some are optimized for high speed and some for low power consumption. Essentially, they all offer the same basic tradeoffs.

How can slow processors be sped up?

Sometimes, however, standard chips are just too slow for specialty applications. One example is calculations using numbers with decimals (called floating-point numbers in computer-speak). Most processors are fairly slow at floating-point arithmetic. On my own computer, multiplying floating-point numbers takes up to 50 times longer than multiplying integers.

The first specialty processors were math co-processors, which can boost the speed of math operations significantly. Pretty much every desktop computer has one today.

The next step in the evolution of specialty processors was vector processors. A vector processor can process more than one math operation at once. For instance, if I have four numbers, A, B, C, and D, and want to multiply them all by 4, I can do it with a single command in a vector processor. Such commands are known as SIMD instructions (“single instruction, multiple data”): each one applies the same operation (“single instruction”) to many different pieces of data (“multiple data”). Most modern desktop computers feature some vector processing ability.
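The A, B, C, D example can be sketched in a few lines of Python. This is a conceptual model only: plain Python does not issue real SIMD instructions, and the four-lane width (`LANES`) and the function names are assumptions chosen for illustration. What it shows is the bookkeeping difference, one instruction per value versus one instruction per group of values.

```python
LANES = 4  # hypothetical vector width: how many values one instruction handles


def scalar_multiply(values, factor):
    """Ordinary processor model: one multiply instruction per value."""
    results, instructions_issued = [], 0
    for v in values:
        results.append(v * factor)
        instructions_issued += 1
    return results, instructions_issued


def vector_multiply(values, factor):
    """Vector processor model: one multiply instruction per group of up to LANES values."""
    results, instructions_issued = [], 0
    for start in range(0, len(values), LANES):
        chunk = values[start:start + LANES]
        # In hardware, every lane in the chunk would be multiplied at the same time.
        results.extend(v * factor for v in chunk)
        instructions_issued += 1
    return results, instructions_issued


a, b, c, d = 1.5, 2.0, 3.25, 4.0
scalar_result, scalar_count = scalar_multiply([a, b, c, d], 4)
vector_result, vector_count = vector_multiply([a, b, c, d], 4)
print(scalar_count, vector_count)
```

Both functions produce the same answers; the vector version simply needs fewer instructions to get there.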

How do processors display graphics?

Other common specialty processors are media processors, such as graphics cards and sound cards. The earliest graphics cards were comparatively simple: They had memory on the card (buffers) and the user issued commands that copied images from the main memory onto the memory on the card for display. Graphics cards had multiple buffers, which allowed you to draw on one while showing the other and then instantaneously switch over. As graphics cards improved, the number of operations they supported also increased. Each operation offloaded work from the main computer processor onto the graphics card.
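The draw-on-one, show-the-other trick is usually called double buffering. Here is a minimal sketch, assuming a tiny grid of pixel values stands in for the card's memory; the class and method names are hypothetical and not taken from any graphics API.

```python
class DoubleBufferedDisplay:
    """Two buffers: the front one is shown, the back one is drawn on."""

    def __init__(self, width, height):
        self.front = [[0] * width for _ in range(height)]  # currently displayed
        self.back = [[0] * width for _ in range(height)]   # currently being drawn

    def draw_pixel(self, x, y, color):
        # Drawing only ever touches the hidden buffer, so the viewer
        # never sees a half-finished picture.
        self.back[y][x] = color

    def swap(self):
        # Switching over is just exchanging two references; no pixels are copied.
        self.front, self.back = self.back, self.front

    def visible_pixel(self, x, y):
        return self.front[y][x]


display = DoubleBufferedDisplay(width=4, height=3)
display.draw_pixel(1, 2, 255)
before_swap = display.visible_pixel(1, 2)  # still the old picture
display.swap()
after_swap = display.visible_pixel(1, 2)   # the new picture appears all at once
print(before_swap, after_swap)
```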

Eventually, rather than rendering an image to the graphics card, the main computer processor simply described the image to the card, which then rendered it into a 2D image for display. A user would load all of the components into the card (the textures, where things are located in 3D, and the vantage point from which the scene is observed) and the graphics card rendered it. These more advanced graphics processors are known as GPUs, or graphics processing units.

GPU rendering has gotten ever more sophisticated over the years. Eventually, developers recognized that the graphics cards’ ability to render scenes could have other uses. While graphics cards are not as general-purpose as typical processors, many processing tasks that are not graphics-related can be offloaded to them. Much of the processing for Bitcoin mining has been offloaded to graphics cards. The OpenCL language was developed to simplify writing programs that can be offloaded to graphics cards.

How were AI processors invented?

The first AI processors were actually graphics cards. AI is essentially a mass-scale statistics engine that runs numerous computations across a large set of data, much as GPUs do. Therefore, AI researchers realized early on that GPUs could be used to accelerate machine learning.

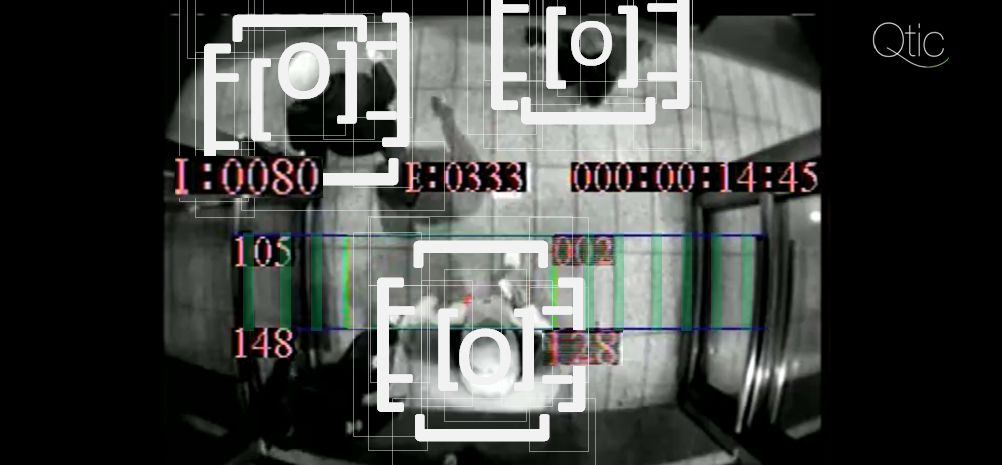

Other types of AI systems, such as computer vision, are even more closely aligned with GPUs. Computer vision is essentially the reverse process of rendering graphics. Rendering graphics takes a scene’s 3D description and turns it into a 2D image. Computer vision takes a 2D image and tries to turn it back into a 3D description. There is a large overlap in the mathematics required for these processes, so GPUs can significantly boost the systems’ performance.
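One small piece of that shared mathematics is perspective projection. The sketch below assumes a simple pinhole camera at the origin looking down the z axis; the function names and the `focal_length` parameter are illustrative choices, not taken from any particular graphics or vision library.

```python
def project(point, focal_length=1.0):
    """Rendering direction: turn a 3D point into a 2D image position."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    # Dividing by depth is what makes distant objects look smaller.
    return (focal_length * x / z, focal_length * y / z)


def unproject(pixel, depth, focal_length=1.0):
    """Vision direction: recover the 3D point, but only if the depth is known."""
    u, v = pixel
    return (u * depth / focal_length, v * depth / focal_length, depth)


near = project((1.0, 2.0, 4.0))
far = project((2.0, 4.0, 8.0))   # twice as far away and twice as large
print(near, far)                 # both land on the same spot in the image
print(unproject(near, depth=4.0))
```

Two different 3D points land on the same 2D position, which is why going backward is the harder direction: the image alone does not say how far away anything is, so a vision system has to estimate the missing depth from other clues.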

However, AI tools tend to need two things that are not often provided by GPUs—increased flexibility and a means of favoring fast arithmetic over accuracy. Because the inputs to AI systems are often fuzzy, the mathematics need not be as precise. Therefore, one way to speed up AI systems is to make tradeoffs that favor speed over precision.
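One common way of trading precision for speed is to store values as small integers instead of full floating-point numbers. The following is a minimal sketch of that idea, assuming a simple symmetric 8-bit scheme with a single scale factor; the function names, the sample weights, and the scale are made up for illustration and do not describe how any specific chip works.

```python
def quantize(values, scale):
    """Map each float to the nearest 8-bit integer code in the range -128..127."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def dequantize(codes, scale):
    """Turn the integer codes back into approximate floats."""
    return [c * scale for c in codes]


weights = [0.12, -0.5, 0.987, 0.3333]  # made-up sample values between -1 and 1
scale = 1 / 127                        # one integer step is worth about 0.0079

codes = quantize(weights, scale)
approx = dequantize(codes, scale)
max_error = max(abs(w - a) for w, a in zip(weights, approx))
print(codes)
print(max_error)
```

Each value is now off by at most half a step, which is negligible when the inputs were fuzzy to begin with, and the arithmetic can be done on small integers, which are much cheaper for hardware to handle.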

Additionally, while regular processors operate on one value at a time, and GPUs generally operate on vectors (multiple values at a time), some AI processors allow for manipulating whole matrices or tensors at a time (a tensor generalizes a matrix to more than two dimensions). Google’s new AI chip, the Edge TPU, is an add-on processor that can be loaded with data and then instructed to do matrix and tensor operations extremely quickly.
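For readers who have not met these terms, the sketch below shows the progression from a single value to a tensor using nested Python lists, along with the matrix multiplication that such chips are built to accelerate. The `matmul` function is a plain illustrative implementation written for this article, not the API of the Edge TPU or any other chip; dedicated hardware performs the same arithmetic with many multiplications happening at once.

```python
scalar = 3                          # a single value
vector = [1, 2]                     # a row of values
matrix = [[1, 2],
          [3, 4]]                   # rows and columns: two dimensions
tensor = [[[1, 2], [3, 4]],
          [[5, 6], [7, 8]]]         # a stack of matrices: three dimensions


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions do not match")
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]


product = matmul(matrix, [[5, 6], [7, 8]])
print(product)

# A tensor operation can be as simple as applying the same matrix
# multiplication to every matrix in the stack.
identity = [[1, 0], [0, 1]]
batched = [matmul(m, identity) for m in tensor]
```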

While Google’s Edge TPU simply offloads tensor calculations, other chips are more involved with the system. They can operate somewhat independently of the main CPU because they have independent sets of instructions. IBM’s now-defunct Cell Processor operated this way. Still other chips are hyper-specialized, application-specific chips. That is, instead of being directed by software, their processes are hardcoded onto the chip.

What future developments might we expect?

For the most part, the current crop of AI chips continues to extend processing power. Essentially, while general microprocessors operate on a very small scale, one value at a time, each generation of co-processor has added on more complex operations on larger and larger sets of values. The early math co-processors worked on scalar (single) values, later co-processors and early GPUs worked on vectors (multiple values at a time), and later GPUs operated on whole matrices (vectors in two dimensions). Now, AI units operate on tensors, which are essentially vectors in more than two dimensions.

At every stage in the development of co-processors, the market settled on at least semi-standard designs and/or frameworks. The current set of AI co-processors has not yet reached a consensus, so each chip is radically different and requires considerable specialized programming. However, some programming tools are available to bridge some of the gaps. Within a few years, the market will settle on a standardized way of offloading tensor operations. My guess is that, due to the very close similarity between graphics and AI operations, AI will remain closely linked to the graphics processing industry. Although they will probably be marketed separately, there will be little difference in function between AI co-processors and GPUs.

*Note: Other instruction sets exist; for example, the PowerPC instruction set was popular in the previous generation of gaming systems, such as the Xbox 360, the Wii, the GameCube, and the PS3 (the recent ones have gone back to x86). Your phone likely uses a processor that understands the ARM instruction set.

Jonathan Bartlett

Jonathan Bartlett is the Research and Education Director of the Blyth Institute.
