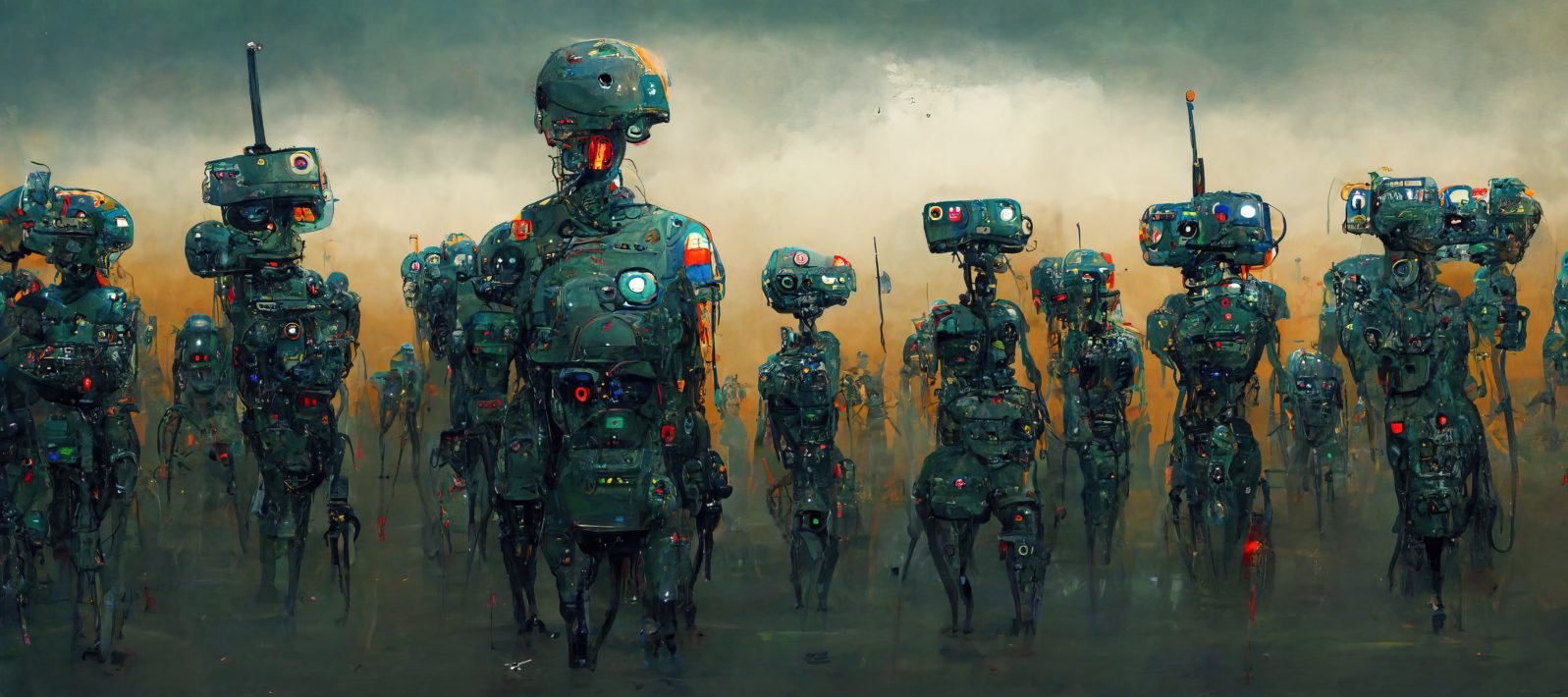

Robots, Drones, and Modern Warfare

Robots might not take over the world as sci-fi movies depict, but AI in modern warfare threatens much destruction

You might remember the blockbuster movie I, Robot (2004) starring Will Smith, who plays a tough-minded homicide detective named Del Spooner in Chicago in the year 2035. Humanoid robots serve humanity and have become incorporated into society. Still, ever since a robot saved Del at the expense of a little girl, he has hated them and believes they will eventually overrun the world.

I, Robot imagines a society in which AI could physically overtake humanity. The technology we’ve created for our own use ends up using us, unto our own destruction.

Movies like I, Robot, Terminator, and others envision sentient, human-like robots that threaten the meaning of being human. But is that the real danger of AI, or does the threat lie elsewhere?

Robotic technology, it turns out, can be easily weaponized. Military drones can be controlled across continents. All one needs to do is punch in some numbers, locate the target, and the “killer robot” is set loose to wreak havoc. We might not think of warfare drones as “robots,” but they are technological devices programmed by humans to do either good or ill, and so fit the bill.

The U.S. has used remote drones for years now. American presidents, from Bush to Obama, have ordered multiple drone strikes in the Middle East. The ethics of such violence continues to provoke debate, but it is nonetheless sobering that this kind of technology is readily available to world powers and is relatively easy to activate.

In fact, much of robotic tech’s power lies in its algorithms, allowing drone-like weaponry to act semi-autonomously. In a Robohub article, Toby Walsh writes,

“On the nightly TV news, we see how modern warfare is being transformed by ever-more autonomous drones, tanks, ships and submarines. These robots are only a little more sophisticated than those you can buy in your local hobby store. And increasingly, the decisions to identify, track and destroy targets are being handed over to their algorithms. This is taking the world to a dangerous place, with a host of moral, legal and technical problems. Such weapons will, for example, further upset our troubled geopolitical situation.”

Toby Walsh, ‘Killer robots’ will be nothing like the movies show – here’s where the real threats lie – Robohub

Drone warfare feels less messy, more impersonal, and thus perhaps more “humane” than the barbarism of the past. But we’re handing over vast amounts of destructive power to AI and haven’t duly calculated the human cost. War is bad enough when men are in trenches shooting at each other, but the death toll rises with each technological development in modern warfare. Historians point out that so many men died in World War I because the machine gun had been invented and military leaders didn’t adapt their strategy accordingly. Soldiers still charged each other out in the open, following the traditional method of combat and getting obliterated in the process.

With robotic warfare at play, it might become ever easier to regard war as a game, controlled by world elites who choose who and what gets destroyed. The farther we’re removed from the “trenches,” the easier it is to ignore the disastrous consequences of violence. Remote, algorithm-led war is also easier to instigate.

In an article from Plough, Samuel Moyn talks about the problem of “humane war” and how it plays out in the great Russian novel War and Peace (a book I’m now reading and would highly recommend). He writes,

“Humanizing war could result in less humane outcomes. It could even foment more war by making it easier to start: a less fateful and momentous choice, because the stakes appear lower.”

Samuel Moyn, Tolstoy’s Case Against Humane War (plough.com)

We might think AI is the natural future of warfare, and it holds out an illusory promise of being more “humane.” Yet we can also see how great a potential it has for widespread violence and destruction.