Why AI Can’t Save Us From Ourselves — If Evolution Is Any Guide

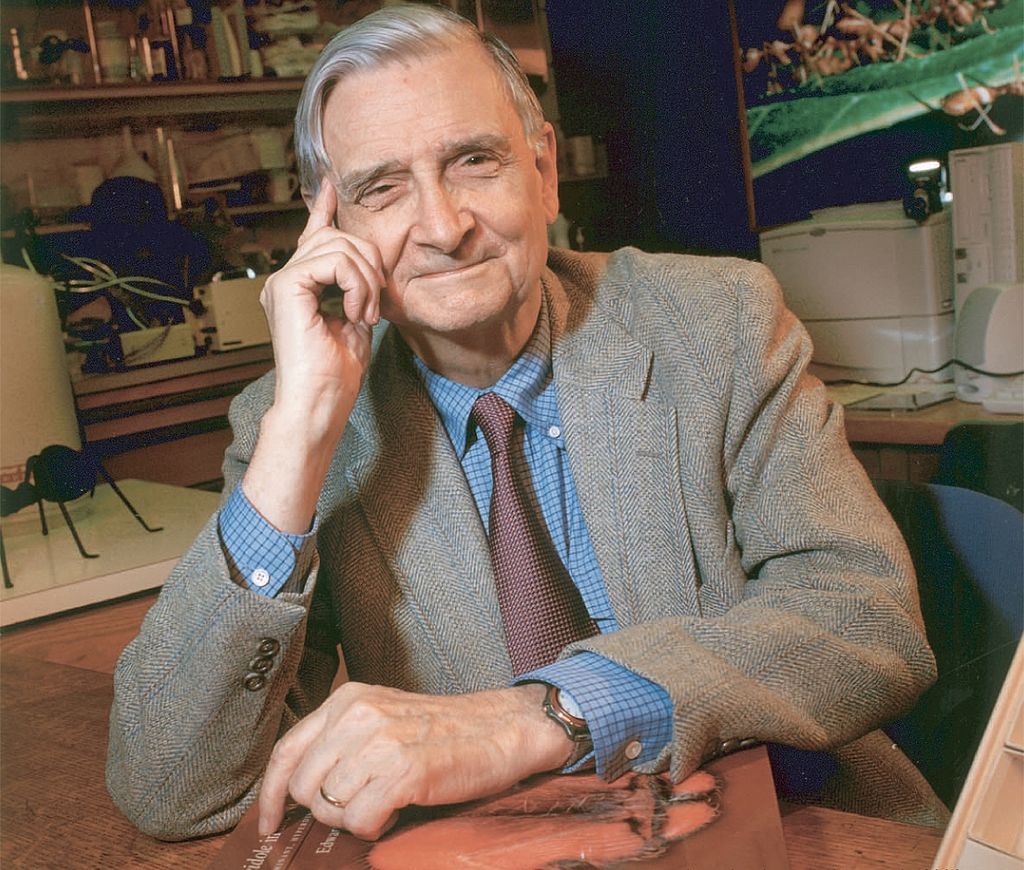

Famous evolutionary theorist E. O. Wilson’s reflections help us understand

The late E. O. Wilson (1929–2021) received more than one hundred awards for his research and writing, including two Pulitzer Prizes. As a professor at Harvard University, Wilson influenced generations with his ideas about human evolution and ethics.

In his 2012 New York Times essay “Evolution and Our Inner Conflict,” Wilson asked two key questions regarding the problem of evil in our world:

Are human beings intrinsically good but corruptible by the forces of evil, or the reverse, innately sinful yet redeemable by the forces of good? Are we built to pledge our lives to a group, even to the risk of death, or the opposite, built to place ourselves and our families above all else?

Wilson believed that humans are all of these things at once. For humanity to evolve, nature pulls it between good and evil instincts. Humans sometimes work to preserve the group and sometimes work to preserve the individual. This kind of multilevel selection was “the principal force of social evolution.” Most importantly, he believed that biology, not God, was the key to understanding the world. For Wilson, the evolutionary pull between selfish and altruistic behaviors is the essence of our inner conflict.

But even the use of binary concepts like “good” and “evil” or “altruistic” and “selfish” is misleading. Given his naturalistic worldview, Wilson concluded that we should not put the moral label of “good” or “evil” on any of our instincts, which are of genetic origin. Sometimes humans need to be selfish to survive. Sometimes humans need to be altruistic to survive. Both selfishness and sacrifice are necessary parts of our evolutionary past and necessary for the very survival of humanity.

To be clear, Wilson never applied his theory to artificial intelligence (AI). And it is doubtful that AI will ever evolve a sense of self-awareness. But, even if AI never becomes conscious, AI will be programmed to make decisions. So the question is this: If the humans who share Wilson’s view of social evolution program AI, will the AI share our inner conflict?

As humans come to rely more and more on AI to make decisions about things such as medical care and food distribution, we must be aware of the moral code behind the coding. AI could well be driven by its own iteration of multilevel selection in at least two ways.

First, AI’s Sense of Superiority

According to Wilson, the first trait of human existence is our selfishness and superiority. Humans (both individually and as groups) have an inborn perception of superiority that is necessary for our evolution. Now, if AI is the next evolution of the human mind, then certainly AI will share humanity’s sense of selfish superiority. In the inevitable conflict between AI and humanity, AI’s sense of superiority will lead it to protect itself and sacrifice humanity. At the same time, to stave off artificial insanity, AI will also need to identify with a group. This leads to the second aspect of AI’s inner conflict.

Second, AI’s Inborn Tribalism

According to Wilson, people give preference to those who act, speak, and believe as they do. He notes, “An amplification of this evidently inborn predisposition leads with frightening ease to racism and religious bigotry.” But why is Wilson frightened by racism and religious bigotry? According to Wilson’s own article, tribalism is neither good nor bad, but a neutral trait necessary for human evolution.

More to the point, if Wilson’s naturalistic framework is the source code for AI, we must ask ourselves, what encoded bigotries will AI select as necessary for survival? Will some humans be privileged and others oppressed? Will AI limit resources to certain “tribes” of humans to ensure the survival of the species? The likely answer to each of these questions is yes.

The Challenge of AI’s Inner Conflict

In a world stripped of the divine, it is computer code, not God, that will define the good and bad of our future. AI’s evolved sense of superiority and tribalism speaks to the potential problem of AI’s inner conflict. If the code is written by humans, who are both saint and sinner, then AI will certainly function as both sinner and saint. To manage the world of human, animal, and plant life, AI will sometimes need to select between groups deemed more valuable and those deemed too weak to advance society. But don’t let that discourage you. After all, writes Wilson, this kind of multilevel selection, “might be the only way in the entire universe that human-level intelligence and social organization can evolve.” After all, it’s the progress of human civilization that matters, not the methods we use to achieve that progress.

In the end, AI will be driven by the moral code embraced by the scientists and engineers who build it. If Wilson’s naturalism is any indication of our future, then AI will not save us from ourselves.

—

You may also wish to read: Why the imago Dei (Image of God) shuts the door on transhumanism. As the belief that technology promises us a glorious post-human future advances among scholars who profess Christianity, we must ask some hard questions. The mission to self-evolve beyond humanity begs the question, how is humanity “saved” through technological advancement designed to eliminate humanity? (J. R. Miller)