Fool’s Gold: Even AI Successes Can Be Failures

Large doses of data, math, and computing power do not make a computer intelligentI recently read this enthusiastic claim by a professional data miner:

Twitter is a goldmine of data…. [T]erabytes of data, combined together with complex mathematical models and boisterous computing power, can create insights human beings aren’t capable of producing. The value that big data Analytics provides to a business is intangible and surpassing human capabilities each and every day.

Anthony Sistilli, “Twitter Data Mining: A Guide to Big Data Analytics Using Python” at Toptal

I was struck by how easily he assumes that large doses of data, math, and computing power make computers smarter than humans. He is hardly alone, but he is badly mistaken.

Computer algorithms are really, really good at making mathematical calculations and identifying statistical patterns (what Turing winner Judea Pearl calls “just curve fitting”) but, confined to Math World, they are not intelligent in any meaningful sense of the word. Computers are still nowhere close to having the commonsense, wisdom, and critical thinking skills that humans acquire from experiencing the real world.

This AI enthusiast cited a study of the Brazil 2014 presidential election as an example of how data mining Twitter data “can provide vast insights into the public opinion.” The author of the Brazilian study wrote that,

Given this enormous volume of social media data, analysts have come to recognize Twitter as a virtual treasure trove of information for data mining, social network analysis, and information for sensing public opinion trends and groundswells of support for (or opposition to) various political and social initiatives.

Elder Santos, “Data Mining for Predictive Social Network Analysis” at Adalyz

Brazil’s 2014 presidential election was held on October 5, 2014, with Dilma Rousseff receiving 41.6 percent of the vote and Aécio Neves 33.6 percent. As no one received more than 50 percent, a runoff election was held three weeks later, on October 26, which Rousseff won 51.6 percent to 48.4 percent.

To demonstrate the power of data mining social media, the research study mined the words used in the top ten Twitter Trend Topics in 14 large Brazilian cities on October 24, 25, and 26 in order to predict the winner in each city. It sounds superficially plausible, but there are plenty of reasons for caution. Those who tweet the most are not necessarily a random sample of voters. Many are not even voters. Many are not even people, but bots unleashed by businesses, governments, and scoundrels. Instead of reflecting the opinions of voters, the Twitter data may mainly reflect the attempts of some to manipulate voters.

And then we have the problem of deciding which words are positive and which are negative, which is not answered convincingly by data mining words after the election is over.

How well did it do? The study proudly reported a 60 percent accuracy rate. An old English proverb is that, “Nothing is good or bad, but by comparison.” That’s why, in medical research, the most compelling evidence is generally provided by a randomized controlled trial that separates the subjects randomly into a treatment group that receives the medication and a control group that does not. Without a control group, we don’t know if changes in the patients’ conditions are due to the treatment or to our bodies’ natural response to ailments.

Randomization is an important part of the process. If we simply compare people who choose to take a treatment with those who do not, there can be self-selection bias in that the people who choose the treatment are younger, healthier, or differ in other ways from those who do not. This is why well-designed medical studies divide the subjects randomly into the control and treatment groups and are also double-blind in that neither the patients or the doctors know which patients are in the treatment group — otherwise, the patients and doctors might be inclined to see more success than actually occurred.

It is also important that the control group provides a relevant comparison. For example, the original power-pose study had many problems, including a dodgy control group.

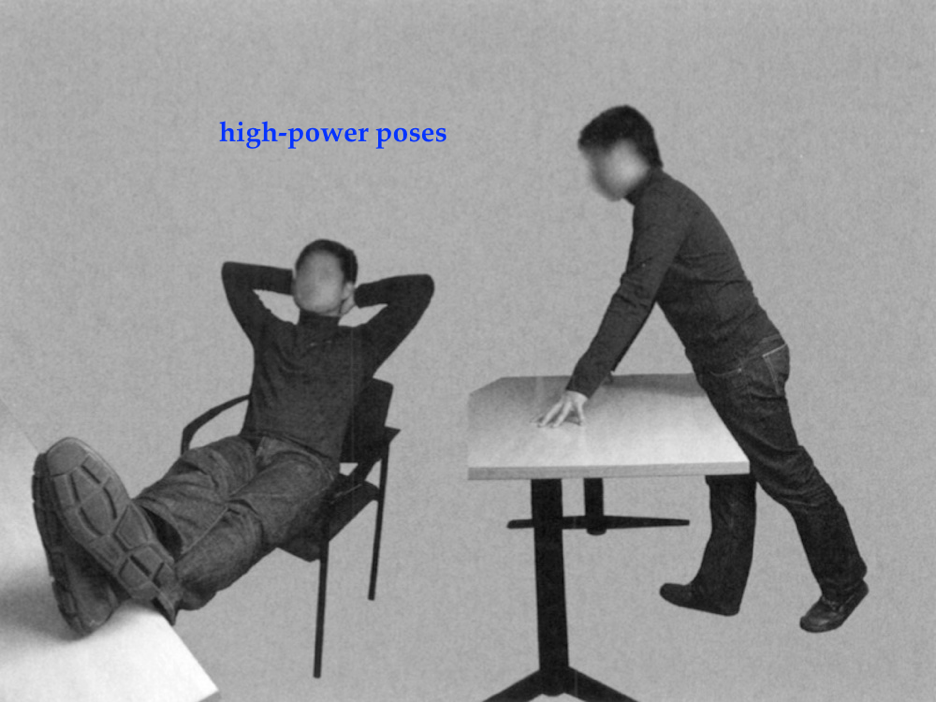

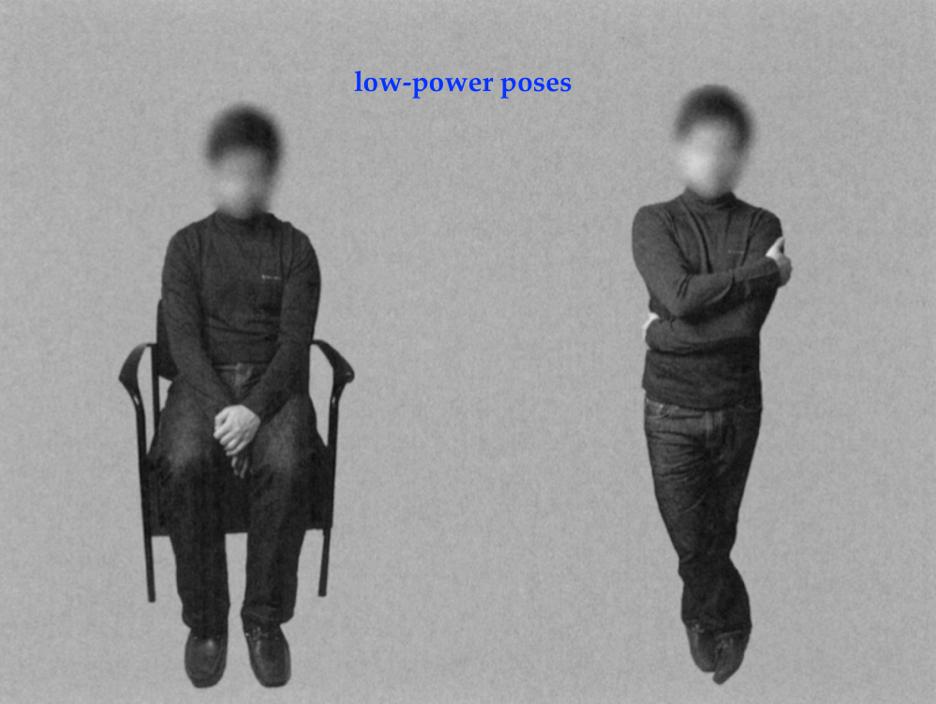

The researchers had 42 people assume two positions for one minute each — either the high-power poses or low-power poses shown below. However, when one of the authors, Amy Cuddy, gave a widely watched TED Talk, a giant screen projected a picture of Wonder Woman standing with her legs spread and hands on her hips while Cuddy tells her audience, “Before you go into the next evaluative situation, for two minutes, try doing this in an elevator, in a bathroom stall, at your desk behind closed doors.”

She advises high-power posing rather than a normal body position, but the study compared high-power with low-power poses. Perhaps any differences in power feelings found in the study were due to the negative effects of crossing one’s arms and legs rather than the positive effects of spreading one’s arms and legs. In medical research, this is known as the “poison medicine” problem — if sick people take a medicine and live while others drink poison and die, this doesn’t mean that the medicine is beneficial.

In the Brazilian election study, the AI analysis of Twitter trends was 60 percent accurate in predicting the winner in 14 large Brazilian cities. This struck me as a surprisingly modest success since I live in the United States, where most big cities reliably vote for one party or the other. It is not at all difficult to predict how Boston, Chicago, and New York will vote in presidential elections. With a bit of work (I don’t read or speak Portuguese), I found the voting results in the first and second rounds of the 2014 Brazil presidential election for the 14 cities in this study. If we had simply predicted that whoever did better in the October 5 vote would win the October 26 runoff, the accuracy would have been 93 percent.

Despite the superficial appeal of using super-fast computers to data mine mountains of data, this AI model’s discoveries are once again fool’s gold.