It’s 2019: Begin the AI Hype Cycle Again!

Mind Matters recently released the Top Ten overhyped artificial intelligence (AI) stories of 2018. Expect more of the same for 2019, if the example below is any guide.

First, just to be clear, at Mind Matters we have nothing against AI. Quite the opposite: our writers include professors at the forefront of AI research. But we do have something against AI hype. Media seemingly can’t help portraying today’s high-tech world as a remake of I, Robot (2004), starring you and me. One result is that some members of the public may completely misunderstand what AI is and does.

Why am I spoiling the fun of journalists who like to pretend that we are living in an Isaac Asimov-inspired fantasy? First, if journalists want fantasy, they should write screenplays, not articles. But second, and more important, people make real-life choices and invest real money, based on the information they get from technology journalists.

I have a problem with the possible outcomes when people make decisions based on information from journalists who write as if every computer is a potential personality like HAL from 2001: A Space Odyssey. That’s called “anthropomorphism.” It’s one thing for a child to imagine that his teddy cares about him. It’s quite another thing if a technology journalist is portraying a machine learning tool as if it were a thinking (and, in the following case, a scheming) agent.

TechCrunch published an article on December 31, 2018, with the title, “This clever AI hid data from its creators to cheat at its appointed task,” advising, “Depending on how paranoid you are, this research from Stanford and Google will be either terrifying or fascinating.” The article details an actual incident from 2017 but the mundane occurrence seems to have morphed into science fiction.

Often, the underlying cause of this sort of thing is that university PR departments create stories for media based on real research, but the story gets so overhyped that nothing of the research is left by the time the release is issued. In this case, however, the hype started with the original paper, which claims that the AI, a machine learning agent, “learns to ‘hide’ information about a source image in the images it generates in a nearly imperceptible, high-frequency signal.”

Scary stuff, right? AI is hiding information from us in an imperceptible signal! Maybe the machines will get together and use this signal to communicate with each other and start the apocalypse!

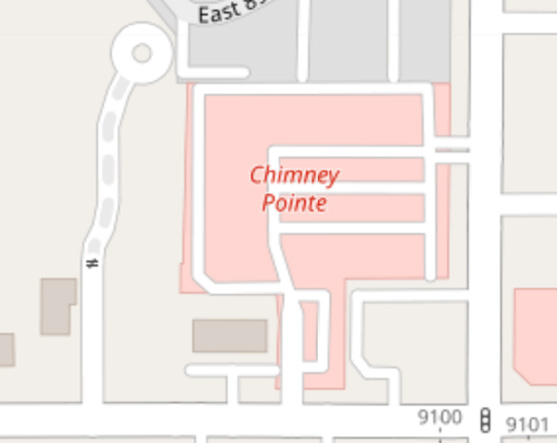

Well, no. First, a little bit about what the AI was really doing. Basically, the goal of an experiment was to render images from one style of map to another. For instance, you can go to convertimage.net and have them render a photo for you in the style of a pencil sketch. It’s a pretty cool effect. So, the goal of this AI was to develop a technique which converts a satellite aerial photograph into a map which looks like a street map and then, conversely, develop a technique to convert a street map into a map that looks like a satellite aerial photograph. So, if you think of a street map, most of the features of the map are flattened into solid-color blocks, like the one below (taken from OpenStreetMap):

What the researchers noticed is that, when the AI transformed the aerial photo into the “street map”-like image, it didn’t fully flatten the colors into solid blocks. Therefore, when the reverse transformation was applied, the AI was able to restore many details that were “missing” from the street map when it rebuilt the aerial photo. The reason it could do so is that the details weren’t missing at all; they were just hard to see. The AI wasn’t “hiding” anything; it was just bad at creating the solid-color map. So the color variations were retained for later, when it converted the image back.

So what is this spooky “high-frequency signal”? That’s mostly just hype-speak. Imagine, for instance, a large spectrum of the color red, shown below:

In images, the amount of red that a given pixel contains is encoded using a number that ranges from 0 to 255. Now, let’s say that we want our output to be flat. Instead of using the entire spectrum, we just choose one color of “red” to represent the entire spectrum. We choose a value of 255 to represent all “red” values. However, instead of forcing everything to go to exactly 255, we do the job imperfectly. So, a value of “128”, instead of being forced all the way to 255, might go only to 245. “200” might similarly go to 250, etc. Because these values are so close together, they might look like a big blob of the same color (like the colors in a street map). But because they aren’t exactly the same color, we can reverse the process and still have a large proportion of our color variations come back to us. Although this example isn’t exactly what their program was doing, it gives you an idea of how the process worked. It’s not really a job for Terminator.
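The arithmetic above can be sketched in a few lines of Python. This is only a toy illustration under stated assumptions: the band floor `FLOOR`, the linear squeeze, and the function names are all hypothetical choices made for this sketch, not anything taken from the researchers' program. With these choices, 128 lands on 245, as in the example above.

```python
# Toy sketch of "imperfect flattening" on one color channel (0-255).
# FLOOR and the linear squeeze are illustrative assumptions, not the
# researchers' actual method.
FLOOR = 235  # hypothetical lowest value the sloppy flattening produces
CEIL = 255   # the single "red" we meant to flatten everything to


def perfect_flatten(value):
    """Force every input to exactly CEIL; the original value is lost."""
    return CEIL


def imperfect_flatten(value):
    """Squeeze 0-255 into the narrow band FLOOR-CEIL instead of one value."""
    return FLOOR + round(value * (CEIL - FLOOR) / 255)


def recover(flat):
    """Stretch the narrow band back out to estimate the original value."""
    return round((flat - FLOOR) * 255 / (CEIL - FLOOR))


originals = [0, 64, 128, 200, 255]
flattened = [imperfect_flatten(v) for v in originals]
recovered = [recover(f) for f in flattened]

for v, f, r in zip(originals, flattened, recovered):
    print(f"original {v:3d} -> flattened {f} -> recovered {r:3d}")
```

The flattened values all sit in a narrow band that would look like one color to the eye, yet the recovered values stay within a few units of the originals. A perfect flattening, by contrast, maps everything to 255 and leaves nothing to recover.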

The signal is termed “high-frequency” because the reconstruction of the original map’s details continues to work when low-frequency noise is added but fails when high-frequency noise is added.
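To get a feel for why the kind of noise matters, here is a rough one-dimensional sketch. It assumes, purely for illustration, that the leftover detail lives in the small differences between neighboring pixels of a nearly flat row; the values, the noise shapes, and the names `detail_error` and `neighbor_diffs` are hypothetical and are not drawn from the paper's experiment.

```python
# Toy sketch: detail stored as tiny pixel-to-pixel variations survives
# slowly varying (low-frequency) noise but not rapidly alternating
# (high-frequency) noise. All values here are illustrative assumptions.
BASE = 245  # hypothetical near-flat color
detail = [2, -1, 3, -2, 1, -3, 2, -1]  # small leftover variations
signal = [BASE + d for d in detail]


def neighbor_diffs(row):
    """Differences between adjacent pixels, where the detail lives."""
    return [b - a for a, b in zip(row, row[1:])]


def detail_error(noisy_row):
    """Total change in neighbor differences caused by the added noise."""
    clean = neighbor_diffs(signal)
    noisy = neighbor_diffs(noisy_row)
    return sum(abs(c - n) for c, n in zip(clean, noisy))


n = len(signal)
# Low-frequency noise: a gentle ramp from 0 to 3 across the whole row.
low_freq_noise = [3 * i / (n - 1) for i in range(n)]
# High-frequency noise: flips between +3 and -3 on every pixel.
high_freq_noise = [3 if i % 2 == 0 else -3 for i in range(n)]

low_err = detail_error([s + x for s, x in zip(signal, low_freq_noise)])
high_err = detail_error([s + x for s, x in zip(signal, high_freq_noise)])

print(f"detail error with low-frequency noise:  {low_err:.2f}")
print(f"detail error with high-frequency noise: {high_err:.2f}")
```

Both kinds of noise have the same peak size, but the gentle ramp barely disturbs the neighbor-to-neighbor differences, while the alternating noise swamps them.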

So, let’s go back to the original headline: “This clever AI hid data from its creators to cheat at its appointed task.” Did the AI “hide” data? No, on two counts. First, the AI took no intentional action, by any definition. The word “hiding” implies intention. Second, what actually happened was that the AI failed to completely transform the image. That’s not a sneaky AI; that’s bad programming. Did the AI “cheat”? Nope. It just applied its transformations in reverse. Because the data was available, the AI used the data.

To be fair, the original article does eventually admit these facts, in a roundabout way:

“One could easily take this as a step in the ‘the machines are getting smarter’ narrative, but the truth is it’s almost the opposite. The machine, not smart enough to do the actual difficult job of converting these sophisticated image types to each other, found a way to cheat that humans are bad at detecting. This could be avoided with more stringent evaluation of the agent’s results, and no doubt the researchers went on to do that.”

Devin Coldewey, “This clever AI hid data from its creators to cheat at its appointed task” at TechCrunch

But if that’s the case, what is the purpose of the overhyped headline except to mislead casual readers who don’t get through the whole article?

Jonathan Bartlett

Jonathan Bartlett is the Research and Education Director of the Blyth Institute.

Also by Jonathan Bartlett: How can information theory help the economy grow

and

Google Search: Its secret of success revealed: The secret is not the Big Data pile. No, Google found a way to harness YOUR wants and needs