Do either machines—or brains—really learn?

A further response to Jeffrey Shallit: Actually, brains don’t learn either. Only minds learn.

Jeffrey Shallit

The philosophical underpinnings of modern neuroscience are a compendium of fallacies. The salient fallacy is the mereological fallacy, the confusion of the part for the whole. Dr. Jeffrey Shallit, a computer scientist who believes that machines have minds and can “learn,” provides an example of this fallacy. In critiquing my analogy between machine learning and a book that opens on specific pages more easily because of repeated use, Shallit writes:

Egnor claims that computers “don’t have minds, and only things with minds can learn”. But he doesn’t define what he means by “mind” or “learn”, so we can’t evaluate whether this is true.

Very well. “Mind” is the ability to have thoughts. Thoughts are absolutely distinguished from matter in that thoughts are always about something else but matter is never inherently about anything other than itself. A rock or a pencil is just a rock or a pencil, whereas my thought about my cat or about the White House is about the cat or the White House. This fundamental difference between thought and matter was noted in modern times by Franz Brentano, a 19th-century philosopher, although this property of thought, technically called ‘intentionality’, has been recognized by philosophers since the time of Plato and Aristotle.

Knowledge is thought that corresponds to reality and learning is the acquisition of new knowledge. Only living things with minds can learn because only living things have minds and only living things that have minds can have knowledge. Any attribution of mind or thought or learning to inanimate objects is merely metaphorical.

Shallit then stumbles into the mereological fallacy:

Most people who actually work in machine learning would dispute his claim [that machines don’t have minds]. And Egnor contradicts himself when he claims that machine learning programs “are such that repeated use reinforces certain outcomes and suppresses other outcomes”, but that nevertheless this isn’t “learning”. Human learning proceeds precisely by this kind of process, as we know from neurobiology.

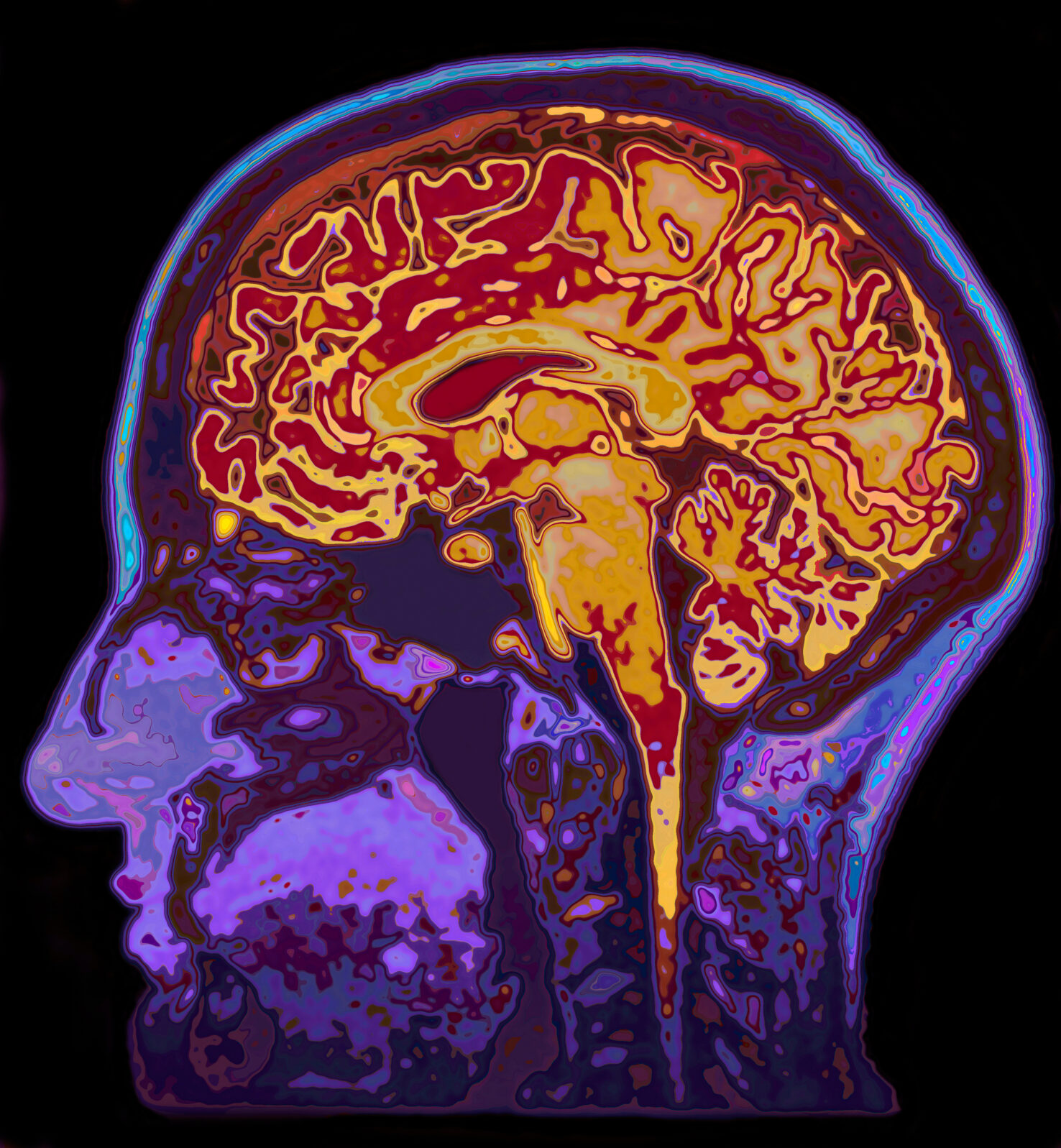

Shallit implies that the reinforcement and suppression of neural networks in the brain that accompanies learning means that brains, like machines, learn. He is mistaken. Brains are material organs that contain neurons, glia, and a host of other cells and substances. Brains have action potentials and neurotransmitters.

Brains are extraordinarily complex, and brain function is a necessary condition for ordinary mental function.

But brains don’t have minds, and brains don’t have knowledge, and brains don’t learn. Reinforcement and suppression of neural networks in the brain are not learning. They are a necessary condition for learning, but learning is an ability of human beings, considered as a whole, to acquire new knowledge, not an ability of human organs considered individually. Human organs don’t “know” or “learn” anything. This error is the mereological fallacy. It is the same mereological fallacy to say that my brain learns as it is to say that my lungs breathe or my legs walk. I learn and I breathe and I walk, using my brain and lungs and legs.

And it is just as much a fallacy to say that machines learn. Human beings learn, using brains, eyes, hands, and books—and machines. We use many things to learn, but only we do the learning, not our organs nor our tools.

Also by Michael Egnor: A computer scientist responds to my parable: Jeffrey Shallit argues that a computer is not just a machine, but something quite special

Can machines really learn? A parable of a book that learned

Neurosurgeon outlines why machines can’t think.

and

The brain is not a “meat computer”: Dramatic recoveries from brain injury highlight the difference

Dr. Egnor is a neurosurgeon, professor of Neurological Surgery and Pediatrics and Director of Pediatric Neurosurgery, Neurological Surgery, Stony Brook School of Medicine.