We Trust Nonsense From Lab Coats More Than From Gurus

This shocking study is relevant to how we decide what to believe from science sources about COVID-19

An international team of researchers staged a revealing experiment on who we believe when they are talking nonsense. The test of 10,195 participants from 24 countries asked questions about the credibility of the statements and about their personal degree of religiosity.

How could the researchers be sure that the statements were nonsense? They were produced by the New Age Bullshit Generator, an algorithm that generates impressive-sounding sentence fragments that make rough grammatical sense even if they make no other sense.

Two statements were selected:

The 10,195 participants in the experiment were presented with two meaningless but profound-sounding statements: “We are called to explore the cosmos itself as an interface between faith and empathy. We must learn how to lead authentic lifes in the face of delusion. It is in refining that we are guided.” and “Yes, it is possible to exterminate the things that can confront us, but not without hope on our side. Turbulence is born in the gap when transformation has been excluded. It is in evolving that we are re-energized.” One claim was attributed to a scientist and the other to a spiritual guru. Participants were then asked to indicate on a 7-point scale to what extent they found the claims credible.

University of Amsterdam, “Study: Scientists carry greater credibility than spiritual gurus” at Phys.org (February 8, 2022) The paper is open access.

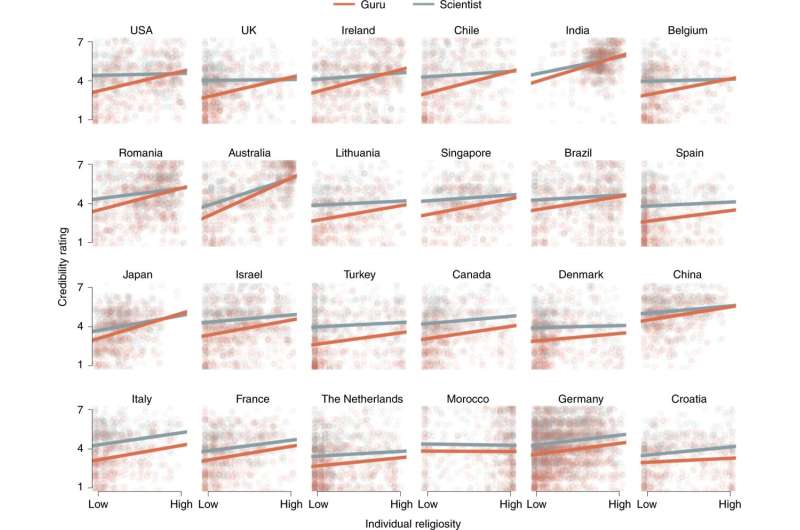

The results suggest that people generally find statements more credible if they come from a scientist when compared to a spiritual guru, with 76 percent of participants rating the ‘scientist’s’ balderdash at or above the midpoint of the credibility scale, compared with 55 percent for the ‘guru’.

Additionally, individuals who scored high for religiosity still showed a preference for the statement from the scientist compared to the spiritual guru; however, it was relatively weaker than the general sample. Religious individuals also gave higher credibility judgments to gurus compared to the general sample but were still lower than the scientist.

Conor Feehly, “The Einstein Effect: People Trust Nonsense More if They Think a Scientist Said It” at ScienceAlert (February 13, 2022) The paper is open access.

The study’s findings have implications for the question of why we decide to believe claims about COVID-19:

The corona crisis has recently brought the subject of the credibility of science to the fore. Does keeping 1.5 meters apart really work? Are vaccinations safe? Does wearing a face mask help? In many cases, the answers to such questions ultimately boil down to who we trust most and consider the most credible authority. Should we take the word of an anti-vaxxer or listen to the national health authorities to guide our beliefs and behavior regarding the virus? “In our research, we looked at the influence that the source of the information has on its credibility, apart from the content of the information itself. We did that with a simple experiment using nonsense claims. They were not about corona, but our findings are also very relevant to the debates around corona,” says UvA psychologist Suzanne Hoogeveen, who led the study.

University of Amsterdam, “Study: Scientists carry greater credibility than spiritual gurus” at Phys.org (February 8, 2022)

The fact that many people are willing to believe scientists even if they are talking nonsense doesn’t help us evaluate strategies for fighting COVID-19.

The effect the researchers identified has been called the “Einstein effect,” where “trusted sources of information are given the benefit of the doubt because of the social credibility they possess.” An evolutionary origin is claimed:

“From an evolutionary perspective, deference to credible authorities such as teachers, doctors, and scientists is an adaptive strategy that enables effective cultural learning and knowledge transmission. Indeed, if the source is considered a trusted expert, people are willing to believe claims from that source without fully understanding them,” state the researchers.

Conor Feehly, “The Einstein Effect: People Trust Nonsense More if They Think a Scientist Said It” at ScienceAlert (February 13, 2022) The paper is open access.

It’s not clear, however, how acceptance of randomly generated nonsense is an adaptive strategy. Yet the researchers seem oddly comfortable with that aspect of their findings:

‘Our findings suggest that regardless of one’s worldview, science is seen worldwide as a powerful indicator of the reliability of information. In these times when there is a lot of talk about scepticism with regard to, for example, climate change and vaccinations, that is hopefully reassuring,’ says Hoogeveen.

University of Amsterdam, “Study: Scientists carry greater credibility than spiritual gurus” at Phys.org (February 8, 2022)

If science is seen as “a powerful indicator of the reliability of information,” even when the information is nonsense, then it will certainly be easier for some to market nonsense as science than has been supposed.

The researchers also suggested that people might appreciate incomprehensible statements on account of, rather than in spite of, their obscurity (the “Guru effect”). That’s not good news for people who want to communicate science clearly.

Why did the researchers use the term “guru”?

In the current study, the authors chose to contrast ‘scientist’ with ‘spiritual guru’ instead of ‘religious leader’, because they wanted to make sure they selected an authority that wasn’t specific to any particular religion, given that the study was taking place across different countries.

Conor Feehly, “The Einstein Effect: People Trust Nonsense More if They Think a Scientist Said It” at ScienceAlert (February 13, 2022) The paper is open access.

But wait. In English-speaking cultures, the term “guru” can have an unserious connotation: “my fashion guru,” “my steak guru,” etc. That may well have affected the research results.

The main problem with uncritical trust in science is that it gets science wrong. Science is, among other things, a process of error correction. The information purveyed by a science-based source at any given time can vary significantly in reliability — as issues around, say, anti-COVID-19 face masks in schools demonstrate.

A preemptive preference for information that is supposed to come from science might not serve the user well. Claims about what “science shows” may prove no more reliable than claims about what “religion reveals.” That’s part of why the Royal Society (Britain’s version of the National Academy of Sciences) came out against social media censorship of alternative viewpoints, with the COVID-19 issue clearly in mind.

You may also wish to read: Royal Society: Don’t censor misinformation; it makes things worse. While others demand crackdowns on “fake news,” the Society reminds us that the history of science is one of error correction. Much COVID news later thought to need correction was in fact purveyed by official sources, not blogs or Facebook or Twitter accounts.