What Are the “Architects of Intelligence” Actually Designing?

Even their polite disagreements are fairly substantial

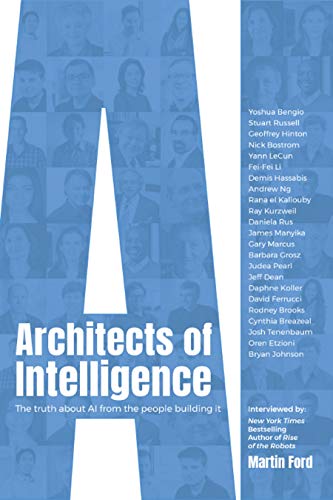

The reviewer for Spectrum, the magazine of the Institute of Electrical and Electronics Engineers (IEEE), observed that Martin Ford’s Architects of Intelligence (2018), a collection of interviews with 23 AI experts, illustrates the lack of consensus about where AI is going:

While it’s probably safe to say that most are generally optimistic about AI (frequently with some caveats), there’s strong disagreement about both what the pace of AI development is, as well as what it should be, and what needs to happen to take us to the next stage of AI. But that’s precisely why this book is so good: it’s these areas of disagreement that best define what the current state of AI really is, in a way that you’d never be able to get from some sort of generalized distillation or summary. If you’re interested in an accurate, honest view of the current state of artificial intelligence, Martin’s book is a must-read.

Evan Ackerman, “Book Review: Architects of Intelligence” at IEEE Spectrum

He seems to be saying that the field is best defined by its areas of disagreement. A snippet from Rodney Brooks, Professor of Robotics (emeritus) at MIT and founder of Rethink Robotics, illustrates Ackerman’s point: “I spend a large part of my life telling people that they are being delusional when they see videos and think that great things are around the corner, or that there will be mass unemployment tomorrow due to robots taking over all of our jobs.” Spectrum was permitted to republish some excerpts from Architects, which you will find at the review.

We also learn from Ackerman that Ford asked his experts to guess informally the year by which there would be a 50% chance that artificial general intelligence (human-level AI) had been created. Responses ranged from 2029 (Ray Kurzweil) to 2200 (Rodney Brooks). Larger surveys have suggested 2040–2050 but, we are told, they may have included less expert participants. In short, the top experts not only don’t agree with the 2040–2050 consensus but also differ wildly from each other in their disagreement. That’s good to know when we hear tales of Robogeddon! and the AI Apocalypse.

Another reviewer has pointed out a lesser but substantial area of disagreement:

Some of the researchers Ford spoke to said we have most of the basic tools we need, and building an AGI will just require time and effort. Others said we’re still missing a great number of the fundamental breakthroughs needed to reach this goal. Notably, says Ford, researchers whose work was grounded in deep learning (the subfield of AI that’s fueled this recent boom) tended to think that future progress would be made using neural networks, the workhorse of contemporary AI. Those with a background in other parts of artificial intelligence felt that additional approaches, like symbolic logic, would be needed to build AGI. Either way, there’s quite a bit of polite disagreement.

“Some people in the deep learning camp are very disparaging of trying to directly engineer something like common sense in an AI,” says Ford. “They think it’s a silly idea. One of them said it was like trying to stick bits of information directly into a brain.”

James Vincent, “This is when AI’s top researchers think artificial general intelligence will be achieved” at The Verge

A key problem may be deciding just what “common sense” is. As Vincent complains, “To date, we’ve built countless systems that are superhuman at specific tasks, but none that can match a rat when it comes to general brain power.”

Does it matter that a rat is expert at, if nothing else, saving its own skin?

Ford explained recently that he undertook the book to focus attention away from hype and fearmongering, toward the real issues raised by AI as understood by actual experts:

Warnings that robots will soon be weaponized, or that truly intelligent (or superintelligent) machines might someday represent an existential threat to humanity, are regularly reported in the media. A number of very prominent public figures — none of whom are actual AI experts — have weighed in. Elon Musk has used especially extreme rhetoric, declaring that AI research is “summoning the demon” and that “AI is more dangerous than nuclear weapons.” Even less volatile individuals, including Henry Kissinger and the late Stephen Hawking, have issued dire warnings.

Martin Ford, “The Truth About AI From the People Building It” at Medium

He hopes to focus more attention on questions like “Is there a role for government regulation?” “Will AI unleash massive economic and job market disruption, or are these concerns overhyped?” and “Should we worry about an AI ‘arms race,’ or that other countries with authoritarian political systems, particularly China, may eventually take the lead?”

His own view, as author of Rise of the Robots: Technology and the Threat of a Jobless Future (2015), is that “we will inevitably see rising inequality and quite possibly outright unemployment, at least among certain groups of workers.” A scary thought but not an apocalypse. We’ve been there many times. Jay Richards likes to point out that few people are doing manual labor on farms today, as most people did 150 years ago — yet unemployment is currently low.

Perhaps one reason Rodney Brooks is so busy calming people’s fears is that future apocalypses offer a hidden benefit: Whether they ever happen or not, they distract us from critical thinking about present-day issues. The noise from Robogeddon! may drown out the fact that robots cannot steal nearly as many jobs as poorly thought-out economic policies do. Unfortunately, economics is boring compared to apocalypses.

Next: Possible Minds?: But What If the Minds Are IMpossible? Suppose we actually can’t create thinking AI? How would THAT change the world?

See also: Artificial Intelligence: Prophets in Conflict. A total of 48 AI experts tell us what it all means, but their predictions strongly disagree.