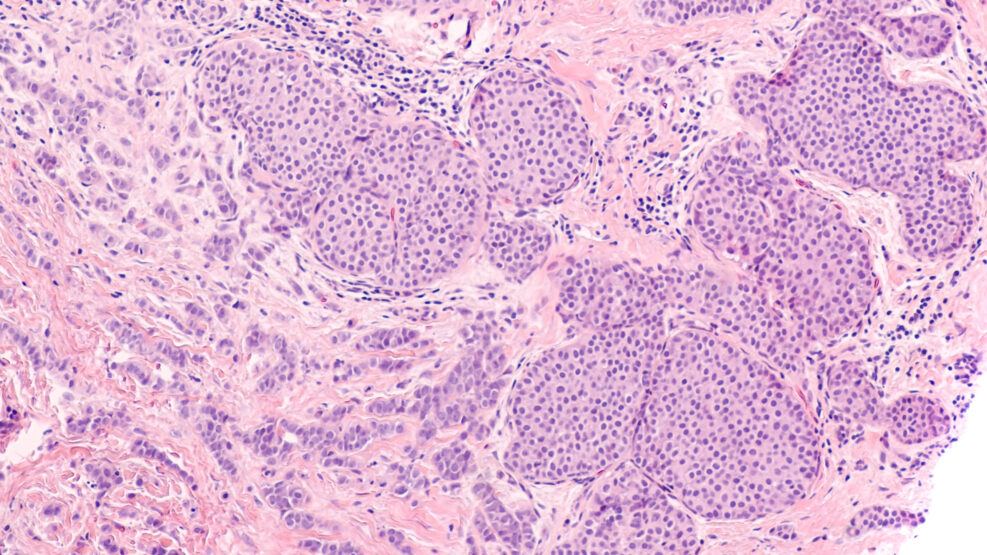

Is AI really better than physicians at diagnosis?

The British Medical Journal found a serious problem with the studies

Of 83 studies of the performance of deep learning algorithms on diagnostic images, only two had been randomized, as is recommended, to prevent bias in interpretation.
